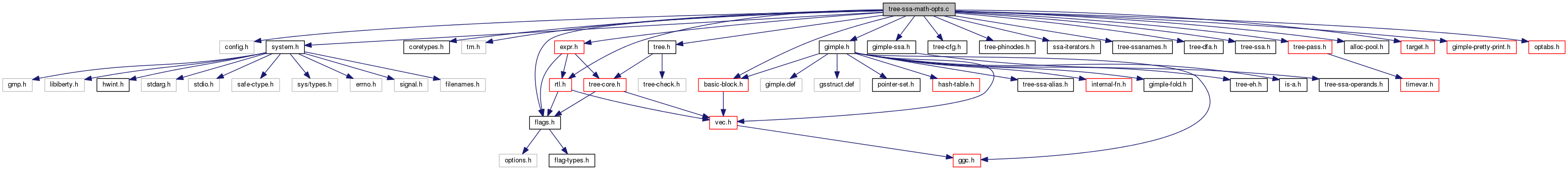

#include "config.h"
#include "system.h"
#include "coretypes.h"
#include "tm.h"
#include "flags.h"
#include "tree.h"
#include "gimple.h"
#include "gimple-ssa.h"
#include "tree-cfg.h"
#include "tree-phinodes.h"
#include "ssa-iterators.h"
#include "tree-ssanames.h"
#include "tree-dfa.h"
#include "tree-ssa.h"
#include "tree-pass.h"
#include "alloc-pool.h"
#include "basic-block.h"
#include "target.h"
#include "gimple-pretty-print.h"
#include "rtl.h"
#include "expr.h"
#include "optabs.h"

Data Structures | |

| struct | occurrence |

| struct | symbolic_number |

Macros | |

| #define | POWI_MAX_MULTS (2*HOST_BITS_PER_WIDE_INT-2) |

| #define | POWI_TABLE_SIZE 256 |

| #define | POWI_WINDOW_SIZE 3 |

Variables | |

| struct { | |

| int rdivs_inserted | |

| int rfuncs_inserted | |

| } | reciprocal_stats |

| struct { | |

| int inserted | |

| } | sincos_stats |

| struct { | |

| int found_16bit | |

| int found_32bit | |

| int found_64bit | |

| } | bswap_stats |

| struct { | |

| int widen_mults_inserted | |

| int maccs_inserted | |

| int fmas_inserted | |

| } | widen_mul_stats |

| static struct occurrence * | occ_head |

| static alloc_pool | occ_pool |

| static const unsigned char | powi_table [POWI_TABLE_SIZE] |

Macro Definition Documentation

| #define POWI_MAX_MULTS (2*HOST_BITS_PER_WIDE_INT-2) |

To evaluate powi(x,n), the floating point value x raised to the constant integer exponent n, we use a hybrid algorithm that combines the "window method" with look-up tables. For an introduction to exponentiation algorithms and "addition chains", see section 4.6.3, "Evaluation of Powers" of Donald E. Knuth, "Seminumerical Algorithms", Vol. 2, "The Art of Computer Programming", 3rd Edition, 1998, and Daniel M. Gordon, "A Survey of Fast Exponentiation Methods", Journal of Algorithms, Vol. 27, pp. 129-146, 1998.

Provide a default value for POWI_MAX_MULTS, the maximum number of multiplications to inline before calling the system library's pow function. powi(x,n) requires at worst 2*bits(n)-2 multiplications, so this default never requires calling pow, powf or powl.
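The 2*bits(n)-2 bound can be checked with a small stand-alone sketch. It counts the multiplications used by plain left-to-right square-and-multiply, which is a simpler scheme than the hybrid algorithm described here; the function names are hypothetical and are not part of GCC.

```c
#include <stdint.h>

/* Number of significant bits in n (0 for n == 0).  */
static int bit_length(uint64_t n)
{
    int bits = 0;
    while (n != 0) {
        bits++;
        n >>= 1;
    }
    return bits;
}

/* Multiplications used by plain left-to-right square-and-multiply:
   one squaring for every bit below the top bit, plus one extra
   multiply for every set bit below the top bit.  */
static int binary_powi_mults(uint64_t n)
{
    int squarings = 0, multiplies = 0;
    int bits = bit_length(n);
    for (int i = bits - 2; i >= 0; i--) {
        squarings++;
        if ((n >> i) & 1)
            multiplies++;
    }
    return squarings + multiplies;
}

/* The worst-case bound quoted in the text: 2*bits(n) - 2.  */
static int powi_mult_bound(uint64_t n)
{
    return n == 0 ? 0 : 2 * bit_length(n) - 2;
}
```

The bound is reached when every bit of n is set, for example n = 15 needs 3 squarings and 3 multiplies. With a 64-bit HOST_WIDE_INT, the default POWI_MAX_MULTS works out to 126.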

| #define POWI_TABLE_SIZE 256 |

The size of the "optimal power tree" lookup table. All exponents less than this value are simply looked up in the powi_table below. This threshold is also used to size the cache of pseudo registers that hold intermediate results.

Referenced by powi_lookup_cost().

| #define POWI_WINDOW_SIZE 3 |

The size, in bits, of the window used in the "window method" exponentiation algorithm. This is equivalent to a radix of (1<<POWI_WINDOW_SIZE) in the corresponding "m-ary method".
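As an illustration of the "m-ary method" with a 3-bit window, the following sketch evaluates x**n at run time by consuming the exponent three bits at a time. It is a plain fixed-window variant written for this page under the assumption of double operands; it does not reproduce GCC's hybrid of sliding windows and the power-tree table, and WINDOW_BITS and window_pow are hypothetical names.

```c
/* Window width in bits; the radix of the m-ary method is 1 << WINDOW_BITS.  */
#define WINDOW_BITS 3

static double window_pow(double x, unsigned long n)
{
    /* table[d] holds x**d for every possible window digit d.  */
    double table[1 << WINDOW_BITS];
    table[0] = 1.0;
    for (int i = 1; i < (1 << WINDOW_BITS); i++)
        table[i] = table[i - 1] * x;

    /* Count how many radix-8 digits the exponent has.  */
    int bits = 0;
    for (unsigned long t = n; t != 0; t >>= 1)
        bits++;
    int digits = (bits + WINDOW_BITS - 1) / WINDOW_BITS;

    /* Process digits from most to least significant: raise the running
       result to the radix, then multiply in the power for this digit.  */
    double result = 1.0;
    for (int d = digits - 1; d >= 0; d--) {
        for (int s = 0; s < WINDOW_BITS; s++)
            result *= result;
        result *= table[(n >> (d * WINDOW_BITS)) & ((1UL << WINDOW_BITS) - 1)];
    }
    return result;
}
```

For n = 10 (binary 1010, radix-8 digits 1 and 2) the loop forms x, raises it to x**8, and multiplies by x**2.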

Function Documentation

|

static |

Build a gimple binary operation with the given CODE and arguments ARG0, ARG1, assigning the result to a new SSA name for variable TARGET. Insert the statement prior to GSI's current position, and return the fresh SSA name.

|

static |

Build a gimple call statement that calls FN with argument ARG. Set the lhs of the call statement to a fresh SSA name. Insert the statement prior to GSI's current position, and return the fresh SSA name.

|

static |

Build a gimple assignment to cast VAL to TYPE. Insert the statement prior to GSI's current position, and return the fresh SSA name.

|

inline static |

Build a gimple reference operation with the given CODE and argument ARG, assigning the result to a new SSA name of TYPE with NAME. Insert the statement prior to GSI's current position, and return the fresh SSA name.

|

static |

Compute the number of divisions that postdominate each block in OCC and its children.

|

static |

Combine the multiplication at MUL_STMT with operands MULOP1 and MULOP2 with uses in additions and subtractions to form fused multiply-add operations. Returns true if successful and MUL_STMT should be removed.

We don't want to do bitfield reduction ops.

If the target doesn't support it, don't generate it. We assume that if fma isn't available then fms, fnma or fnms are not either.

If the multiplication has zero uses, it is probably kept around because of -fnon-call-exceptions. Don't optimize it away in that case; that is DCE's job.

Make sure that the multiplication statement becomes dead after the transformation, i.e. that all uses are transformed to FMAs. This means we assume that an FMA operation has the same cost as an addition.

For now restrict this operation to single basic blocks. In theory we would want to support sinking the multiplication in

    m = a*b;
    if ()
      ma = m + c;
    else
      d = m;

to form an fma in the then block and sink the multiplication to the else block.

A negate on the multiplication leads to FNMA.

Make sure the negate statement becomes dead with this single transformation.

Make sure the multiplication isn't also used on that stmt.

Re-validate.

FMA can only be formed from PLUS and MINUS.

If the subtrahend (gimple_assign_rhs2 (use_stmt)) is computed by a MULT_EXPR that we'll visit later, we might be able to get a more profitable match with fnma. OTOH, if we don't, a negate / fma pair likely has lower latency than a mult / subtract pair.

We can't handle a * b + a * b.

While it is possible to validate whether or not the exact form that we've recognized is available in the backend, the assumption is that the transformation is never a loss. For instance, suppose the target only has the plain FMA pattern available. Consider a*b-c -> fma(a,b,-c): we've exchanged MUL+SUB for FMA+NEG, which is still two operations. Consider -(a*b)-c -> fma(-a,b,-c): we still have 3 operations, but in the FMA form the two NEGs are independent and could be run in parallel.

a * b - c -> a * b + (-c)

a - b * c -> (-b) * c + a

|

static |

Process a single gimple statement STMT, which has a MULT_EXPR as its rhs, and try to convert it into a WIDEN_MULT_EXPR. The return value is true iff we converted the statement.

We can use a signed multiply with unsigned types as long as there is a wider mode to use, or it is the smaller of the two types that is unsigned. Note that type1 >= type2, always.

Ensure that the inputs to the handler are in the correct precision for the opcode. This will be the full mode size.

Handle constants.

References gimple_assign_rhs_code(), is_gimple_assign(), and SSA_NAME_DEF_STMT.
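For orientation, this is the kind of source-level multiplication that is a candidate for WIDEN_MULT_EXPR: both operands are extended from a narrower type immediately before a multiply in the wider type. The sketch below uses hypothetical function names and only shows the input pattern; whether the conversion actually happens depends on the target's widening multiply support.

```c
#include <stdint.h>

/* Both operands are sign-extended from 32 to 64 bits, so only the low
   32 bits of each 64-bit multiplicand carry information.  */
static int64_t mul_s32_to_s64(int32_t a, int32_t b)
{
    return (int64_t) a * (int64_t) b;
}

/* The unsigned counterpart: zero-extend, then multiply.  */
static uint64_t mul_u32_to_u64(uint32_t a, uint32_t b)
{
    return (uint64_t) a * (uint64_t) b;
}
```

A target with a 32x32->64 multiply instruction can implement either function without first materializing the 64-bit operands.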

|

static |

Process a single gimple statement STMT, which is found at the iterator GSI and has either a PLUS_EXPR or a MINUS_EXPR as its rhs (given by CODE), and try to convert it into a WIDEN_MULT_PLUS_EXPR or a WIDEN_MULT_MINUS_EXPR. The return value is true iff we converted the statement.

Allow for one conversion statement between the multiply and addition/subtraction statement. If there is more than one conversion then we assume they would invalidate this transformation. If that's not the case then they should have been folded before now.

If code is WIDEN_MULT_EXPR then it would seem unnecessary to call is_widening_mult_p, but we still need the rhs returns. It might also appear that it would be sufficient to use the existing operands of the widening multiply, but that would limit the choice of multiply-and-accumulate instructions.

If the widened-multiplication result has more than one use, it is probably wiser not to do the conversion.

There's no such thing as a mixed sign madd yet, so use a wider mode.

We can use a signed multiply with unsigned types as long as there is a wider mode to use, or it is the smaller of the two types that is unsigned. Note that type1 >= type2, always.

If there was a conversion between the multiply and addition then we need to make sure it fits a multiply-and-accumulate. There should be a single mode change which does not change the value.

We use the original, unmodified data types for this.

Conversion is a truncate.

Conversion is an extend. Check it's the right sort.

else convert is a no-op for our purposes.

Verify that the machine can perform a widening multiply accumulate in this mode/signedness combination, otherwise this transformation is likely to pessimize code.

Ensure that the inputs to the handler are in the correct precision for the opcode. This will be the full mode size.

Handle constants.

References gimple_assign_lhs(), gimple_assign_rhs1(), TREE_TYPE, TYPE_PRECISION, and TYPE_UNSIGNED.
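The multiply-and-accumulate shape that this function looks for can be written at the source level as a widening multiply whose result feeds an addition or subtraction. The following is only a sketch of the input pattern, with hypothetical names, under the assumption of a 32-bit by 32-bit multiply accumulated into 64 bits.

```c
#include <stddef.h>
#include <stdint.h>

/* acc + (wide) a * (wide) b: the WIDEN_MULT_PLUS_EXPR shape.  */
static int64_t dot_s32(const int32_t *a, const int32_t *b, size_t n)
{
    int64_t acc = 0;
    for (size_t i = 0; i < n; i++)
        acc += (int64_t) a[i] * (int64_t) b[i];
    return acc;
}

/* acc - (wide) a * (wide) b: the WIDEN_MULT_MINUS_EXPR shape.  */
static int64_t msub_s32(int64_t acc, int32_t a, int32_t b)
{
    return acc - (int64_t) a * (int64_t) b;
}
```

Each product here has exactly one use, the accumulation, which matches the single-use preference described above.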

|

inline static |

Perform a SHIFT or ROTATE operation by COUNT bits on symbolic number N. Return false if the requested operation is not permitted on a symbolic number.

Zero out the extra bits of N in order to avoid them being shifted into the significant bits.

Zero unused bits for size.

|

static |

Go through all the floating-point SSA_NAMEs, and call execute_cse_reciprocals_1 on each of them.

Scan for a/func(b) and convert it to reciprocal a*rfunc(b).

Check that all uses of the SSA name are divisions, otherwise replacing the defining statement will do the wrong thing.

|

static |

Look for floating-point divisions among DEF's uses, and try to replace them by multiplications with the reciprocal. Add as many statements computing the reciprocal as needed.

DEF must be a GIMPLE register of a floating-point type.

Do the expensive part only if we can hope to optimize something.
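The effect of the transformation can be pictured at the source level. The sketch below (hypothetical function names) shows three divisions by the same value and the form with a single reciprocal followed by multiplications. The two forms can round differently in general, which is why a compiler can only do this when the user has allowed reciprocal math.

```c
/* Before: three floating-point divisions by the same value d.  */
static void scale_by_division(double v[3], double d)
{
    v[0] = v[0] / d;
    v[1] = v[1] / d;
    v[2] = v[2] / d;
}

/* After: one division computing the reciprocal, then three multiplies.  */
static void scale_by_reciprocal(double v[3], double d)
{
    double recip = 1.0 / d;
    v[0] = v[0] * recip;
    v[1] = v[1] * recip;
    v[2] = v[2] * recip;
}
```

With a divisor that is a power of two, such as 4.0, both versions produce identical results; for other divisors the last bit may differ.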

|

static |

Go through all calls to sin, cos and cexpi and call execute_cse_sincos_1 on the SSA_NAME argument of each of them. Also expand powi(x,n) into an optimal number of multiplies, when n is a constant.

Only the last stmt in a bb could throw, no need to call gimple_purge_dead_eh_edges if we change something in the middle of a basic block.

Make sure we have either sincos or cexp.

|

static |

Look for sin, cos and cexpi calls with the same argument NAME and create a single call to cexpi CSEing the result in this case. We first walk over all immediate uses of the argument collecting statements that we can CSE in a vector and in a second pass replace the statement rhs with a REALPART or IMAGPART expression on the result of the cexpi call we insert before the use statement that dominates all other candidates.

Simply insert cexpi at the beginning of top_bb but not earlier than the name def statement.

And adjust the recorded old call sites.

Replace call with a copy.

|

static |

Find manual byte swap implementations and turn them into a bswap builtin invocation.

Determine the argument type of the builtins. The code later on assumes that the return and argument type are the same.

We do a reverse scan for bswap patterns to make sure we get the widest match. As bswap pattern matching doesn't handle previously inserted smaller bswap replacements as sub-patterns, the wider variant wouldn't be detected.

Convert the src expression if necessary.

Convert the result if necessary.

|

static |

Find integer multiplications where the operands are extended from smaller types, and replace the MULT_EXPR with a WIDEN_MULT_EXPR where appropriate.

|

static |

Check if STMT completes a bswap implementation consisting of ORs, SHIFTs and ANDs. Return the source tree expression on which the byte swap is performed and NULL if no bswap was found.

The number which the find_bswap result should match in order to have a full byte swap. The number is shifted to the left according to the size of the symbolic number before using it.

The last parameter determines the depth search limit. It usually correlates directly to the number of bytes to be touched. We increase that number by three here in order to also cover signed -> unsigned conversions of the src operand as can be seen in libgcc, and for initial shift/and operation of the src operand.

Zero out the extra bits of N and CMP.

A complete byte swap should make the symbolic number start with the largest digit in the highest order byte.

References builtin_decl_explicit().
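The symbolic-number idea can be demonstrated outside the compiler. In the sketch below, a 4-byte source is represented by a number whose bytes are the markers 1 to 4, lowest byte first. Applying the same shifts, masks and ORs as a hand-written byte swap to that number yields the markers in reversed order, which is the pattern a full byte swap must match. All names are hypothetical, and the real pass works on GIMPLE statements rather than by calling the function under test.

```c
#include <stdint.h>

/* A byte swap as a programmer might write it by hand.  */
static uint32_t manual_bswap32(uint32_t x)
{
    return ((x >> 24) & 0x000000ffu)
         | ((x >>  8) & 0x0000ff00u)
         | ((x <<  8) & 0x00ff0000u)
         |  (x << 24);
}

/* Symbolic number for a 4-byte source: the lowest order byte is marked 1
   and the highest order byte is marked 4 (the size).  */
static uint32_t initial_symbolic_number(void)
{
    return 0x04030201u;
}

/* What the symbolic number must look like after a complete byte swap:
   every marker has moved to the mirrored byte position.  */
static uint32_t expected_bswap_pattern(void)
{
    return 0x01020304u;
}
```

Feeding initial_symbolic_number() through manual_bswap32() gives expected_bswap_pattern(), whereas an expression that leaves the bytes in place, or drops one of them, does not.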

|

static |

find_bswap_1 invokes itself recursively with N and tries to perform the operation given by the rhs of STMT on the result. If the operation could successfully be executed the function returns the tree expression of the source operand and NULL otherwise.

Handle unary rhs and binary rhs with integer constants as second operand.

If find_bswap_1 returned NULL, STMT is a leaf node and we have to initialize the symbolic number.

Set up the symbolic number N by setting each byte to a value between 1 and the byte size of rhs1. The highest order byte is set to n->size and the lowest order byte to 1.

Only constants masking full bytes are allowed.

If STMT casts to a smaller type, mask out the bits not belonging to the target type.

Handle binary rhs.

References symbolic_number::n, NULL_TREE, symbolic_number::size, SSA_NAME_DEF_STMT, TREE_CODE, and verify_symbolic_number_p().

|

static read |

Free OCC and return one more "struct occurrence" to be freed.

First get the two pointers hanging off OCC.

Now ensure that we don't recurse unless it is necessary.

References register_division_in().

|

static |

|

static |

We no longer require either sincos or cexp, since powi expansion piggybacks on this pass.

References BITS_PER_UNIT, gimple_expr_type(), symbolic_number::size, TREE_CODE, and TYPE_PRECISION.

|

static |

|

static |

|

static |

ARG is the argument to a cabs builtin call in GSI with location info LOC. Create a sequence of statements prior to GSI that calculates sqrt(R*R + I*I), where R and I are the real and imaginary components of ARG, respectively. Return an expression holding the result.

|

static |

ARG0 and ARG1 are the two arguments to a pow builtin call in GSI with location info LOC. If possible, create an equivalent and less expensive sequence of statements prior to GSI, and return an expression holding the result.

If the exponent isn't a constant, there's nothing of interest to be done.

If the exponent is equivalent to an integer, expand to an optimal multiplication sequence when profitable.

Attempt various optimizations using sqrt and cbrt.

Optimize pow(x,0.5) = sqrt(x). This replacement is always safe unless signed zeros must be maintained. pow(-0,0.5) = +0, while sqrt(-0) = -0.

Optimize pow(x,0.25) = sqrt(sqrt(x)). Assume on most machines that a builtin sqrt instruction is smaller than a call to pow with 0.25, so do this optimization even if -Os. Don't do this optimization if we don't have a hardware sqrt insn.

sqrt(x)

sqrt(sqrt(x))

Optimize pow(x,0.75) = sqrt(x) * sqrt(sqrt(x)) unless we are optimizing for space. Don't do this optimization if we don't have a hardware sqrt insn.

sqrt(x)

sqrt(sqrt(x))

sqrt(x) * sqrt(sqrt(x))

Optimize pow(x,1./3.) = cbrt(x). This requires unsafe math optimizations since 1./3. is not exactly representable. If x is negative and finite, the correct value of pow(x,1./3.) is a NaN with the "invalid" exception raised, because the value of 1./3. actually has an even denominator. The correct value of cbrt(x) is a negative real value.

Optimize pow(x,1./6.) = cbrt(sqrt(x)). Don't do this optimization if we don't have a hardware sqrt insn.

sqrt(x)

cbrt(sqrt(x))

Optimize pow(x,c), where n = 2c for some nonzero integer n and c not an integer, into sqrt(x) * powi(x, n/2), n > 0; 1.0 / (sqrt(x) * powi(x, abs(n/2))), n < 0. Do not calculate the powi factor when n/2 = 0.

Attempt to fold powi(arg0, abs(n/2)) into multiplies. If not possible or profitable, give up. Skip the degenerate case when n is 1 or -1, where the result is always 1.

Calculate sqrt(x). When n is not 1 or -1, multiply it by the result of the optimal multiply sequence just calculated.

If n is negative, reciprocate the result.

Optimize pow(x,c), where 3c = n for some nonzero integer n, into powi(x, n/3) * powi(cbrt(x), n%3), n > 0; 1.0 / (powi(x, abs(n)/3) * powi(cbrt(x), abs(n)%3)), n < 0. Do not calculate the first factor when n/3 = 0. As cbrt(x) is different from pow(x, 1./3.) due to rounding and behavior with negative x, we need to constrain this transformation to unsafe math and positive x or finite math.

Attempt to fold powi(arg0, abs(n/3)) into multiplies. If not possible or profitable, give up. Skip the degenerate case when abs(n) < 3, where the result is always 1.

Calculate powi(cbrt(x), n%3). Don't use gimple_expand_builtin_powi as that creates an unnecessary variable. Instead, just produce either cbrt(x) or cbrt(x) * cbrt(x).

Multiply the two subexpressions, unless powi(x,abs(n)/3) = 1.

If n is negative, reciprocate the result.

No optimizations succeeded.

|

static |

ARG0 and N are the two arguments to a powi builtin in GSI with location info LOC. If the arguments are appropriate, create an equivalent sequence of statements prior to GSI using an optimal number of multiplications, and return an expression holding the result.

Avoid largest negative number.

References gimple_build_assign_with_ops(), gimple_set_location(), gsi_insert_before(), GSI_SAME_STMT, make_ssa_name(), NULL, and NULL_TREE.

|

static |

Insert NEW_OCC into our subset of the dominator tree. P_HEAD points to a list of "struct occurrence"s, one per basic block, having IDOM as their common dominator.

We try to insert NEW_OCC as deep as possible in the tree, and we also insert any other block that is a common dominator for BB and one block already in the tree.

BB dominates OCC_BB. OCC becomes NEW_OCC's child: remove OCC from its list.

Try the next block (it may as well be dominated by BB).

OCC_BB dominates BB. Tail recurse to look deeper.

There is a dominator between IDOM and BB, add it and make two children out of NEW_OCC and OCC. First, remove OCC from its list.

None of the previous blocks has DOM as a dominator: if we tail recursed, we would reexamine them uselessly. Just switch BB with DOM, and go on looking for blocks dominated by DOM.

Nothing special, go on with the next element.

No place was found as a child of IDOM. Make BB a sibling of IDOM.

Referenced by occ_new().

|

static |

Walk the subset of the dominator tree rooted at OCC, setting the RECIP_DEF field to a definition of 1.0 / DEF that can be used in the given basic block. The field may be left NULL, of course, if it is not possible or profitable to do the optimization.

DEF_BSI is an iterator pointing at the statement defining DEF. If RECIP_DEF is set, a dominator already has a computation that can be used.

Make a variable with the replacement and substitute it.

Case 1: insert before an existing division.

Case 2: insert right after the definition. Note that this will never happen if the definition statement can throw, because in that case the sole successor of the statement's basic block will dominate all the uses as well.

Case 3: insert in a basic block not containing defs/uses.

References occurrence::bb, gsi_after_labels(), gsi_insert_before(), and GSI_SAME_STMT.

|

inline static |

Return whether USE_STMT is a floating-point division by DEF.

Do not recognize x / x as a valid division, because replacing all immediate uses of x in such a stmt would confuse us later.

|

static |

Return true if STMT performs a widening multiplication, assuming the output type is TYPE. If so, store the unwidened types of the operands in *TYPE1_OUT and *TYPE2_OUT respectively. Also fill *RHS1_OUT and *RHS2_OUT such that converting those operands to types *TYPE1_OUT and *TYPE2_OUT would give the operands of the multiplication.

Ensure that the larger of the two operands comes first.

|

static |

Return true if RHS is a suitable operand for a widening multiplication, assuming a target type of TYPE. There are two cases:

- RHS makes some value at least twice as wide. Store that value in *NEW_RHS_OUT if so, and store its type in *TYPE_OUT.

- RHS is an integer constant. Store that value in *NEW_RHS_OUT if so, but leave *TYPE_OUT untouched.

| gimple_opt_pass* make_pass_cse_reciprocals | ( | ) |

| gimple_opt_pass* make_pass_cse_sincos | ( | ) |

| gimple_opt_pass* make_pass_optimize_bswap | ( | ) |

References int_fits_type_p(), and NULL.

| gimple_opt_pass* make_pass_optimize_widening_mul | ( | ) |

|

static |

Records an occurrence at statement USE_STMT in the vector of trees STMTS if it is dominated by *TOP_BB or dominates it or this basic block is not yet initialized. Returns true if the occurrence was pushed on the vector. Adjusts *TOP_BB to be the basic block dominating all statements in the vector.

References CASE_FLT_FN.

|

static read |

Allocate and return a new struct occurrence for basic block BB, and whose children list is headed by CHILDREN.

References occurrence::bb, CDI_DOMINATORS, occurrence::children, insert_bb(), nearest_common_dominator(), occurrence::next, and NULL.

|

static |

Convert ARG0**N to a tree of multiplications of ARG0 with itself. This function needs to be kept in sync with powi_cost above.

If the original exponent was negative, reciprocate the result.
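The overall shape, a product of repeated multiplications followed by an optional reciprocal, can be sketched as run-time code. This version uses simple right-to-left binary exponentiation rather than the power-tree expansion, and it sidesteps the most negative exponent by taking the absolute value in unsigned arithmetic; both choices and the function name are assumptions of this sketch, not the behaviour of powi_as_mults.

```c
/* Compute x**n for an integer exponent using only multiplications,
   reciprocating at the end when the exponent is negative.  */
static double powi_sketch(double x, long n)
{
    /* Negating the most negative long would overflow, so form the
       absolute value in unsigned arithmetic instead.  */
    unsigned long m = n < 0 ? 0UL - (unsigned long) n : (unsigned long) n;

    double result = 1.0;
    double base = x;
    while (m != 0) {
        if (m & 1)
            result *= base;   /* this bit of the exponent contributes */
        base *= base;         /* x, x**2, x**4, ... */
        m >>= 1;
    }

    /* If the original exponent was negative, reciprocate the result.  */
    return n < 0 ? 1.0 / result : result;
}
```

For example, powi_sketch(2.0, -3) forms 8.0 with multiplications and then returns 1.0 / 8.0.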

|

static |

Recursive subroutine of powi_as_mults. This function takes the array, CACHE, of already calculated exponents and an exponent N and returns a tree that corresponds to CACHE[1]**N, with type TYPE.

Referenced by powi_lookup_cost().

|

static |

Return the number of multiplications required to calculate powi(x,n) for an arbitrary x, given the exponent N. This function needs to be kept in sync with powi_as_mults below.

Ignore the reciprocal when calculating the cost.

Initialize the exponent cache.

|

static |

Return the number of multiplications required to calculate powi(x,n) where n is less than POWI_TABLE_SIZE. This is a subroutine of powi_cost. CACHE is an array indicating which exponents have already been calculated.

If we've already calculated this exponent, then this evaluation doesn't require any additional multiplications.

References HOST_WIDE_INT, make_temp_ssa_name(), NULL, powi_as_mults_1(), powi_table, and POWI_TABLE_SIZE.
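The cache-based counting can be sketched with a small split table. In the sketch, sketch_table[i] = j means that x**i is formed as x**j * x**(i-j); the 16 entries below are illustrative values chosen for this example and should not be read as a copy of powi_table, which has POWI_TABLE_SIZE entries. The function names are hypothetical as well.

```c
#include <stdbool.h>
#include <string.h>

#define SKETCH_TABLE_SIZE 16

/* Illustrative split table: x**i is computed as x**j * x**(i-j)
   where j = sketch_table[i].  */
static const unsigned char sketch_table[SKETCH_TABLE_SIZE] = {
    0, 1, 1, 2, 2, 3, 3, 4, 4, 6, 5, 6, 6, 10, 7, 9
};

/* Multiplications needed for x**n given which powers are already
   available in CACHE.  A power that is already cached costs nothing.  */
static int lookup_cost(unsigned n, bool *cache)
{
    if (cache[n])
        return 0;
    cache[n] = true;
    int left = lookup_cost(n - sketch_table[n], cache);
    int right = lookup_cost(sketch_table[n], cache);
    return left + right + 1;
}

/* Cost of x**n for n below SKETCH_TABLE_SIZE, starting from x alone.  */
static int small_powi_cost(unsigned n)
{
    bool cache[SKETCH_TABLE_SIZE];
    if (n == 0)
        return 0;
    memset(cache, 0, sizeof cache);
    cache[1] = true;          /* x itself is free */
    return lookup_cost(n, cache);
}
```

With this table, x**15 costs 5 multiplications (x**2, x**3, x**6, x**9, x**15), one fewer than the 6 that plain square-and-multiply needs.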

|

inline static |

Register that we found a division in BB.

Referenced by free_bb().

|

inline static |

Replace the division at USE_P with a multiplication by the reciprocal, if possible.

|

inline static |

Perform sanity checking for the symbolic number N and the gimple statement STMT.

Referenced by find_bswap_1().

|

static |

Return true if stmt is a type conversion operation that can be stripped when used in a widening multiply operation.

If the type of OP has the same precision as the result, then we can strip this conversion. The multiply operation will be selected to create the correct extension as a by-product.

We can also strip a conversion if it preserves the signed-ness of the operation and doesn't narrow the range.

If the inner-most type is unsigned, then we can strip any intermediate widening operation. If it's signed, then the intermediate widening operation must also be signed.

References int_fits_type_p().

Variable Documentation

| struct { ... } bswap_stats |

| int fmas_inserted |

Number of fp fused multiply-add ops inserted.

| int found_16bit |

Number of hand-written 16-bit bswaps found.

| int found_32bit |

Number of hand-written 32-bit bswaps found.

| int found_64bit |

Number of hand-written 64-bit bswaps found.

| int inserted |

Number of cexpi calls inserted.

Referenced by copy_edges_for_bb().

| int maccs_inserted |

Number of integer multiply-and-accumulate ops inserted.

|

static |

The instance of "struct occurrence" representing the highest interesting block in the dominator tree.

|

static |

Allocation pool for getting instances of "struct occurrence".

|

static |

The following table is an efficient representation of an "optimal power tree". For each value, i, the corresponding value, j, in the table states that an optimal evaluation sequence for calculating pow(x,i) can be found by evaluating pow(x,j)*pow(x,i-j). An optimal power tree for the first 100 integers is given in Knuth's "Seminumerical algorithms".

Referenced by powi_lookup_cost().

| int rdivs_inserted |

Number of 1.0/X ops inserted.

| struct { ... } reciprocal_stats |

| int rfuncs_inserted |

Number of 1.0/FUNC ops inserted.

| struct { ... } sincos_stats |

| struct { ... } widen_mul_stats |

| int widen_mults_inserted |

Number of widening multiplication ops inserted.