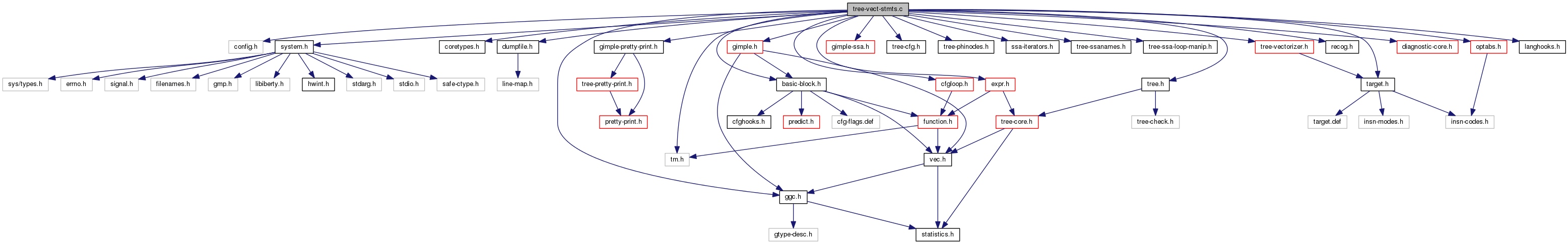

#include "config.h"
#include "system.h"
#include "coretypes.h"
#include "dumpfile.h"
#include "tm.h"
#include "ggc.h"
#include "tree.h"
#include "target.h"
#include "basic-block.h"
#include "gimple-pretty-print.h"
#include "gimple.h"
#include "gimple-ssa.h"
#include "tree-cfg.h"
#include "tree-phinodes.h"
#include "ssa-iterators.h"
#include "tree-ssanames.h"
#include "tree-ssa-loop-manip.h"
#include "cfgloop.h"
#include "expr.h"
#include "recog.h"
#include "optabs.h"
#include "diagnostic-core.h"
#include "tree-vectorizer.h"
#include "langhooks.h"

Variables

| unsigned int | current_vector_size |

Function Documentation

|

static |

PTR is a pointer to an array of type TYPE. Return a representation of *PTR. The memory reference replaces those in FIRST_DR (and its group).

Arrays have the same alignment as their type.

|

static |

Return a variable of type ELEM_TYPE[NELEMS].

References gcc_assert, TREE_CODE, and TREE_TYPE.

|

static |

A helper function to ensure data reference DR's base alignment for STMT_INFO.

|

static |

Function exist_non_indexing_operands_for_use_p

USE is one of the uses attached to STMT. Check if USE is used in STMT for anything other than indexing an array.

USE corresponds to some operand in STMT. If there is no data reference in STMT, then any operand that corresponds to USE is not indexing an array.

STMT has a data_ref. FORNOW this means that it is of one of the following forms:

- (1) ARRAY_REF = var

- (2) var = ARRAY_REF

(This should have been verified in analyze_data_refs.) 'var' in the second case corresponds to a def, not a use, so USE cannot correspond to any operands that are not used for array indexing. Therefore, all we need to check is whether STMT falls into the first case, and whether var corresponds to USE.
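The decision described above can be sketched on a toy statement model. This is only an illustration: `ToyStmt` and `use_has_non_indexing_operand` are hypothetical names and do not correspond to GCC's `gimple` types or to the real function signature.

```cpp
#include <cassert>
#include <string>

// Hypothetical, simplified statement model (not GCC's gimple representation).
struct ToyStmt {
  bool has_data_ref;    // true if the stmt loads from or stores to an array
  bool is_store;        // true for form 1 (ARRAY_REF = var), false for form 2
  std::string rhs_var;  // the 'var' being stored in form 1
};

// Returns true if USE is used in STMT for something other than array indexing.
bool use_has_non_indexing_operand(const ToyStmt &stmt, const std::string &use) {
  if (!stmt.has_data_ref)
    return true;               // no array access, so USE cannot be an index
  if (!stmt.is_store)
    return false;              // var = ARRAY_REF: var is a def, not a use
  return stmt.rhs_var == use;  // ARRAY_REF = var: only var itself counts
}
```

For a store `a[i] = x`, the sketch reports `x` as a non-indexing use and `i` as indexing only.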

| void free_stmt_vec_info | ( | ) |

Free stmt vectorization related info.

Check if this statement has a related "pattern stmt" (introduced by the vectorizer during the pattern recognition pass). Free pattern's stmt_vec_info and def stmt's stmt_vec_info too.

| void free_stmt_vec_info_vec | ( | void | ) |

Free hash table for stmt_vec_info.

| tree get_same_sized_vectype | ( | ) |

Function get_same_sized_vectype

Returns a vector type corresponding to SCALAR_TYPE with the same size as VECTOR_TYPE, if supported by the target.

| tree get_vectype_for_scalar_type | ( | ) |

Function get_vectype_for_scalar_type.

Returns the vector type corresponding to SCALAR_TYPE as supported by the target.

Referenced by get_initial_def_for_reduction(), vect_build_slp_tree_1(), and vect_get_vec_def_for_operand().

|

static |

Function get_vectype_for_scalar_type_and_size.

Returns the vector type corresponding to SCALAR_TYPE and SIZE as supported by the target.

For vector types of elements whose mode precision doesn't match their type's precision we use an element type of mode precision. The vectorization routines will have to make sure they support the proper result truncation/extension. We also make sure to build vector types with INTEGER_TYPE component type only.

We shouldn't end up building VECTOR_TYPEs of non-scalar components. When the component mode passes the above test simply use a type corresponding to that mode. The theory is that any use that would cause problems with this will disable vectorization anyway.

We can't build a vector type of elements with alignment bigger than their size.

If we fell back to using the mode, fail if there was no scalar type for it.

If no size was supplied use the mode the target prefers. Otherwise lookup a vector mode of the specified size.
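As a rough illustration of the size bookkeeping involved, the sketch below derives a lane count from a vector size and an element size. The helper name `lanes_for` and its rejection rules (non-divisible sizes, fewer than two lanes) are assumptions made for this example; the real function works on target machine modes rather than raw byte counts.

```cpp
#include <cassert>

// Hypothetical helper: number of elements of elem_bytes that fit in a vector
// of vector_bytes, or 0 if no sensible vector type results.
unsigned lanes_for(unsigned vector_bytes, unsigned elem_bytes) {
  if (elem_bytes == 0 || vector_bytes % elem_bytes != 0)
    return 0;                   // assumed: sizes must divide evenly
  unsigned nunits = vector_bytes / elem_bytes;
  return nunits > 1 ? nunits : 0;  // a one-element "vector" is not useful
}
```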

| void init_stmt_vec_info_vec | ( | void | ) |

Create a hash table for stmt_vec_info.

| stmt_vec_info new_stmt_vec_info | ( | gimple stmt, loop_vec_info loop_vinfo, bb_vec_info bb_vinfo | ) |

Function new_stmt_vec_info.

Create and initialize a new stmt_vec_info struct for STMT.

Referenced by vect_create_epilog_for_reduction(), and vect_make_slp_decision().

|

static |

Given a vector type VECTYPE returns the VECTOR_CST mask that implements reversal of the vector elements. If that is impossible to do, returns NULL.

References dump_enabled_p(), dump_printf_loc(), MSG_MISSED_OPTIMIZATION, and vect_location.
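A reversal mask selects element `nunits - 1 - i` for output position `i`. A minimal sketch of the selector follows; `reverse_selector` is a hypothetical name, and the step that checks whether the target can actually perform the permutation is omitted.

```cpp
#include <cassert>
#include <vector>

// Build the selector that reverses a vector of nunits elements.
std::vector<unsigned> reverse_selector(unsigned nunits) {
  std::vector<unsigned> sel(nunits);
  for (unsigned i = 0; i < nunits; ++i)
    sel[i] = nunits - 1 - i;  // output lane i reads input lane nunits-1-i
  return sel;
}
```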

|

static |

Given vector variables X and Y that were generated for the scalar STMT, generate instructions to permute the vector elements of X and Y using permutation mask MASK_VEC, insert them at *GSI and return the permuted vector variable.

Generate the permute statement.
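The effect of a two-input permute can be modelled on plain arrays: each mask entry indexes into the concatenation of X and Y. The sketch below assumes the usual convention that indices are taken modulo twice the vector length; `permute` is a hypothetical helper, not a GCC function.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Model of a two-input permute: mask[i] in [0, n) picks from x,
// mask[i] in [n, 2n) picks from y.
std::vector<int> permute(const std::vector<int> &x, const std::vector<int> &y,
                         const std::vector<unsigned> &mask) {
  const std::size_t n = x.size();
  assert(y.size() == n && mask.size() == n);
  std::vector<int> out(n);
  for (std::size_t i = 0; i < n; ++i) {
    std::size_t idx = mask[i] % (2 * n);
    out[i] = idx < n ? x[idx] : y[idx - n];
  }
  return out;
}
```

With the mask `{0, 4, 1, 5}` this interleaves the low halves of X and Y.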

|

static |

case 1: we are only interested in uses that need to be vectorized. Uses that are used for address computation are not considered relevant.

case 2: A reduction phi (STMT) defined by a reduction stmt (DEF_STMT). DEF_STMT must have already been processed, because this should be the only way that STMT, which is a reduction-phi, was put in the worklist, as there should be no other uses for DEF_STMT in the loop. So we just check that everything is as expected, and we are done.

case 3a: outer-loop stmt defining an inner-loop stmt:

    outer-loop-header-bb:
        d = def_stmt
    inner-loop:
        stmt # use (d)
    outer-loop-tail-bb:
        ...

case 3b: inner-loop stmt defining an outer-loop stmt:

    outer-loop-header-bb:
        ...
    inner-loop:
        d = def_stmt
    outer-loop-tail-bb (or outer-loop-exit-bb in double reduction):
        stmt # use (d)

|

static |

ARRAY is an array of vectors created by create_vector_array. Return an SSA_NAME for the vector in index N. The reference is part of the vectorization of STMT and the vector is associated with scalar destination SCALAR_DEST.

| unsigned record_stmt_cost | ( | stmt_vector_for_cost * body_cost_vec, int count, enum vect_cost_for_stmt kind, stmt_vec_info stmt_info, int misalign, enum vect_cost_model_location where | ) |

Record the cost of a statement, either by directly informing the target model or by saving it in a vector for later processing. Return a preliminary estimate of the statement's cost.

References add_stmt_info_to_vec(), builtin_vectorization_cost(), count, NULL, NULL_TREE, stmt_vectype(), and STMT_VINFO_STMT.

Referenced by vect_get_store_cost(), and vect_model_store_cost().

| bool stmt_in_inner_loop_p | ( | ) |

Return TRUE iff the given statement is in an inner loop relative to the loop being vectorized.

| tree stmt_vectype | ( | ) |

Statement Analysis and Transformation for Vectorization

Copyright (C) 2003-2013 Free Software Foundation, Inc. Contributed by Dorit Naishlos dorit@il.ibm.com and Ira Rosen irar@il.ibm.com

This file is part of GCC.

GCC is free software; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 3, or (at your option) any later version.

GCC is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You should have received a copy of the GNU General Public License along with GCC; see the file COPYING3. If not see http://www.gnu.org/licenses/.

For lang_hooks.types.type_for_mode.

Return the vectorized type for the given statement.

| bool supportable_narrowing_operation | ( | enum tree_code code, tree vectype_out, tree vectype_in, enum tree_code * code1, int * multi_step_cvt, vec< tree > * interm_types | ) |

Function supportable_narrowing_operation

Check whether an operation represented by the code CODE is a narrowing operation that is supported by the target platform in vector form (i.e., when operating on arguments of type VECTYPE_IN and producing a result of type VECTYPE_OUT).

Narrowing operations we currently support are NOP (CONVERT) and FIX_TRUNC. This function checks if these operations are supported by the target platform directly via vector tree-codes.

Output:

- CODE1 is the code of a vector operation to be used when vectorizing the operation, if available.

- MULTI_STEP_CVT determines the number of required intermediate steps in case of multi-step conversion (like int->short->char - in that case MULTI_STEP_CVT will be 1).

- INTERM_TYPES contains the intermediate type required to perform the narrowing operation (short in the above example).

??? Not yet implemented due to missing VEC_PACK_FLOAT_EXPR tree code and optabs used for computing the operation.

The signedness is determined from output operand.

Check if it's a multi-step conversion that can be done using intermediate types.

For multi-step FIX_TRUNC_EXPR prefer signed floating to integer conversion over unsigned, as unsigned FIX_TRUNC_EXPR is often more costly than signed.

We assume here that there will not be more than MAX_INTERM_CVT_STEPS intermediate steps in promotion sequence. We try MAX_INTERM_CVT_STEPS to get to NARROW_VECTYPE, and fail if we do not.
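The relationship between the type chain and MULTI_STEP_CVT can be sketched numerically. The helper below assumes that every narrowing step halves the element width, which is a simplification for illustration; the real function asks the target whether each intermediate mode is supported. `narrowing_intermediate_steps` and its `max_steps` parameter are hypothetical.

```cpp
#include <cassert>

// Returns the number of intermediate types needed to narrow from in_bits to
// out_bits, or -1 if the target width cannot be reached within max_steps
// intermediate steps. Assumes each step halves the element width.
int narrowing_intermediate_steps(unsigned in_bits, unsigned out_bits,
                                 int max_steps) {
  if (out_bits == 0 || in_bits <= out_bits)
    return -1;                 // not a narrowing conversion
  int steps = 0;
  unsigned bits = in_bits / 2; // the first pack already halves the width
  while (bits > out_bits) {
    if (steps == max_steps)
      return -1;               // too many intermediate types required
    bits /= 2;
    ++steps;
  }
  return bits == out_bits ? steps : -1;
}
```

For int->short->char (32 -> 16 -> 8 bits) the sketch returns 1, matching the MULTI_STEP_CVT value given in the description.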

| bool supportable_widening_operation | ( | enum tree_code code, gimple stmt, tree vectype_out, tree vectype_in, enum tree_code * code1, enum tree_code * code2, int * multi_step_cvt, vec< tree > * interm_types | ) |

Function supportable_widening_operation

Check whether an operation represented by the code CODE is a widening operation that is supported by the target platform in vector form (i.e., when operating on arguments of type VECTYPE_IN producing a result of type VECTYPE_OUT).

Widening operations we currently support are NOP (CONVERT), FLOAT and WIDEN_MULT. This function checks if these operations are supported by the target platform either directly (via vector tree-codes), or via target builtins.

Output:

- CODE1 and CODE2 are codes of vector operations to be used when vectorizing the operation, if available.

- MULTI_STEP_CVT determines the number of required intermediate steps in case of multi-step conversion (like char->short->int - in that case MULTI_STEP_CVT will be 1).

- INTERM_TYPES contains the intermediate type required to perform the widening operation (short in the above example).

The result of a vectorized widening operation usually requires two vectors (because the widened results do not fit into one vector). The generated vector results would normally be expected to be generated in the same order as in the original scalar computation, i.e. if 8 results are generated in each vector iteration, they are to be organized as follows:

    vect1: [res1,res2,res3,res4],
    vect2: [res5,res6,res7,res8].

However, in the special case that the result of the widening operation is used in a reduction computation only, the order doesn't matter (because when vectorizing a reduction we change the order of the computation). Some targets can take advantage of this and generate more efficient code. For example, targets like Altivec, that support widen_mult using a sequence of {mult_even,mult_odd} generate the following vectors:

    vect1: [res1,res3,res5,res7],
    vect2: [res2,res4,res6,res8].

When vectorizing outer-loops, we execute the inner-loop sequentially (each vectorized inner-loop iteration contributes to VF outer-loop iterations in parallel). We therefore don't allow to change the order of the computation in the inner-loop during outer-loop vectorization.

TODO: Another case in which order doesn't *really* matter is when we widen and then contract again, e.g. (short)((int)x * y >> 8). Normally, pack_trunc performs an even/odd permute, whereas the repack from an even/odd expansion would be an interleave, which would be significantly simpler for e.g. AVX2.

In any case, in order to avoid duplicating the code below, recurse on VEC_WIDEN_MULT_EVEN_EXPR. If it succeeds, all the return values are properly set up for the caller. If we fail, we'll continue with a VEC_WIDEN_MULT_LO/HI_EXPR check.

Support the recursion induced just above.

??? Not yet implemented due to missing VEC_UNPACK_FIX_TRUNC_HI_EXPR/VEC_UNPACK_FIX_TRUNC_LO_EXPR tree codes and optabs used for computing the operation.

The signedness is determined from output operand.

Check if it's a multi-step conversion that can be done using intermediate types.

We assume here that there will not be more than MAX_INTERM_CVT_STEPS intermediate steps in promotion sequence. We try MAX_INTERM_CVT_STEPS to get to NARROW_VECTYPE, and fail if we do not.
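The two result layouts discussed for widening multiplication can be compared on plain arrays. The sketch below is a scalar model only: the function names are hypothetical, and the "first half / second half" split stands in for the in-order layout without making any claim about endianness. It shows that both layouts produce the same reduction sum, which is why the order is irrelevant when the result feeds only a reduction.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <utility>
#include <vector>

typedef std::vector<int16_t> NarrowVec;
typedef std::vector<int32_t> WideVec;

// In-order layout: vect1 holds res1..res4, vect2 holds res5..res8.
std::pair<WideVec, WideVec> widen_mult_in_order(const NarrowVec &a,
                                                const NarrowVec &b) {
  assert(a.size() == b.size() && a.size() % 2 == 0);
  const std::size_t half = a.size() / 2;
  WideVec first, second;
  for (std::size_t i = 0; i < half; ++i) {
    first.push_back(int32_t(a[i]) * b[i]);
    second.push_back(int32_t(a[i + half]) * b[i + half]);
  }
  return std::make_pair(first, second);
}

// Even/odd layout: vect1 holds res1,res3,..., vect2 holds res2,res4,...
std::pair<WideVec, WideVec> widen_mult_even_odd(const NarrowVec &a,
                                                const NarrowVec &b) {
  assert(a.size() == b.size() && a.size() % 2 == 0);
  WideVec even, odd;
  for (std::size_t i = 0; i + 1 < a.size(); i += 2) {
    even.push_back(int32_t(a[i]) * b[i]);
    odd.push_back(int32_t(a[i + 1]) * b[i + 1]);
  }
  return std::make_pair(even, odd);
}

// Sum of all lanes of both result vectors, as a reduction would compute.
int64_t reduce_sum(const std::pair<WideVec, WideVec> &p) {
  int64_t s = std::accumulate(p.first.begin(), p.first.end(), int64_t(0));
  return std::accumulate(p.second.begin(), p.second.end(), s);
}
```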

References insn_data, insn_data_d::operand, optab_default, optab_for_tree_code(), optab_handler(), lang_hooks_for_types::type_for_mode, TYPE_MODE, and lang_hooks::types.

| bool vect_analyze_stmt | ( | ) |

Make sure the statement is vectorizable.

Skip stmts that do not need to be vectorized. In loops this is expected to include:

- the COND_EXPR which is the loop exit condition

- any LABEL_EXPRs in the loop

- computations that are used only for array indexing or loop control.

In basic blocks we only analyze statements that are a part of some SLP instance, therefore, all the statements are relevant.

Pattern statement needs to be analyzed instead of the original statement if the original statement is not relevant. Otherwise, we analyze both statements. In basic blocks we are called from some SLP instance traversal, don't analyze pattern stmts instead, the pattern stmts already will be part of SLP instance.

Analyze PATTERN_STMT instead of the original stmt.

Analyze PATTERN_STMT too.

Analyze def stmt of STMT if it's a pattern stmt.

Stmts that are (also) "live" (i.e. - that are used out of the loop) need extra handling, except for vectorizable reductions.

References assignment_vec_info_type, call_vec_info_type, condition_vec_info_type, dump_enabled_p(), dump_printf_loc(), gcc_assert, gcc_unreachable, gsi_stmt(), induc_vec_info_type, load_vec_info_type, MSG_MISSED_OPTIMIZATION, NULL, op_vec_info_type, reduc_vec_info_type, shift_vec_info_type, STMT_VINFO_GROUPED_ACCESS, STMT_VINFO_LIVE_P, STMT_VINFO_VEC_STMT, store_vec_info_type, type_conversion_vec_info_type, type_demotion_vec_info_type, type_promotion_vec_info_type, vect_location, vectorizable_assignment(), vectorizable_call(), vectorizable_condition(), vectorizable_conversion(), vectorizable_induction(), vectorizable_load(), vectorizable_operation(), vectorizable_reduction(), vectorizable_shift(), and vectorizable_store().

|

static |

Function vect_cost_group_size

For grouped load or store, return the group_size only if it is the first load or store of a group, else return 1. This ensures that group size is only returned once per group.

Referenced by vect_get_store_cost().
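The rule can be sketched with a toy record in which every member of a group points at the group's first statement. `ToyStmtInfo` and `cost_group_size` are hypothetical names used only for this illustration.

```cpp
#include <cassert>

// Hypothetical stand-in for the per-statement vectorizer info.
struct ToyStmtInfo {
  const ToyStmtInfo *group_first;  // first stmt of the group (nullptr if none)
  int group_size;                  // number of accesses in the group
};

// Return the group size only for the first access of a group, else 1, so
// that summing over all members counts the group overhead exactly once.
int cost_group_size(const ToyStmtInfo &info) {
  if (info.group_first == &info)
    return info.group_size;
  return 1;
}
```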

|

static |

Create vectorized demotion statements for vector operands from VEC_OPRNDS. For multi-step conversions store the resulting vectors and call the function recursively.

Create demotion operation.

Store the resulting vector for next recursive call.

This is the last step of the conversion sequence. Store the vectors in SLP_NODE or in vector info of the scalar statement (or in STMT_VINFO_RELATED_STMT chain).

For multi-step demotion operations we first generate demotion operations from the source type to the intermediate types, and then combine the results (stored in VEC_OPRNDS) in demotion operation to the destination type.

At each level of recursion we have half of the operands we had at the previous level.

References CONVERT_EXPR_CODE_P, gimple_assign_lhs(), gimple_assign_rhs1(), gimple_assign_rhs_code(), is_gimple_assign(), NULL, NULL_TREE, STMT_VINFO_BB_VINFO, STMT_VINFO_DEF_TYPE, STMT_VINFO_LOOP_VINFO, STMT_VINFO_RELEVANT_P, STMT_VINFO_VECTYPE, TREE_CODE, TREE_CODE_LENGTH, TREE_TYPE, vect_internal_def, vect_unknown_def_type, vinfo_for_stmt(), and vNULL.
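The halving of operands at each recursion level can be modelled structurally. In the sketch below each demotion step packs two input vectors into one output vector; truncation of the element values is deliberately left out, and `demote` is a hypothetical helper rather than the real routine.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

typedef std::vector<int> Vec;

// Pack adjacent pairs of operand vectors, recursing once per remaining level.
// Each level therefore leaves half as many operands as the previous one.
std::vector<Vec> demote(const std::vector<Vec> &oprnds, int levels) {
  if (levels == 0)
    return oprnds;                       // last step: these are the results
  assert(oprnds.size() % 2 == 0);
  std::vector<Vec> packed;
  for (std::size_t i = 0; i < oprnds.size(); i += 2) {
    Vec v(oprnds[i]);                    // first input of the pack
    v.insert(v.end(), oprnds[i + 1].begin(), oprnds[i + 1].end());
    packed.push_back(v);                 // store for the next recursive call
  }
  return demote(packed, levels - 1);
}
```

Starting from four operand vectors, a two-step demotion leaves two vectors after the first level and one after the second.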

|

static |

Create vectorized promotion statements for vector operands from VEC_OPRNDS0 and VEC_OPRNDS1 (for binary operations). For multi-step conversions store the resulting vectors and call the function recursively.

Generate the two halves of promotion operation.

Store the results for the next step.

References dump_enabled_p(), dump_printf_loc(), MSG_MISSED_OPTIMIZATION, and vect_location.

| void vect_finish_stmt_generation | ( | gimple stmt, gimple vec_stmt, gimple_stmt_iterator * gsi | ) |

Function vect_finish_stmt_generation.

Insert a new stmt.

If we have an SSA vuse and insert a store, update virtual SSA form to avoid triggering the renamer. Do so only if we can easily see all uses - which is what almost always happens with the way vectorized stmts are inserted.

References gimple_call_internal_fn(), gimple_call_internal_p(), gimple_call_lhs(), gimple_call_num_args(), is_gimple_call(), NULL, NULL_TREE, stmt_can_throw_internal(), STMT_VINFO_BB_VINFO, STMT_VINFO_DEF_TYPE, STMT_VINFO_LOOP_VINFO, STMT_VINFO_RELEVANT_P, STMT_VINFO_VECTYPE, TREE_CODE, type(), vect_internal_def, vect_unknown_def_type, vinfo_for_stmt(), and vNULL.

| tree vect_gen_perm_mask | ( | ) |

Given a vector type VECTYPE and permutation SEL returns the VECTOR_CST mask that implements the permutation of the vector elements. If that is impossible to do, returns NULL.

References dump_enabled_p(), dump_printf_loc(), MSG_MISSED_OPTIMIZATION, and vect_location.

|

static |

Function vect_gen_widened_results_half

Create a vector stmt whose code, type, number of arguments, and result variable are CODE, OP_TYPE, and VEC_DEST, and its arguments are VEC_OPRND0 and VEC_OPRND1. The new vector stmt is to be inserted at BSI. In the case that CODE is a CALL_EXPR, this means that a call to DECL needs to be created (DECL is a function-decl of a target-builtin). STMT is the original scalar stmt that we are vectorizing.

Generate half of the widened result:

Target specific support

Generic support

References SLP_TREE_VEC_STMTS, STMT_VINFO_RELATED_STMT, STMT_VINFO_VEC_STMT, and vinfo_for_stmt().

Referenced by vect_get_loop_based_defs().

| void vect_get_load_cost | ( | struct data_reference * dr, int ncopies, bool add_realign_cost, unsigned int * inside_cost, unsigned int * prologue_cost, stmt_vector_for_cost * prologue_cost_vec, stmt_vector_for_cost * body_cost_vec, bool record_prologue_costs | ) |

Calculate cost of DR's memory access.

Here, we assign an additional cost for the unaligned load.

FIXME: If the misalignment remains fixed across the iterations of the containing loop, the following cost should be added to the prologue costs.

Unaligned software pipeline has a load of an address, an initial load, and possibly a mask operation to "prime" the loop. However, if this is an access in a group of loads, which provide grouped access, then the above cost should only be considered for one access in the group. Inside the loop, there is a load op and a realignment op.

References targetm.

|

static |

Get vectorized definitions for loop-based vectorization. For the first operand we call vect_get_vec_def_for_operand() (with OPRND containing scalar operand), and for the rest we get a copy with vect_get_vec_def_for_stmt_copy() using the previous vector definition (stored in OPRND). See vect_get_vec_def_for_stmt_copy() for details. The vectors are collected into VEC_OPRNDS.

Get first vector operand.

All the vector operands except the very first one (that is scalar oprnd) are stmt copies.

Get second vector operand.

For conversion in multiple steps, continue to get operands recursively.

References binary_op, FOR_EACH_VEC_ELT, gimple_assign_lhs(), gimple_call_lhs(), is_gimple_call(), NULL_TREE, vect_gen_widened_results_half(), and vNULL.

| void vect_get_store_cost | ( | struct data_reference * dr, int ncopies, unsigned int * inside_cost, stmt_vector_for_cost * body_cost_vec | ) |

Calculate cost of DR's memory access.

Here, we assign an additional cost for the unaligned store.

References dump_enabled_p(), dump_printf_loc(), exact_log2(), first_stmt(), GROUP_FIRST_ELEMENT, MSG_NOTE, PURE_SLP_STMT, record_stmt_cost(), STMT_VINFO_DATA_REF, STMT_VINFO_GROUPED_ACCESS, STMT_VINFO_STRIDE_LOAD_P, STMT_VINFO_VECTYPE, TYPE_VECTOR_SUBPARTS, vec_perm, vect_body, vect_cost_group_size(), vect_location, and vinfo_for_stmt().

| tree vect_get_vec_def_for_operand | ( | ) |

Function vect_get_vec_def_for_operand.

OP is an operand in STMT. This function returns a (vector) def that will be used in the vectorized stmt for STMT.

In the case that OP is an SSA_NAME which is defined in the loop, then STMT_VINFO_VEC_STMT of the defining stmt holds the relevant def.

In case OP is an invariant or constant, a new stmt that creates a vector def needs to be introduced.

Case 1: operand is a constant.

Create 'vect_cst_ = {cst,cst,...,cst}'

Case 2: operand is defined outside the loop - loop invariant.

Create 'vec_inv = {inv,inv,..,inv}'

Case 3: operand is defined inside the loop.

Get the def from the vectorized stmt.

Get vectorized pattern statement.

Case 4: operand is defined by a loop header phi - reduction

Get the def before the loop

Case 5: operand is defined by loop-header phi - induction.

Get the def from the vectorized stmt.

References dump_enabled_p(), dump_printf_loc(), gcc_assert, get_vectype_for_scalar_type(), MSG_NOTE, NULL, TREE_TYPE, vect_init_vector(), and vect_location.
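The first three cases can be sketched on a toy operand type: constants and loop invariants are broadcast into every lane, while operands defined inside the loop reuse the vector def already produced for their defining statement. All names here (`DefKind`, `ToyOperand`, `vec_def_for_operand`) are hypothetical, and the reduction and induction cases are omitted.

```cpp
#include <cassert>
#include <vector>

enum DefKind { kConstant, kLoopInvariant, kInternal };

// Hypothetical operand model.
struct ToyOperand {
  DefKind kind;
  int scalar;                       // value for constants and invariants
  const std::vector<int> *vec_def;  // existing vector def for internal defs
};

std::vector<int> vec_def_for_operand(const ToyOperand &op, unsigned nunits) {
  switch (op.kind) {
    case kConstant:       // Case 1: {cst,cst,...,cst}
    case kLoopInvariant:  // Case 2: {inv,inv,...,inv}
      return std::vector<int>(nunits, op.scalar);
    case kInternal:       // Case 3: take the def from the vectorized stmt
      assert(op.vec_def != nullptr);
      return *op.vec_def;
  }
  return std::vector<int>();
}
```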

Referenced by get_initial_def_for_reduction().

| tree vect_get_vec_def_for_stmt_copy | ( | ) |

Function vect_get_vec_def_for_stmt_copy

Return a vector-def for an operand. This function is used when the vectorized stmt to be created (by the caller to this function) is a "copy" created in case the vectorized result cannot fit in one vector, and several copies of the vector-stmt are required. In this case the vector-def is retrieved from the vector stmt recorded in the STMT_VINFO_RELATED_STMT field of the stmt that defines VEC_OPRND. DT is the type of the vector def VEC_OPRND.

Context: In case the vectorization factor (VF) is bigger than the number of elements that can fit in a vectype (nunits), we have to generate more than one vector stmt to vectorize the scalar stmt. This situation arises when there are multiple data-types operated upon in the loop; the smallest data-type determines the VF, and as a result, when vectorizing stmts operating on wider types we need to create 'VF/nunits' "copies" of the vector stmt (each computing a vector of 'nunits' results, and together computing 'VF' results in each iteration). This function is called when vectorizing such a stmt (e.g. vectorizing S2 in the illustration below, in which VF=16 and nunits=4, so the number of copies required is 4):

| scalar stmt | vectorized into | STMT_VINFO_RELATED_STMT |
| --- | --- | --- |
| S1: x = load | VS1.0: vx.0 = memref0 | VS1.1 |
| | VS1.1: vx.1 = memref1 | VS1.2 |
| | VS1.2: vx.2 = memref2 | VS1.3 |
| | VS1.3: vx.3 = memref3 | |
| S2: z = x + ... | VSnew.0: vz0 = vx.0 + ... | VSnew.1 |
| | VSnew.1: vz1 = vx.1 + ... | VSnew.2 |
| | VSnew.2: vz2 = vx.2 + ... | VSnew.3 |
| | VSnew.3: vz3 = vx.3 + ... | |

The vectorization of S1 is explained in vectorizable_load. The vectorization of S2: To create the first vector-stmt out of the 4 copies - VSnew.0 - the function 'vect_get_vec_def_for_operand' is called to get the relevant vector-def for each operand of S2. For operand x it returns the vector-def 'vx.0'.

To create the remaining copies of the vector-stmt (VSnew.j), this function is called to get the relevant vector-def for each operand. It is obtained from the respective VS1.j stmt, which is recorded in the STMT_VINFO_RELATED_STMT field of the stmt that defines VEC_OPRND.

For example, to obtain the vector-def 'vx.1' in order to create the vector stmt 'VSnew.1', this function is called with VEC_OPRND='vx.0'. Given 'vx.0' we obtain the stmt that defines it ('VS1.0'); from the STMT_VINFO_RELATED_STMT field of 'VS1.0' we obtain the next copy - 'VS1.1', and return its def ('vx.1'). Overall, to create the above sequence this function will be called 3 times:

    vx.1 = vect_get_vec_def_for_stmt_copy (dt, vx.0);
    vx.2 = vect_get_vec_def_for_stmt_copy (dt, vx.1);
    vx.3 = vect_get_vec_def_for_stmt_copy (dt, vx.2);

Do nothing; can reuse same def.

Referenced by get_initial_def_for_reduction(), and vect_create_epilog_for_reduction().
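The chain walk described for this function can be modelled as a singly linked list in which each vector stmt points at the next copy. `ToyVecStmt`, `next_copy` and `copies_needed` are hypothetical names; the `related` field plays the role that the text assigns to STMT_VINFO_RELATED_STMT.

```cpp
#include <cassert>
#include <string>

// Hypothetical vector statement: the def it produces and the next copy.
struct ToyVecStmt {
  std::string def;            // e.g. "vx.0"
  const ToyVecStmt *related;  // next copy in the chain, nullptr for the last
};

// Number of vector stmts needed for one scalar stmt.
unsigned copies_needed(unsigned vf, unsigned nunits) {
  assert(nunits != 0 && vf % nunits == 0);
  return vf / nunits;
}

// Given the stmt defining the previous vector operand, return the stmt that
// defines the operand for the next copy.
const ToyVecStmt *next_copy(const ToyVecStmt *defining_stmt) {
  assert(defining_stmt != nullptr && defining_stmt->related != nullptr);
  return defining_stmt->related;
}
```

With VF=16 and nunits=4, four copies are needed, so the chain is followed three times after the first def is obtained.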

| void vect_get_vec_defs | ( | tree op0, tree op1, gimple stmt, vec< tree > * vec_oprnds0, vec< tree > * vec_oprnds1, slp_tree slp_node, int reduc_index | ) |

Get vectorized definitions for OP0 and OP1. REDUC_INDEX is the index of reduction operand in case of reduction, and -1 otherwise.

Referenced by get_initial_def_for_reduction().

|

static |

Get vectorized definitions for the operands to create a copy of an original stmt. See vect_get_vec_def_for_stmt_copy () for details.

References copy_ssa_name(), ECF_CONST, ECF_NOVOPS, ECF_PURE, gimple_assign_lhs(), gimple_call_flags(), gimple_set_vdef(), gimple_set_vuse(), gimple_vdef(), gimple_vuse(), gimple_vuse_op(), gsi_stmt(), is_gimple_assign(), is_gimple_call(), is_gimple_reg(), SET_USE, and TREE_CODE.

| tree vect_init_vector | ( | ) |

Function vect_init_vector.

Insert a new stmt (INIT_STMT) that initializes a new variable of type TYPE with the value VAL. If TYPE is a vector type and VAL does not have vector type a vector with all elements equal to VAL is created first. Place the initialization at BSI if it is not NULL. Otherwise, place the initialization at the loop preheader. Return the DEF of INIT_STMT. It will be used in the vectorization of STMT.

Referenced by vect_get_vec_def_for_operand().

|

static |

Insert the new stmt NEW_STMT at *GSI or at the appropriate place in the loop preheader for the vectorized stmt STMT.

References build_vector_from_val(), CONSTANT_CLASS_P, fold_unary, gimple_build_assign_with_ops(), make_ssa_name(), NULL, NULL_TREE, TREE_TYPE, and types_compatible_p().

|

static |

Function vect_is_simple_cond.

Input:

- LOOP - the loop that is being vectorized.

- COND - Condition that is checked for simple use.

Output:

- *COMP_VECTYPE - the vector type for the comparison.

Returns whether a COND can be vectorized. Checks whether condition operands are supportable using vect_is_simple_use.

| bool vect_is_simple_use | ( | tree operand, gimple stmt, loop_vec_info loop_vinfo, bb_vec_info bb_vinfo, gimple * def_stmt, tree * def, enum vect_def_type * dt | ) |

Function vect_is_simple_use.

Input:

- LOOP_VINFO - the vect info of the loop that is being vectorized.

- BB_VINFO - the vect info of the basic block that is being vectorized.

- OPERAND - operand of STMT in the loop or bb.

- DEF - the defining stmt in case OPERAND is an SSA_NAME.

Returns whether a stmt with OPERAND can be vectorized. For loops, supportable operands are constants, loop invariants, and operands that are defined by the current iteration of the loop. Unsupportable operands are those that are defined by a previous iteration of the loop (as is the case in reduction/induction computations). For basic blocks, supportable operands are constants and bb invariants. For now, operands defined outside the basic block are not supported.

Empty stmt is expected only in case of a function argument. (Otherwise - we expect a phi_node or a GIMPLE_ASSIGN).

FALLTHRU

| bool vect_is_simple_use_1 | ( | tree operand, gimple stmt, loop_vec_info loop_vinfo, bb_vec_info bb_vinfo, gimple * def_stmt, tree * def, enum vect_def_type * dt, tree * vectype | ) |

Function vect_is_simple_use_1.

Same as vect_is_simple_use but also determines the vector operand type of OPERAND and stores it to *VECTYPE. If the definition of OPERAND is vect_uninitialized_def, vect_constant_def or vect_external_def, *VECTYPE will be set to NULL_TREE and the caller is responsible for computing the best suited vector type for the scalar operand.

Now get a vector type if the def is internal, otherwise supply NULL_TREE and leave it up to the caller to figure out a proper type for the use stmt.

|

static |

Utility functions used by vect_mark_stmts_to_be_vectorized.

Function vect_mark_relevant.

Mark STMT as "relevant for vectorization" and add it to WORKLIST.

If this stmt is an original stmt in a pattern, we might need to mark its related pattern stmt instead of the original stmt. However, such stmts may have their own uses that are not in any pattern, in such cases the stmt itself should be marked.

This use is out of pattern use, if LHS has other uses that are pattern uses, we should mark the stmt itself, and not the pattern stmt.

This is the last stmt in a sequence that was detected as a pattern that can potentially be vectorized. Don't mark the stmt as relevant/live because it's not going to be vectorized. Instead mark the pattern-stmt that replaces it.

References dump_enabled_p(), dump_printf_loc(), flow_bb_inside_loop_p(), FOR_EACH_IMM_USE_FAST, gcc_assert, gimple_assign_lhs(), gimple_call_lhs(), is_gimple_assign(), is_gimple_debug(), LOOP_VINFO_LOOP, MSG_NOTE, STMT_VINFO_IN_PATTERN_P, STMT_VINFO_LIVE_P, STMT_VINFO_LOOP_VINFO, STMT_VINFO_RELATED_STMT, STMT_VINFO_RELEVANT, TREE_CODE, USE_STMT, vect_location, and vinfo_for_stmt().

| bool vect_mark_stmts_to_be_vectorized | ( | ) |

Function vect_mark_stmts_to_be_vectorized.

Not all stmts in the loop need to be vectorized. For example:

    for i...
      for j...
        1. T0 = i + j
        2. T1 = a[T0]
        3. j = j + 1

Stmt 1 and 3 do not need to be vectorized, because loop control and addressing of vectorized data-refs are handled differently.

This pass detects such stmts.

1. Init worklist.

2. Process_worklist

Examine the USEs of STMT. For each USE, mark the stmt that defines it (DEF_STMT) as relevant/irrelevant and live/dead according to the liveness and relevance properties of STMT.

Generally, the liveness and relevance properties of STMT are propagated as is to the DEF_STMTs of its USEs:

    live_p <-- STMT_VINFO_LIVE_P (STMT_VINFO)
    relevant <-- STMT_VINFO_RELEVANT (STMT_VINFO)

One exception is when STMT has been identified as defining a reduction variable; in this case we set the liveness/relevance as follows:

    live_p = false
    relevant = vect_used_by_reduction

This is because we distinguish between two kinds of relevant stmts - those that are used by a reduction computation, and those that are (also) used by a regular computation. This allows us later on to identify stmts that are used solely by a reduction, and therefore the order of the results that they produce does not have to be kept.

fall through

Pattern statements are not inserted into the code, so FOR_EACH_PHI_OR_STMT_USE optimizes their operands out, and we have to scan the RHS or function arguments instead.
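The two phases (init worklist, process worklist) amount to a standard worklist propagation. The sketch below is a heavily simplified model: liveness, reductions and pattern statements are left out, `ToyStmt` is a hypothetical type, and each statement's `uses` list is assumed to contain only operands that are not used purely for address computation.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical toy statement. `uses` holds the indices of the statements that
// define its non-indexing operands.
struct ToyStmt {
  std::vector<int> uses;
  bool relevant;
};

void mark_stmts_to_be_vectorized(std::vector<ToyStmt> &stmts,
                                 const std::vector<int> &seeds) {
  std::vector<int> worklist;
  // 1. Init worklist with statements that are relevant on their own.
  for (std::size_t i = 0; i < seeds.size(); ++i) {
    if (!stmts[seeds[i]].relevant) {
      stmts[seeds[i]].relevant = true;
      worklist.push_back(seeds[i]);
    }
  }
  // 2. Process worklist: propagate relevance to the defining statements.
  while (!worklist.empty()) {
    int cur = worklist.back();
    worklist.pop_back();
    for (std::size_t i = 0; i < stmts[cur].uses.size(); ++i) {
      int def = stmts[cur].uses[i];
      if (!stmts[def].relevant) {
        stmts[def].relevant = true;
        worklist.push_back(def);
      }
    }
  }
}
```

As a usage example, take the three statements from the loop above as indices 0 to 2 and add a hypothetical fourth statement (index 3) that stores T1. Seeding with the store marks the load `T1 = a[T0]` as relevant, while `T0 = i + j` and `j = j + 1` stay unmarked.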

| void vect_model_load_cost | ( | stmt_vec_info stmt_info, int ncopies, bool load_lanes_p, slp_tree slp_node, stmt_vector_for_cost * prologue_cost_vec, stmt_vector_for_cost * body_cost_vec | ) |

Function vect_model_load_cost

Models cost for loads. In the case of grouped accesses, the last access has the overhead of the grouped access attributed to it. Since unaligned accesses are supported for loads, we also account for the costs of the access scheme chosen.

The SLP costs were already calculated during SLP tree build.

Grouped accesses?

Not a grouped access.

We assume that the cost of a single load-lanes instruction is equivalent to the cost of GROUP_SIZE separate loads. If a grouped access is instead being provided by a load-and-permute operation, include the cost of the permutes.

Uses even and odd extract operations for each needed permute.

The loads themselves.

N scalar loads plus gathering them into a vector.
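To make the permute overhead of a grouped access concrete, the sketch below counts permute statements under the assumption that the count is `ncopies * log2(group_size) * group_size`. That formula is an assumption for illustration and is not stated on this page; `exact_log2_int` and `grouped_permute_stmts` are hypothetical helpers.

```cpp
#include <cassert>

// Returns log2(x) if x is a power of two, otherwise -1.
int exact_log2_int(int x) {
  if (x <= 0 || (x & (x - 1)) != 0)
    return -1;
  int log = 0;
  while ((x >>= 1) != 0)
    ++log;
  return log;
}

// Assumed permute count for a load-and-permute grouped access.
int grouped_permute_stmts(int ncopies, int group_size) {
  int log = exact_log2_int(group_size);
  assert(log >= 0);  // the sketch only handles power-of-two groups
  return ncopies * log * group_size;
}
```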

|

static |

Model cost for type demotion and promotion operations. PWR is normally zero for single-step promotions and demotions. It will be one if two-step promotion/demotion is required, and so on. Each additional step doubles the number of instructions required.

The SLP costs were already calculated during SLP tree build.

FORNOW: Assuming maximum 2 args per stmt.

References first_stmt(), GROUP_FIRST_ELEMENT, GROUP_SIZE, and STMT_VINFO_STMT.
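The doubling per step can be written out as a small sum. In the sketch below, step `i` of a demotion is assumed to need `2^i` statements and step `i` of a promotion `2^(i+1)` (a promotion produces two halves per input). Both exponents are assumptions made for this illustration, and `promote_demote_stmt_count` is a hypothetical helper.

```cpp
#include <cassert>

// Total statement count for a (pwr + 1)-step promotion or demotion, assuming
// each additional step doubles the number of statements.
int promote_demote_stmt_count(int pwr, bool is_promotion) {
  int total = 0;
  for (int i = 0; i <= pwr; ++i) {
    int exponent = is_promotion ? i + 1 : i;  // assumed offset for promotion
    total += 1 << exponent;
  }
  return total;
}
```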

| void vect_model_simple_cost | ( | stmt_vec_info stmt_info, int ncopies, enum vect_def_type * dt, stmt_vector_for_cost * prologue_cost_vec, stmt_vector_for_cost * body_cost_vec | ) |

Function vect_model_simple_cost.

Models cost for simple operations, i.e. those that only emit ncopies of a single op. Right now, this does not account for multiple insns that could be generated for the single vector op. We will handle that shortly.

The SLP costs were already calculated during SLP tree build.

FORNOW: Assuming maximum 2 args per stmt.

Pass the inside-of-loop statements to the target-specific cost model.

References add_stmt_cost(), BB_VINFO_TARGET_COST_DATA, dump_enabled_p(), dump_printf_loc(), LOOP_VINFO_TARGET_COST_DATA, MSG_NOTE, PURE_SLP_STMT, STMT_VINFO_BB_VINFO, STMT_VINFO_LOOP_VINFO, STMT_VINFO_TYPE, type_promotion_vec_info_type, vec_promote_demote, vect_body, vect_constant_def, vect_external_def, vect_location, vect_pow2(), vect_prologue, and vector_stmt.

| void vect_model_store_cost | ( | stmt_vec_info stmt_info, int ncopies, bool store_lanes_p, enum vect_def_type dt, slp_tree slp_node, stmt_vector_for_cost * prologue_cost_vec, stmt_vector_for_cost * body_cost_vec | ) |

Function vect_model_store_cost

Models cost for stores. In the case of grouped accesses, one access has the overhead of the grouped access attributed to it.

The SLP costs were already calculated during SLP tree build.

Grouped access?

Not a grouped access.

We assume that the cost of a single store-lanes instruction is equivalent to the cost of GROUP_SIZE separate stores. If a grouped access is instead being provided by a permute-and-store operation, include the cost of the permutes.

Uses a high and low interleave operation for each needed permute.

Costs of the stores.

References dump_enabled_p(), dump_printf_loc(), exact_log2(), MSG_NOTE, record_stmt_cost(), vec_perm, vect_body, and vect_location.

| void vect_remove_stores | ( | ) |

Remove a group of stores (for SLP or interleaving), free their stmt_vec_info.

Free the attached stmt_vec_info and remove the stmt.

|

static |

Function vect_stmt_relevant_p.

Return true if STMT, in the loop represented by LOOP_VINFO, is "relevant for vectorization".

A stmt is considered "relevant for vectorization" if:

- it has uses outside the loop.

- it has vdefs (it alters memory).

- it is a control stmt in the loop (except for the exit condition).
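
The three criteria listed above can be read as a simple predicate. The sketch below uses a hypothetical `toy_stmt` record with one flag per criterion; it is not the vectorizer's data structure, only a compact restatement of the rules.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical summary of the properties the relevance test looks at.  */
struct toy_stmt
{
  bool is_control_stmt;    /* A control stmt inside the loop.  */
  bool is_loop_exit_cond;  /* ... that is the loop exit condition.  */
  bool has_vdef;           /* The stmt alters memory.  */
  bool used_outside_loop;  /* Its result is used after the loop.  */
};

/* Return true if STMT meets any of the listed criteria.  */
static bool
toy_stmt_relevant_p (const struct toy_stmt *stmt)
{
  assert (stmt != NULL);
  if (stmt->is_control_stmt && !stmt->is_loop_exit_cond)
    return true;
  if (stmt->has_vdef)
    return true;
  if (stmt->used_outside_loop)
    return true;
  return false;
}
```

A store is relevant because it has a vdef, while the loop exit condition on its own is not.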

CHECKME: what other side effects would the vectorizer allow?

cond stmt other than loop exit cond.

changing memory.

uses outside the loop.

We expect all such uses to be in the loop exit phis

(because of loop closed form)

References dump_enabled_p(), dump_printf_loc(), MSG_NOTE, vect_location, and vect_used_in_scope.

| bool vect_supportable_shift | ( | ) |

Return TRUE if CODE (a shift operation) is supported for SCALAR_TYPE either as shift by a scalar or by a vector.

| bool vect_transform_stmt | ( | gimple stmt, gimple_stmt_iterator * gsi, bool * grouped_store, slp_tree slp_node, slp_instance slp_node_instance | ) |

Function vect_transform_stmt.

Create a vectorized stmt to replace STMT, and insert it at GSI.

In case of interleaving, the whole chain is vectorized when the

last store in the chain is reached. Store stmts before the last

one are skipped, and their stmt_vec_info shouldn't be freed

meanwhile.

Handle inner-loop stmts whose DEF is used in the loop-nest that

is being vectorized, but outside the immediately enclosing loop.

Find the relevant loop-exit phi-node, and record the vec_stmt there

(to be used when vectorizing outer-loop stmts that use the DEF of

STMT).

Handle stmts whose DEF is used outside the loop-nest that is being vectorized.

|

static |

Function vectorizable_assignment.

Check if STMT performs an assignment (copy) that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

Is vectorizable assignment?

We can handle NOP_EXPR conversions that do not change the number of elements or the vector size.

We do not handle bit-precision changes.

But a conversion that does not change the bit-pattern is ok.

Transform.

Handle def.

Handle use.

Handle uses.

Arguments are ready. create the new vector stmt.

Referenced by vect_analyze_stmt().

|

static |

Function vectorizable_call.

Check if STMT performs a function call that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Is STMT a vectorizable call?

Process function arguments.

Bail out if the function has more than three arguments; we do not have interesting builtin functions to vectorize with more than two arguments, except for fma. Having no arguments is also not good.

Ignore the argument of IFN_GOMP_SIMD_LANE, it is magic.

We can only handle calls with arguments of the same type.

If all arguments are external or constant defs use a vector type with the same size as the output vector type.

FORNOW

For now, we only vectorize functions if a target specific builtin is available. TODO – in some cases, it might be profitable to insert the calls for pieces of the vector, in order to be able to vectorize other operations in the loop.

We can handle IFN_GOMP_SIMD_LANE by returning a

{ 0, 1, 2, ... vf - 1 } vector.

Sanity check: make sure that at least one copy of the vectorized stmt needs to be generated.

Transform.

Handle def.

Build argument list for the vectorized call.

Arguments are ready. Create the new vector stmt.

Build argument list for the vectorized call.

Arguments are ready. Create the new vector stmt.

No current target implements this case.

Update the exception handling table with the vector stmt if necessary.

The call in STMT might prevent it from being removed in dce. We however cannot remove it here, due to the way the ssa name it defines is mapped to the new definition. So just replace rhs of the statement with something harmless.

Referenced by vect_analyze_stmt().

| bool vectorizable_condition | ( | gimple stmt, gimple_stmt_iterator * gsi, gimple * vec_stmt, tree reduc_def, int reduc_index, slp_tree slp_node | ) |

vectorizable_condition.

Check if STMT is a conditional modify expression that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt using VEC_COND_EXPR to replace it, put it in VEC_STMT, and insert it at GSI.

When STMT is vectorized as a nested cycle, REDUC_DEF is the vector variable to be used at REDUC_INDEX (in the then clause if REDUC_INDEX is 1, and in the else clause if it is 2).

Return FALSE if not a vectorizable STMT, TRUE otherwise.
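
As a rough picture of what a VEC_COND_EXPR-style statement computes, the sketch below performs an element-wise comparison that yields a signed mask (all ones for true, zero for false) and then selects per lane. The fixed lane count `TOY_NUNITS` and the `toy_` function names are assumptions for illustration only.

```c
#include <assert.h>

enum { TOY_NUNITS = 4 };  /* Assumed number of lanes per vector.  */

/* Lane-wise A < B, producing -1 (all bits set) for true and 0 for false.  */
static void
toy_vec_less_than (const int *a, const int *b, int *mask)
{
  int i;
  for (i = 0; i < TOY_NUNITS; i++)
    mask[i] = a[i] < b[i] ? -1 : 0;
}

/* Lane-wise select: OUT[i] = MASK[i] ? THEN_V[i] : ELSE_V[i].  */
static void
toy_vec_cond (const int *mask, const int *then_v, const int *else_v, int *out)
{
  int i;
  for (i = 0; i < TOY_NUNITS; i++)
    {
      assert (mask[i] == 0 || mask[i] == -1);
      out[i] = mask[i] ? then_v[i] : else_v[i];
    }
}
```

Selecting `a` where `a < b` and `b` elsewhere gives a lane-wise minimum, which is the shape of a scalar `x = a < b ? a : b` once vectorized.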

FORNOW: not yet supported.

Is vectorizable conditional operation?

The result of a vector comparison should be signed type.

Transform.

Handle def.

Handle cond expr.

Arguments are ready. Create the new vector stmt.

Referenced by vect_analyze_stmt().

|

static |

Check if STMT performs a conversion operation that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Is STMT a vectorizable conversion?

Check types of lhs and rhs.

Check the operands of the operation.

For WIDEN_MULT_EXPR, if OP0 is a constant, use the type of

OP1.

If op0 is an external or constant def, use a vector type of the same size as the output vector type.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

Sanity check: make sure that at least one copy of the vectorized stmt needs to be generated.

Supportable by target?

FALLTHRU

Binary widening operation can only be supported directly by the

architecture.

Transform.

In case of multi-step conversion, we first generate conversion operations to the intermediate types, and then from those types to the final one. We create vector destinations for the intermediate types (TYPES) received from supportable_*_operation, and store them in the correct order for future use in vect_create_vectorized_*_stmts ().
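
A two-step widening can be pictured with fixed 8-byte toy vectors: eight `uint8_t` lanes are first widened to an intermediate type (two vectors of four `uint16_t`), and each intermediate vector is then widened to the final type (two vectors of two `uint32_t`). The vector size, the lo/hi split and all `toy_` names are assumptions chosen for the sketch.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Step 1: one vector of 8 x u8 becomes two vectors of 4 x u16.  */
static void
toy_widen_u8_u16 (const uint8_t in[8], uint16_t lo[4], uint16_t hi[4])
{
  int i;
  for (i = 0; i < 4; i++)
    {
      lo[i] = in[i];
      hi[i] = in[i + 4];
    }
}

/* Step 2: one vector of 4 x u16 becomes two vectors of 2 x u32.  */
static void
toy_widen_u16_u32 (const uint16_t in[4], uint32_t lo[2], uint32_t hi[2])
{
  int i;
  for (i = 0; i < 2; i++)
    {
      lo[i] = in[i];
      hi[i] = in[i + 2];
    }
}

/* Full conversion through the intermediate type; OUT holds four
   result vectors in element order.  */
static void
toy_convert_u8_u32 (const uint8_t in[8], uint32_t out[4][2])
{
  uint16_t mid[2][4];  /* Vectors of the intermediate type.  */
  int i;

  assert (in != NULL && out != NULL);
  toy_widen_u8_u16 (in, mid[0], mid[1]);
  for (i = 0; i < 2; i++)
    toy_widen_u16_u32 (mid[i], out[2 * i], out[2 * i + 1]);
}
```

Each step doubles the number of vectors, which is why the intermediate destinations have to be kept in order.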

Arguments are ready, create the new vector stmt.

In case the vectorization factor (VF) is bigger than the number

of elements that we can fit in a vectype (nunits), we have to

generate more than one vector stmt - i.e - we need to "unroll"

the vector stmt by a factor VF/nunits.

Handle uses.

Store vec_oprnd1 for every vector stmt to be created

for SLP_NODE. We check during the analysis that all

the shift arguments are the same.

Arguments are ready. Create the new vector stmts.

In case the vectorization factor (VF) is bigger than the number

of elements that we can fit in a vectype (nunits), we have to

generate more than one vector stmt - i.e - we need to "unroll"

the vector stmt by a factor VF/nunits.

Handle uses.

Arguments are ready. Create the new vector stmts.

Referenced by vect_analyze_stmt().

| tree vectorizable_function | ( | ) |

Checks if CALL can be vectorized in type VECTYPE. Returns a function declaration if the target has a vectorized version of the function, or NULL_TREE if the function cannot be vectorized.

We only handle functions that do not read or clobber memory – i.e. const or novops ones.

|

static |

vectorizable_load.

Check if STMT reads a non-scalar data-ref (array/pointer/structure) that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

FORNOW. This restriction should be relaxed.

Is vectorizable load?

FORNOW. In some cases can vectorize even if data-type not supported (e.g. - data copies).

Check if the load is a part of an interleaving chain.

FORNOW

Transform.

Currently we support only unconditional gather loads,

so mask should be all ones.

For a load with loop-invariant (but other than power-of-2)

stride (i.e. not a grouped access) like so:

for (i = 0; i < n; i += stride)

... = array[i];

we generate a new induction variable and new accesses to

form a new vector (or vectors, depending on ncopies):

for (j = 0; ; j += VF*stride)

tmp1 = array[j];

tmp2 = array[j + stride];

...

vectemp = {tmp1, tmp2, ...}

Check if the chain of loads is already vectorized.
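
The strided-load scheme described above (N scalar loads gathered into one vector) can be written out in plain C. The sketch assumes a fixed vectorization factor `TOY_VF`, adds the loop bound the pseudocode omits, and finishes leftover elements with a scalar tail; those choices and the `toy_` names are illustrative assumptions.

```c
#include <assert.h>
#include <stddef.h>

enum { TOY_VF = 4 };  /* Assumed vectorization factor.  */

/* Build vectemp = { array[j], array[j + stride], ... } from scalar loads.  */
static void
toy_gather_strided (const int *array, size_t j, size_t stride,
                    int vectemp[TOY_VF])
{
  int k;
  for (k = 0; k < TOY_VF; k++)
    vectemp[k] = array[j + (size_t) k * stride];
}

/* Sum every STRIDE-th element of ARRAY[0..N-1], consuming TOY_VF
   strided elements per iteration of the main loop.  */
static long
toy_sum_strided (const int *array, size_t n, size_t stride)
{
  long total = 0;
  size_t j = 0;
  int vectemp[TOY_VF];
  int k;

  assert (stride > 0);
  /* Main loop: the new induction variable advances by VF * stride.  */
  for (; j + (TOY_VF - 1) * stride < n; j += TOY_VF * stride)
    {
      toy_gather_strided (array, j, stride, vectemp);
      for (k = 0; k < TOY_VF; k++)
        total += vectemp[k];
    }
  /* Scalar tail for the elements that do not fill a whole vector.  */
  for (; j < n; j += stride)
    total += array[j];
  return total;
}
```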

For SLP we would need to copy over SLP_TREE_VEC_STMTS.

??? But we can only do so if there is exactly one

as we have no way to get at the rest. Leave the CSE

opportunity alone.

??? With the group load eventually participating

in multiple different permutations (having multiple

slp nodes which refer to the same group) the CSE

is even wrong code. See PR56270.

VEC_NUM is the number of vect stmts to be created for this group.

Targets with load-lane instructions must not require explicit realignment.

In case the vectorization factor (VF) is bigger than the number

of elements that we can fit in a vectype (nunits), we have to generate

more than one vector stmt - i.e - we need to "unroll" the

vector stmt by a factor VF/nunits. In doing so, we record a pointer

from one copy of the vector stmt to the next, in the field

STMT_VINFO_RELATED_STMT. This is necessary in order to allow following

stages to find the correct vector defs to be used when vectorizing

stmts that use the defs of the current stmt. The example below

illustrates the vectorization process when VF=16 and nunits=4 (i.e., we

need to create 4 vectorized stmts):

before vectorization:

RELATED_STMT VEC_STMT

S1: x = memref - -

S2: z = x + 1 - -

step 1: vectorize stmt S1:

We first create the vector stmt VS1_0, and, as usual, record a

pointer to it in the STMT_VINFO_VEC_STMT of the scalar stmt S1.

Next, we create the vector stmt VS1_1, and record a pointer to

it in the STMT_VINFO_RELATED_STMT of the vector stmt VS1_0.

Similarly, for VS1_2 and VS1_3. This is the resulting chain of

stmts and pointers:

RELATED_STMT VEC_STMT

VS1_0: vx0 = memref0 VS1_1 -

VS1_1: vx1 = memref1 VS1_2 -

VS1_2: vx2 = memref2 VS1_3 -

VS1_3: vx3 = memref3 - -

S1: x = load - VS1_0

S2: z = x + 1 - -

See the documentation in vect_get_vec_def_for_stmt_copy for how the

information we recorded in RELATED_STMT field is used to vectorize

stmt S2.

In case of interleaving (non-unit grouped access):

S1: x2 = &base + 2

S2: x0 = &base

S3: x1 = &base + 1

S4: x3 = &base + 3

Vectorized loads are created in the order of memory accesses

starting from the access of the first stmt of the chain:

VS1: vx0 = &base

VS2: vx1 = &base + vec_size*1

VS3: vx2 = &base + vec_size*2

VS4: vx3 = &base + vec_size*3

Then permutation statements are generated:

VS5: vx5 = VEC_PERM_EXPR < vx0, vx1, { 0, 2, ..., i*2 } >

VS6: vx6 = VEC_PERM_EXPR < vx0, vx1, { 1, 3, ..., i*2+1 } >

...

And they are put in STMT_VINFO_VEC_STMT of the corresponding scalar stmts

(the order of the data-refs in the output of vect_permute_load_chain

corresponds to the order of scalar stmts in the interleaving chain - see

the documentation of vect_permute_load_chain()).

The generation of permutation stmts and recording them in

STMT_VINFO_VEC_STMT is done in vect_transform_grouped_load().
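
The even/odd extraction performed by the two VEC_PERM_EXPR statements above can be emulated on plain arrays. In this sketch a selector index below `TOY_NUNITS` picks from the first input and a larger one picks from the second; the lane count and the `toy_` names are assumptions for illustration.

```c
#include <assert.h>

enum { TOY_NUNITS = 4 };  /* Assumed number of lanes per vector.  */

/* OUT[i] = concat (V0, V1)[SEL[i]], mimicking a two-input permute.  */
static void
toy_vec_perm (const int *v0, const int *v1, const unsigned int *sel, int *out)
{
  int i;
  for (i = 0; i < TOY_NUNITS; i++)
    {
      unsigned int idx = sel[i];
      assert (idx < 2 * TOY_NUNITS);
      out[i] = idx < TOY_NUNITS ? v0[idx] : v1[idx - TOY_NUNITS];
    }
}

/* Split two loaded vectors into their even and odd elements using the
   selectors { 0, 2, ... } and { 1, 3, ... }.  */
static void
toy_extract_even_odd (const int *v0, const int *v1, int *even, int *odd)
{
  unsigned int sel_even[TOY_NUNITS];
  unsigned int sel_odd[TOY_NUNITS];
  unsigned int i;

  for (i = 0; i < TOY_NUNITS; i++)
    {
      sel_even[i] = 2 * i;
      sel_odd[i] = 2 * i + 1;
    }
  toy_vec_perm (v0, v1, sel_even, even);
  toy_vec_perm (v0, v1, sel_odd, odd);
}
```

For a group of two interleaved streams, `even` then holds the elements of the first stream and `odd` those of the second.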

In case of both multiple types and interleaving, the vector loads and

permutation stmts above are created for every copy. The result vector

stmts are put in STMT_VINFO_VEC_STMT for the first copy and in the

corresponding STMT_VINFO_RELATED_STMT for the next copies.

If the data reference is aligned (dr_aligned) or potentially unaligned

on a target that supports unaligned accesses (dr_unaligned_supported)

we generate the following code:

p = initial_addr;

indx = 0;

loop {

p = p + indx * vectype_size;

vec_dest = *(p);

indx = indx + 1;

}

Otherwise, the data reference is potentially unaligned on a target that

does not support unaligned accesses (dr_explicit_realign_optimized) -

then generate the following code, in which the data in each iteration is

obtained by two vector loads, one from the previous iteration, and one

from the current iteration:

p1 = initial_addr;

msq_init = *(floor(p1))

p2 = initial_addr + VS - 1;

realignment_token = call target_builtin;

indx = 0;

loop {

p2 = p2 + indx * vectype_size

lsq = *(floor(p2))

vec_dest = realign_load (msq, lsq, realignment_token)

indx = indx + 1;

msq = lsq;

}

If the misalignment remains the same throughout the execution of the loop, we can create the init_addr and permutation mask at the loop preheader. Otherwise, it needs to be created inside the loop. This can only occur when vectorizing memory accesses in the inner-loop nested within an outer-loop that is being vectorized.
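
The two-load realignment scheme described above can be emulated with aligned "vectors" of `TOY_VS` ints: each result is assembled from the previous aligned load (msq) and the current one (lsq). The sketch models the realignment token as the element offset of the start address within an aligned vector and only covers a non-zero offset; these simplifications and the `toy_` names are assumptions, not the target builtin's behaviour.

```c
#include <assert.h>
#include <stddef.h>

enum { TOY_VS = 4 };  /* Assumed vector size in elements.  */

/* Take TOY_VS elements starting TOKEN elements into MSQ, continuing
   into LSQ once MSQ is exhausted.  */
static void
toy_realign_load (const int *msq, const int *lsq, size_t token, int *vec_dest)
{
  size_t k;
  assert (token < TOY_VS);
  for (k = 0; k < TOY_VS; k++)
    {
      size_t idx = token + k;
      vec_dest[k] = idx < TOY_VS ? msq[idx] : lsq[idx - TOY_VS];
    }
}

/* Load NVECS vectors starting at element START of ALIGNED_BASE, which is
   treated as aligned at every multiple of TOY_VS.  */
static void
toy_load_misaligned (const int *aligned_base, size_t start, size_t nvecs,
                     int *dst)
{
  size_t token = start % TOY_VS;
  const int *msq = aligned_base + (start - token);  /* *(floor (p1)) */
  size_t indx;

  /* With token == 0 the carried-over msq would lag one vector behind,
     so this sketch handles the misaligned case only.  */
  assert (token != 0);
  for (indx = 0; indx < nvecs; indx++)
    {
      size_t p2 = start + TOY_VS - 1 + indx * TOY_VS;
      const int *lsq = aligned_base + (p2 - p2 % TOY_VS);  /* *(floor (p2)) */
      toy_realign_load (msq, lsq, token, dst + indx * TOY_VS);
      msq = lsq;  /* Reuse this load in the next iteration.  */
    }
}
```

Only one new aligned load is issued per iteration, since msq is carried over from the previous one.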

1. Create the vector or array pointer update chain.

Emit:

VEC_ARRAY = LOAD_LANES (MEM_REF[...all elements...]).

Extract each vector into an SSA_NAME.

Record the mapping between SSA_NAMEs and statements.

2. Create the vector-load in the loop.

3. Handle explicit realignment if necessary/supported.

Create in loop:

vec_dest = realign_load (msq, lsq, realignment_token)

4. Handle invariant-load.

Collect vector loads and later create their permutation in

vect_transform_grouped_load ().

Store vector loads in the corresponding SLP_NODE.

Bump the vector pointer to account for a gap.

Referenced by vect_analyze_stmt().

|

static |

Function vectorizable_operation.

Check if STMT performs a binary, unary or ternary operation that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Is STMT a vectorizable binary/unary operation?

For pointer addition, we should use the normal plus for the vector addition.

Support only unary or binary operations.

Most operations cannot handle bit-precision types without extra truncations.

Exceptions are bitwise binary operations.

If op0 is an external or constant def use a vector type with the same size as the output vector type.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

Shifts are handled in vectorizable_shift ().

Supportable by target?

Check only during analysis.

Worthwhile without SIMD support? Check only during analysis.

Transform.

Handle def.

In case the vectorization factor (VF) is bigger than the number

of elements that we can fit in a vectype (nunits), we have to generate

more than one vector stmt - i.e - we need to "unroll" the

vector stmt by a factor VF/nunits. In doing so, we record a pointer

from one copy of the vector stmt to the next, in the field

STMT_VINFO_RELATED_STMT. This is necessary in order to allow following

stages to find the correct vector defs to be used when vectorizing

stmts that use the defs of the current stmt. The example below

illustrates the vectorization process when VF=16 and nunits=4 (i.e.,

we need to create 4 vectorized stmts):

before vectorization:

RELATED_STMT VEC_STMT

S1: x = memref - -

S2: z = x + 1 - -

step 1: vectorize stmt S1 (done in vectorizable_load. See more details

there):

RELATED_STMT VEC_STMT

VS1_0: vx0 = memref0 VS1_1 -

VS1_1: vx1 = memref1 VS1_2 -

VS1_2: vx2 = memref2 VS1_3 -

VS1_3: vx3 = memref3 - -

S1: x = load - VS1_0

S2: z = x + 1 - -

step2: vectorize stmt S2 (done here):

To vectorize stmt S2 we first need to find the relevant vector

def for the first operand 'x'. This is, as usual, obtained from

the vector stmt recorded in the STMT_VINFO_VEC_STMT of the stmt

that defines 'x' (S1). This way we find the stmt VS1_0, and the

relevant vector def 'vx0'. Having found 'vx0' we can generate

the vector stmt VS2_0, and as usual, record it in the

STMT_VINFO_VEC_STMT of stmt S2.

When creating the second copy (VS2_1), we obtain the relevant vector

def from the vector stmt recorded in the STMT_VINFO_RELATED_STMT of

stmt VS1_0. This way we find the stmt VS1_1 and the relevant

vector def 'vx1'. Using 'vx1' we create stmt VS2_1 and record a

pointer to it in the STMT_VINFO_RELATED_STMT of the vector stmt VS2_0.

Similarly when creating stmts VS2_2 and VS2_3. This is the resulting

chain of stmts and pointers:

RELATED_STMT VEC_STMT

VS1_0: vx0 = memref0 VS1_1 -

VS1_1: vx1 = memref1 VS1_2 -

VS1_2: vx2 = memref2 VS1_3 -

VS1_3: vx3 = memref3 - -

S1: x = load - VS1_0

VS2_0: vz0 = vx0 + v1 VS2_1 -

VS2_1: vz1 = vx1 + v1 VS2_2 -

VS2_2: vz2 = vx2 + v1 VS2_3 -

VS2_3: vz3 = vx3 + v1 - -

S2: z = x + 1 - VS2_0

Handle uses.
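
The chain of copies walked through in the example above is essentially a singly linked list: the scalar stmt points at copy 0, and each copy points at the next one through its related-stmt field. The sketch below uses a hypothetical `toy_vec_stmt` record rather than the real stmt_vec_info, and derives the number of copies as VF / nunits.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for one vectorized copy of a scalar stmt.  */
struct toy_vec_stmt
{
  int copy_index;
  struct toy_vec_stmt *related_stmt;  /* Next copy, or NULL for the last.  */
};

/* Number of vector stmts needed when VF exceeds NUNITS.  */
static int
toy_ncopies (int vf, int nunits)
{
  assert (nunits > 0 && vf % nunits == 0);
  return vf / nunits;
}

/* Link STMTS[0..NCOPIES-1] into a chain and return its head, i.e. what
   the scalar stmt's VEC_STMT field would point to.  */
static struct toy_vec_stmt *
toy_link_copies (struct toy_vec_stmt *stmts, int ncopies)
{
  int i;
  for (i = 0; i < ncopies; i++)
    {
      stmts[i].copy_index = i;
      stmts[i].related_stmt = i + 1 < ncopies ? &stmts[i + 1] : NULL;
    }
  return ncopies > 0 ? &stmts[0] : NULL;
}

/* Find copy N by following the related-stmt pointers from FIRST.  */
static struct toy_vec_stmt *
toy_get_copy (struct toy_vec_stmt *first, int n)
{
  while (first != NULL && n-- > 0)
    first = first->related_stmt;
  return first;
}
```

With VF=16 and nunits=4 this yields four linked copies; vectorizing a use of copy 1 means following one related-stmt link from the head, as in the VS2_1 step of the example.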

Arguments are ready. Create the new vector stmt.

Referenced by vect_analyze_stmt().

|

static |

Function vectorizable_shift.

Check if STMT performs a shift operation that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Is STMT a vectorizable binary/unary operation?

If op0 is an external or constant def use a vector type with the same size as the output vector type.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

Determine whether the shift amount is a vector, or scalar. If the shift/rotate amount is a vector, use the vector/vector shift optabs.

In SLP, need to check whether the shift count is the same,

in loops if it is a constant or invariant, it is always

a scalar shift.

Vector shifted by vector.

See if the machine has a vector shifted by scalar insn and if not then see if it has a vector shifted by vector insn.
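
The two shift forms differ only in where the shift amount comes from: one scalar shared by all lanes, or one amount per lane. The sketch below shows both on 32-bit lanes; the lane count and the `toy_` names are assumptions for illustration.

```c
#include <assert.h>
#include <stdint.h>

enum { TOY_NUNITS = 4 };  /* Assumed number of lanes per vector.  */

/* Vector shifted by scalar: every lane uses the same AMOUNT.  */
static void
toy_shl_by_scalar (const uint32_t *v, uint32_t amount, uint32_t *out)
{
  int i;
  assert (amount < 32);
  for (i = 0; i < TOY_NUNITS; i++)
    out[i] = v[i] << amount;
}

/* Vector shifted by vector: lane i uses AMOUNTS[i].  */
static void
toy_shl_by_vector (const uint32_t *v, const uint32_t *amounts, uint32_t *out)
{
  int i;
  for (i = 0; i < TOY_NUNITS; i++)
    {
      assert (amounts[i] < 32);
      out[i] = v[i] << amounts[i];
    }
}
```

A constant or loop-invariant amount fits the first form; when only the second form is available, the scalar amount can be replicated into every lane first.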

Unlike the other binary operators, shifts/rotates have

the rhs being int, instead of the same type as the lhs,

so make sure the scalar is the right type if we are

dealing with vectors of long long/long/short/char.

Supportable by target?

Check only during analysis.

Worthwhile without SIMD support? Check only during analysis.

Transform.

Handle def.

Handle uses.

Vector shl and shr insn patterns can be defined with scalar

operand 2 (shift operand). In this case, use constant or loop

invariant op1 directly, without extending it to vector mode

first.

Store vec_oprnd1 for every vector stmt to be created

for SLP_NODE. We check during the analysis that all

the shift arguments are the same.

TODO: Allow different constants for different vector

stmts generated for an SLP instance.

vec_oprnd1 is available if operand 1 should be of a scalar-type

(a special case for certain kind of vector shifts); otherwise,

operand 1 should be of a vector type (the usual case).

Arguments are ready. Create the new vector stmt.

Referenced by vect_analyze_stmt().

|

static |

Function vectorizable_store.

Check if STMT defines a non-scalar data-ref (array/pointer/structure) that can be vectorized. If VEC_STMT is also passed, vectorize the STMT: create a vectorized stmt to replace it, put it in VEC_STMT, and insert it at GSI. Return FALSE if not a vectorizable STMT, TRUE otherwise.

Multiple types in SLP are handled by creating the appropriate number of vectorized stmts for each SLP node. Hence, NCOPIES is always 1 in case of SLP.

FORNOW. This restriction should be relaxed.

Is vectorizable store?

FORNOW. In some cases can vectorize even if data-type not supported (e.g. - array initialization with 0).

STMT is the leader of the group. Check the operands of all the

stmts of the group.

Transform.

FORNOW

We vectorize all the stmts of the interleaving group when we

reach the last stmt in the group.

VEC_NUM is the number of vect stmts to be created for this group.

Targets with store-lane instructions must not require explicit realignment.

In case the vectorization factor (VF) is bigger than the number of elements that we can fit in a vectype (nunits), we have to generate more than one vector stmt, i.e., we need to "unroll" the vector stmt by a factor VF/nunits. For more details see the documentation in vect_get_vec_def_for_stmt_copy.

In case of interleaving (non-unit grouped access):

S1: &base + 2 = x2

S2: &base = x0

S3: &base + 1 = x1

S4: &base + 3 = x3

We create vectorized stores starting from the base address (the access of the

first stmt in the chain, S2 in the above example) when the last store stmt

of the chain (S4) is reached:

VS1: &base = vx2

VS2: &base + vec_size*1 = vx0

VS3: &base + vec_size*2 = vx1

VS4: &base + vec_size*3 = vx3

Then permutation statements are generated:

VS5: vx5 = VEC_PERM_EXPR < vx0, vx3, {0, 8, 1, 9, 2, 10, 3, 11} >

VS6: vx6 = VEC_PERM_EXPR < vx0, vx3, {4, 12, 5, 13, 6, 14, 7, 15} >

...

And they are put in STMT_VINFO_VEC_STMT of the corresponding scalar stmts

(the order of the data-refs in the output of vect_permute_store_chain

corresponds to the order of scalar stmts in the interleaving chain - see

the documentation of vect_permute_store_chain()).
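
The selectors {0, 8, 1, 9, ...} and {4, 12, 5, 13, ...} shown above interleave the low and high halves of two 8-element vectors. The sketch below emulates that on plain arrays; the lane count is taken from the selectors in the example, while the `toy_` names and the array-based permute are assumptions for illustration.

```c
#include <assert.h>

enum { TOY_NUNITS = 8 };  /* Lanes per vector, matching the selectors above.  */

/* OUT[i] = concat (V0, V1)[SEL[i]], mimicking a two-input permute.  */
static void
toy_vec_perm (const int *v0, const int *v1, const unsigned int *sel, int *out)
{
  int i;
  for (i = 0; i < TOY_NUNITS; i++)
    {
      unsigned int idx = sel[i];
      assert (idx < 2 * TOY_NUNITS);
      out[i] = idx < TOY_NUNITS ? v0[idx] : v1[idx - TOY_NUNITS];
    }
}

/* Interleave V0 and V1: LOW pairs up their first halves, HIGH their
   second halves.  */
static void
toy_interleave_low_high (const int *v0, const int *v1, int *low, int *high)
{
  unsigned int sel_low[TOY_NUNITS];
  unsigned int sel_high[TOY_NUNITS];
  unsigned int i;

  for (i = 0; i < TOY_NUNITS / 2; i++)
    {
      sel_low[2 * i] = i;                       /* 0, 1, 2, 3 */
      sel_low[2 * i + 1] = TOY_NUNITS + i;      /* 8, 9, 10, 11 */
      sel_high[2 * i] = TOY_NUNITS / 2 + i;     /* 4, 5, 6, 7 */
      sel_high[2 * i + 1] = TOY_NUNITS + TOY_NUNITS / 2 + i;  /* 12..15 */
    }
  toy_vec_perm (v0, v1, sel_low, low);
  toy_vec_perm (v0, v1, sel_high, high);
}
```

Storing `low` followed by `high` writes the two input streams to memory in alternating element order.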

In case of both multiple types and interleaving, above vector stores and

permutation stmts are created for every copy. The result vector stmts are

put in STMT_VINFO_VEC_STMT for the first copy and in the corresponding

STMT_VINFO_RELATED_STMT for the next copies.

Get vectorized arguments for SLP_NODE.

For interleaved stores we collect vectorized defs for all the

stores in the group in DR_CHAIN and OPRNDS. DR_CHAIN is then

used as an input to vect_permute_store_chain(), and OPRNDS as

an input to vect_get_vec_def_for_stmt_copy() for the next copy.

If the store is not grouped, GROUP_SIZE is 1, and DR_CHAIN and

OPRNDS are of size 1.

Since gaps are not supported for interleaved stores,

GROUP_SIZE is the exact number of stmts in the chain.

Therefore, NEXT_STMT can't be NULL_TREE. In case that

there is no interleaving, GROUP_SIZE is 1, and only one

iteration of the loop will be executed.

We should have caught mismatched types earlier.

For interleaved stores we created vectorized defs for all the

defs stored in OPRNDS in the previous iteration (previous copy).

DR_CHAIN is then used as an input to vect_permute_store_chain(),

and OPRNDS as an input to vect_get_vec_def_for_stmt_copy() for the

next copy.

If the store is not grouped, GROUP_SIZE is 1, and DR_CHAIN and

OPRNDS are of size 1.

Combine all the vectors into an array.

Emit:

MEM_REF[...all elements...] = STORE_LANES (VEC_ARRAY).

Permute.

Bump the vector pointer.

For grouped stores vectorized defs are interleaved in

vect_permute_store_chain().

Arguments are ready. Create the new vector stmt.

Referenced by vect_analyze_stmt().

|

static |

ARRAY is an array of vectors created by create_vector_array. Emit code to store SSA_NAME VECT in index N of the array. The store is part of the vectorization of STMT.

Variable Documentation

| unsigned int current_vector_size |