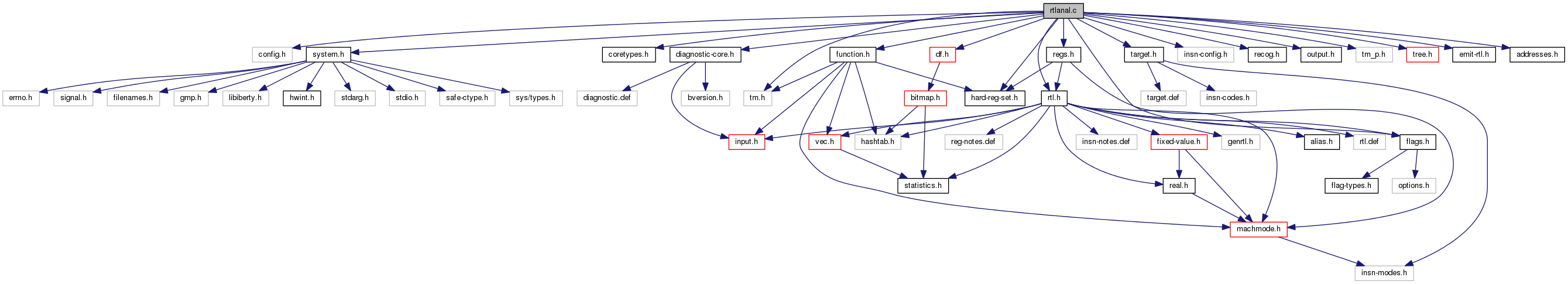

#include "config.h"
#include "system.h"
#include "coretypes.h"
#include "tm.h"
#include "diagnostic-core.h"
#include "hard-reg-set.h"
#include "rtl.h"
#include "insn-config.h"
#include "recog.h"
#include "target.h"
#include "output.h"
#include "tm_p.h"
#include "flags.h"
#include "regs.h"
#include "function.h"
#include "df.h"
#include "tree.h"
#include "emit-rtl.h"
#include "addresses.h"

Data Structures

| struct | set_of_data |

| struct | for_each_inc_dec_ops |

| struct | parms_set_data |

Macros

| #define | cached_num_sign_bit_copies sorry_i_am_preventing_exponential_behavior |

Variables

| static int | non_rtx_starting_operands [NUM_RTX_CODE] |

| static unsigned int | num_sign_bit_copies_in_rep [MAX_MODE_INT+1][MAX_MODE_INT+1] |

Macro Definition Documentation

| #define cached_num_sign_bit_copies sorry_i_am_preventing_exponential_behavior |

We let num_sign_bit_copies recur into nonzero_bits as that is useful. We don't let nonzero_bits recur into num_sign_bit_copies, because that is less useful. We can't allow both, because that results in exponential run time recursion. There is a nullstone testcase that triggered this. This macro avoids accidental uses of num_sign_bit_copies.

Function Documentation

| void add_int_reg_note | ( | ) |

| void add_reg_note | ( | ) |

Add register note with kind KIND and datum DATUM to INSN.

| void add_shallow_copy_of_reg_note | ( | ) |

Add a register note like NOTE to INSN.

| int address_cost | ( | ) |

Return the cost of address expression X. Expect that X is a properly formed address reference.

The SPEED parameter specifies whether costs optimized for speed or size should be returned.

We may be asked for cost of various unusual addresses, such as operands of push instruction. It is not worthwhile to complicate writing of the target hook by such cases.

| rtx alloc_reg_note | ( | ) |

Allocate a register note with kind KIND and datum DATUM. LIST is stored as the pointer to the next register note.

These types of register notes use an INSN_LIST rather than an EXPR_LIST, so that copying is done right and dumps look better.

References DF_REF_INSN, find_reg_equal_equiv_note(), gcc_assert, and remove_note().

| int auto_inc_p | ( | ) |

Return 1 if X is an autoincrement side effect and the register is not the stack pointer.

There are no REG_INC notes for SP.

|

static |

Evaluate the likelihood of X being a base or index value, returning positive if it is likely to be a base, negative if it is likely to be an index, and 0 if we can't tell. Make the magnitude of the return value reflect the amount of confidence we have in the answer.

MODE, AS, OUTER_CODE and INDEX_CODE are as for ok_for_base_p_1.

Believe *_POINTER unless the address shape requires otherwise.

X is a hard register. If it only fits one of the base or index classes, choose that interpretation.

|

static |

Return true if CODE applies some kind of scale. The scaled value is the first operand and the scale is the second.

Needed by ARM targets.

Referenced by init_num_sign_bit_copies_in_rep().

|

static |

The function cached_nonzero_bits is a wrapper around nonzero_bits1. It avoids exponential behavior in nonzero_bits1 when X has identical subexpressions on the first or the second level.

Try to find identical subexpressions. If found call nonzero_bits1 on X with the subexpressions as KNOWN_X and the precomputed value for the subexpression as KNOWN_RET.

Check the first level.

Check the second level.

|

static |

See the macro definition above. The function cached_num_sign_bit_copies is a wrapper around num_sign_bit_copies1. It avoids exponential behavior in num_sign_bit_copies1 when X has identical subexpressions on the first or the second level.

Try to find identical subexpressions. If found call num_sign_bit_copies1 on X with the subexpressions as KNOWN_X and the precomputed value for the subexpression as KNOWN_RET.

Check the first level.

Check the second level.

References floor_log2(), nonzero_bits(), and XEXP.

| rtx canonicalize_condition | ( | rtx insn, rtx cond, int reverse, rtx * earliest, rtx want_reg, int allow_cc_mode, int valid_at_insn_p | ) |

Given an insn INSN and condition COND, return the condition in a canonical form to simplify testing by callers. Specifically:

(1) The code will always be a comparison operation (EQ, NE, GT, etc.).

(2) Both operands will be machine operands; (cc0) will have been replaced.

(3) If an operand is a constant, it will be the second operand.

(4) (LE x const) will be replaced with (LT x <const+1>) and similarly for GE, GEU, and LEU.
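Rule (4) can be illustrated outside of RTL. The sketch below is not GCC code: `enum cmp_code`, `struct cond`, and `canonicalize_const_cmp` are hypothetical names, and a plain `long` stands in for a constant of the comparison's mode. It only shows the signed LE and GE cases and refuses to rewrite when adjusting the constant would overflow.

```c
#include <assert.h>
#include <limits.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-ins for RTL comparison codes (not GCC's enum rtx_code). */
enum cmp_code { CMP_LT, CMP_LE, CMP_GT, CMP_GE };

/* A comparison of some value against a constant right-hand side. */
struct cond {
  enum cmp_code code;
  long rhs;
};

/* Rewrite (LE x c) as (LT x c+1) and (GE x c) as (GT x c-1).
   Return false, leaving *C unchanged, if the adjusted constant
   would overflow; return true otherwise.  */
bool canonicalize_const_cmp(struct cond *c)
{
  assert(c != NULL);
  switch (c->code) {
  case CMP_LE:
    if (c->rhs == LONG_MAX)
      return false;
    c->code = CMP_LT;
    c->rhs += 1;
    return true;
  case CMP_GE:
    if (c->rhs == LONG_MIN)
      return false;
    c->code = CMP_GT;
    c->rhs -= 1;
    return true;
  default:
    /* LT and GT are already in the preferred form.  */
    return true;
  }
}
```

With this sketch, a condition `{ CMP_LE, 4 }` becomes `{ CMP_LT, 5 }`, while `{ CMP_LE, LONG_MAX }` is left alone because no larger constant exists.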

If the condition cannot be understood, or is an inequality floating-point comparison which needs to be reversed, 0 will be returned.

If REVERSE is nonzero, then reverse the condition prior to canonicalizing it.

If EARLIEST is nonzero, it is a pointer to a place where the earliest insn used in locating the condition was found. If a replacement test of the condition is desired, it should be placed in front of that insn and we will be sure that the inputs are still valid.

If WANT_REG is nonzero, we wish the condition to be relative to that register, if possible. Therefore, do not canonicalize the condition further. If ALLOW_CC_MODE is nonzero, allow the condition returned to be a compare to a CC mode register.

If VALID_AT_INSN_P, the condition must be valid at both *EARLIEST and at INSN.

If we are comparing a register with zero, see if the register is set in the previous insn to a COMPARE or a comparison operation. Perform the same tests as a function of STORE_FLAG_VALUE as find_comparison_args in cse.c.

Set nonzero when we find something of interest.

If this is a COMPARE, pick up the two things being compared.

Go back to the previous insn. Stop if it is not an INSN. We also stop if it isn't a single set or if it has a REG_INC note because we don't want to bother dealing with it.

In cfglayout mode, there do not have to be labels at the beginning of a block, or jumps at the end, so the previous conditions would not stop us when we reach bb boundary.

If this is setting OP0, get what it sets it to if it looks relevant.

??? We may not combine comparisons done in a CCmode with comparisons not done in a CCmode. This is to aid targets like Alpha that have an IEEE compliant EQ instruction, and a non-IEEE compliant BEQ instruction. The use of CCmode is actually artificial, simply to prevent the combination, but should not affect other platforms.

However, we must allow VOIDmode comparisons to match either CCmode or non-CCmode comparison, because some ports have modeless comparisons inside branch patterns.

??? This mode check should perhaps look more like the mode check in simplify_comparison in combine.

If this sets OP0, but not directly, we have to give up.

If the caller is expecting the condition to be valid at INSN, make sure X doesn't change before INSN.

If constant is first, put it last.

If OP0 is the result of a comparison, we weren't able to find what was really being compared, so fail.

Canonicalize any ordered comparison with integers involving equality if we can do computations in the relevant mode and we do not overflow.

When cross-compiling, const_val might be sign-extended from BITS_PER_WORD to HOST_BITS_PER_WIDE_INT.

Never return CC0; return zero instead.

| int commutative_operand_precedence | ( | ) |

Return a value indicating whether OP, an operand of a commutative operation, is preferred as the first or second operand. The higher the value, the stronger the preference for being the first operand. Negative values indicate a preference for the second operand and positive values a preference for the first operand.

Constants always come as the second operand. Prefer "nice" constants.

SUBREGs of objects should come second.

Complex expressions should be the first, so decrease priority of objects. Prefer pointer objects over non pointer objects.

Prefer operands that are themselves commutative to be first. This helps to make things linear. In particular, (and (and (reg) (reg)) (not (reg))) is canonical.

If only one operand is a binary expression, it will be the first operand. In particular, (plus (minus (reg) (reg)) (neg (reg))) is canonical, although it will usually be further simplified.

Then prefer NEG and NOT.
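The ordering described above can be sketched with a toy scoring function. Everything here is an assumption made for illustration: `enum operand_kind` is a hypothetical classification standing in for RTL codes, and the numeric scores are only chosen to respect the stated order (commutative, then binary, then NEG/NOT, then objects, with constants last). The operand with the higher score goes first.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical operand kinds; real RTL distinguishes many more cases.  */
enum operand_kind {
  OP_CONSTANT,
  OP_OBJECT,
  OP_OTHER,
  OP_NEG_OR_NOT,
  OP_BINARY,
  OP_COMMUTATIVE
};

/* Illustrative scores only: a higher score means a stronger preference
   for being the first operand.  */
int toy_operand_precedence(enum operand_kind kind)
{
  assert(kind >= OP_CONSTANT && kind <= OP_COMMUTATIVE);
  switch (kind) {
  case OP_COMMUTATIVE: return 4;   /* keeps chains of commutative ops linear */
  case OP_BINARY:      return 2;
  case OP_NEG_OR_NOT:  return 1;
  case OP_OBJECT:      return -1;  /* registers, memory: after complex exprs */
  case OP_CONSTANT:    return -8;  /* constants always come second */
  default:             return 0;
  }
}

/* Return true if the two operands of a commutative operation should be
   exchanged so that the higher-scoring one comes first.  */
bool toy_should_swap(enum operand_kind first, enum operand_kind second)
{
  return toy_operand_precedence(first) < toy_operand_precedence(second);
}
```

For example, `toy_should_swap(OP_CONSTANT, OP_OBJECT)` is true, which moves the constant into the second position.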

| int computed_jump_p | ( | ) |

Return nonzero if INSN is an indirect jump (aka computed jump).

Tablejumps and casesi insns are not considered indirect jumps; we can recognize them by a (use (label_ref)).

If we have a JUMP_LABEL set, we're not a computed jump.

|

static |

Referenced by replace_label().

|

static |

A subroutine of computed_jump_p, return 1 if X contains a REG or MEM or constant that is not in the constant pool and not in the condition of an IF_THEN_ELSE.

| bool constant_pool_constant_p | ( | ) |

Check whether this is a constant pool constant.

| int count_occurrences | ( | ) |

Return the number of places FIND appears within X. If COUNT_DEST is zero, we do not count occurrences inside the destination of a SET.

|

static |

Return TRUE iff DEST is a register or subreg of a register and doesn't change the number of words of the inner register, and any part of the register is TEST_REGNO.

References gcc_checking_assert, INSN_P, REG_NOTE_KIND, REG_NOTES, and XEXP.

|

static |

Like covers_regno_no_parallel_p, but also handles PARALLELs where any member matches the covers_regno_no_parallel_p criteria.

Some targets place small structures in registers for return values of functions, and those registers are wrapped in PARALLELs that we may see as the destination of a SET.

References END_REGNO, INSN_P, REG_NOTE_KIND, REG_NOTES, REG_P, REGNO, and XEXP.

| int dead_or_set_p | ( | ) |

Return nonzero if X's old contents don't survive after INSN. This will be true if X is (cc0) or if X is a register and X dies in INSN or because INSN entirely sets X.

"Entirely set" means set directly and not through a SUBREG, or ZERO_EXTRACT, so no trace of the old contents remains. Likewise, REG_INC does not count.

REG may be a hard or pseudo reg. Renumbering is not taken into account, but for this use that makes no difference, since regs don't overlap during their lifetimes. Therefore, this function may be used at any time after deaths have been computed.

If REG is a hard reg that occupies multiple machine registers, this function will only return 1 if each of those registers will be replaced by INSN.

Can't use cc0_rtx below since this file is used by genattrtab.c.

| int dead_or_set_regno_p | ( | ) |

Utility function for dead_or_set_p to check an individual register.

See if there is a death note for something that includes TEST_REGNO.

If a COND_EXEC is not executed, the value survives.

References GET_CODE, INSN_P, multiple_sets(), NULL, PATTERN, REG_NOTE_KIND, REG_NOTES, and XEXP.

| void decompose_address | ( | struct address_info * info, rtx * loc, enum machine_mode mode, addr_space_t as, enum rtx_code outer_code | ) |

Describe address *LOC in *INFO. MODE is the mode of the addressed value, or VOIDmode if not known. AS is the address space associated with LOC. OUTER_CODE is MEM if *LOC is a MEM address and ADDRESS otherwise.

Referenced by lsb_bitfield_op_p().

|

static |

INFO->INNER describes a {PRE,POST}_MODIFY address. Set up the rest of INFO accordingly.

|

static |

INFO->INNER describes a {PRE,POST}_{INC,DEC} address. Set up the rest of INFO accordingly.

These addresses are only valid when the size of the addressed value is known.

| void decompose_lea_address | ( | ) |

Describe address operand LOC in INFO.

| void decompose_mem_address | ( | ) |

Describe the address of MEM X in INFO.

|

static |

INFO->INNER describes a normal, non-automodified address. Fill in the rest of INFO accordingly.

Treat the address as the sum of up to four values.

If there is more than one component, any base component is in a PLUS.

Try to classify each sum operand now. Leave those that could be either a base or an index in OPS.

The only other possibilities are a base or an index.

Classify the remaining OPS members as bases and indexes.

If we haven't seen a base or an index yet, assume that this is the base. If we were confident that another term was the base or index, treat the remaining operand as the other kind.

In the event of a tie, assume the base comes first.

| int default_address_cost | ( | ) |

If the target doesn't override, compute the cost as with arithmetic.

|

static |

Treat *LOC as a tree of PLUS operands and store pointers to the summed values in [PTR, END). Return a pointer to the end of the used array.
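A minimal sketch of this flattening, under simplifying assumptions: `struct expr` and `collect_plus_operands` are hypothetical, and the array holds pointers to the leaf nodes themselves rather than pointers to the locations that contain them. The half-open range convention [PTR, END) and the "return the end of the used part" contract follow the description above.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical expression node: either a PLUS of two operands or a leaf.  */
enum kind { K_PLUS, K_LEAF };

struct expr {
  enum kind kind;
  struct expr *op[2];   /* used only when kind == K_PLUS */
};

/* Treat X as a tree of PLUS nodes and store its non-PLUS leaves, left to
   right, in [PTR, END).  Return a pointer just past the last slot used.  */
struct expr **collect_plus_operands(struct expr *x,
                                    struct expr **ptr, struct expr **end)
{
  if (x->kind == K_PLUS) {
    ptr = collect_plus_operands(x->op[0], ptr, end);
    ptr = collect_plus_operands(x->op[1], ptr, end);
  } else {
    assert(ptr != end);   /* the caller must supply enough room */
    *ptr++ = x;
  }
  return ptr;
}
```

Flattening `a + (b + c)` into a four-slot array fills three slots with `a`, `b`, `c` in that order; the returned pointer tells the caller how many summands were found.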

| void find_all_hard_reg_sets | ( | ) |

Examine INSN, and compute the set of hard registers written by it. Store it in *PSET. Should only be called after reload.

References find_reg_note(), NULL, SET_DEST, and side_effects_p().

| rtx find_constant_src | ( | ) |

Check whether INSN is a single_set whose source is known to be equivalent to a constant. Return that constant if so, otherwise return null.

References alloc_EXPR_LIST(), alloc_INSN_LIST(), gcc_checking_assert, int_reg_note_p(), and PUT_REG_NOTE_KIND.

| rtx find_first_parameter_load | ( | ) |

Look backward for first parameter to be loaded. Note that loads of all parameters will not necessarily be found if CSE has eliminated some of them (e.g., an argument to the outer function is passed down as a parameter). Do not skip BOUNDARY.

Since different machines initialize their parameter registers in different orders, assume nothing. Collect the set of all parameter registers.

We only care about registers which can hold function arguments.

Search backward for the first set of a register in this set.

It is possible that some loads got CSEed from one call to another. Stop in that case.

Our caller must either ensure that we will find all sets (in case the code has not been optimized yet), or account for possible labels by setting BOUNDARY to the preceding CODE_LABEL.

If we found something that did not set a parameter reg, we're done. Do not keep going, as that might result in hoisting an insn before the setting of a pseudo that is used by the hoisted insn.

References rtx_cost(), full_rtx_costs::size, and full_rtx_costs::speed.

| rtx find_last_value | ( | ) |

Return the last thing that X was assigned from before *PINSN. If VALID_TO is not NULL_RTX then verify that the object is not modified up to VALID_TO. If the object was modified, if we hit a partial assignment to X, or hit a CODE_LABEL first, return X. If we found an assignment, update *PINSN to point to it. ALLOW_HWREG is set to 1 if hardware registers are allowed to be the src.

Reject hard registers because we don't usually want to use them; we'd rather use a pseudo.

If set in non-simple way, we don't have a value.

| rtx find_reg_equal_equiv_note | ( | ) |

Return a REG_EQUIV or REG_EQUAL note if insn has only a single set and has such a note.

FIXME: We should never have REG_EQUAL/REG_EQUIV notes on insns that have multiple sets. Checking single_set to make sure of this is not the proper check, as explained in the comment in set_unique_reg_note.

This should be changed into an assert.

References END_HARD_REGNO, GET_CODE, REG_P, REGNO, and XEXP.

| int find_reg_fusage | ( | ) |

Return true if DATUM, or any overlap of DATUM, of kind CODE is found in the CALL_INSN_FUNCTION_USAGE information of INSN.

If it's not a CALL_INSN, it can't possibly have a CALL_INSN_FUNCTION_USAGE field, so don't bother checking.

CALL_INSN_FUNCTION_USAGE information cannot contain references to pseudo registers, so don't bother checking.

| rtx find_reg_note | ( | ) |

Return the reg-note of kind KIND in insn INSN, if there is one. If DATUM is nonzero, look for one whose datum is DATUM.

Ignore anything that is not an INSN, JUMP_INSN or CALL_INSN.

References CALL_INSN_FUNCTION_USAGE, CALL_P, gcc_assert, GET_CODE, REG_P, REGNO, rtx_equal_p(), and XEXP.

| int find_regno_fusage | ( | ) |

Return true if REGNO, or any overlap of REGNO, of kind CODE is found in the CALL_INSN_FUNCTION_USAGE information of INSN.

CALL_INSN_FUNCTION_USAGE information cannot contain references to pseudo registers, so don't bother checking.

| rtx find_regno_note | ( | ) |

Return the reg-note of kind KIND in insn INSN which applies to register number REGNO, if any. Return 0 if there is no such reg-note. Note that the REGNO of this NOTE need not be REGNO if REGNO is a hard register; it might be the case that the note overlaps REGNO.

Ignore anything that is not an INSN, JUMP_INSN or CALL_INSN.

Verify that it is a register, so that scratch and MEM won't cause a problem here.

References END_HARD_REGNO, and find_regno_fusage().

| int for_each_inc_dec | ( | rtx * x, for_each_inc_dec_fn fn, void * arg | ) |

Traverse *X looking for MEMs, and for autoinc operations within them. For each such autoinc operation found, call FN, passing it the innermost enclosing MEM, the operation itself, the RTX modified by the operation, two RTXs (the second may be NULL) that, once added, represent the value to be held by the modified RTX afterwards, and ARG. FN is to return -1 to skip looking for other autoinc operations within the visited operation, 0 to continue the traversal, or any other value to have it returned to the caller of for_each_inc_dec.

|

static |

Find PRE/POST-INC/DEC/MODIFY operations within *R, extract the operands of the equivalent add insn and pass the result to the operator specified by *D.

|

static |

|

static |

If *R is a MEM, find PRE/POST-INC/DEC/MODIFY operations within its address, extract the operands of the equivalent add insn and pass the result to the operator specified by *D.

| int for_each_rtx | ( | ) |

Traverse X via depth-first search, calling F for each sub-expression (including X itself). F is also passed the DATA. If F returns -1, do not traverse sub-expressions, but continue traversing the rest of the tree. If F ever returns any other nonzero value, stop the traversal, and return the value returned by F. Otherwise, return 0. This function does not traverse inside tree structure that contains RTX_EXPRs, or into sub-expressions whose format code is '0' since it is not known whether or not those codes are actually RTL.
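The callback protocol (-1 to prune, 0 to continue, anything else to stop) can be sketched on a plain binary tree. This is not the GCC implementation: `struct node`, `for_each_node`, and the sample callback `count_prune_or_stop` are hypothetical names used only to show the three return-value cases.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical tree node standing in for an rtx.  */
struct node {
  int value;
  struct node *kid[2];
};

typedef int (*visit_fn)(struct node *x, void *data);

/* Depth-first traversal: call F on X, then on X's children.
   F returning -1 skips X's children but continues elsewhere;
   any other nonzero value stops the traversal and is returned.  */
int for_each_node(struct node *x, visit_fn f, void *data)
{
  assert(f != NULL);
  if (x == NULL)
    return 0;

  int result = f(x, data);
  if (result == -1)
    return 0;           /* do not traverse sub-expressions */
  if (result != 0)
    return result;      /* stop the traversal */

  for (int i = 0; i < 2; i++) {
    result = for_each_node(x->kid[i], f, data);
    if (result != 0)
      return result;
  }
  return 0;
}

/* Sample callback: count visited nodes in *DATA, prune below negative
   values, and stop with 99 when a node holding 99 is seen.  */
int count_prune_or_stop(struct node *x, void *data)
{
  ++*(int *)data;
  if (x->value < 0)
    return -1;
  if (x->value == 99)
    return 99;
  return 0;
}
```

Note that a pruning return of -1 is absorbed by the traversal and never reaches the outer caller; only the "stop" values propagate.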

This routine is very general, and could (should?) be used to implement many of the other routines in this file.

Call F on X.

Do not traverse sub-expressions.

Stop the traversal.

There are no sub-expressions.

References avoid_constant_pool_reference(), GET_CODE, GET_RTX_CLASS, OBJECT_P, RTX_CONST_OBJ, RTX_EXTRA, and SUBREG_REG.

|

static |

Optimized loop of for_each_rtx, trying to avoid useless recursive calls. Processes the subexpressions of EXP and passes them to F.

Call F on X.

Do not traverse sub-expressions.

Stop the traversal.

There are no sub-expressions.

Call F on X.

Do not traverse sub-expressions.

Stop the traversal.

There are no sub-expressions.

Nothing to do.

Referenced by tablejump_p().

| enum machine_mode get_address_mode | ( | ) |

Return the mode of MEM's address.

|

static |

If *INNER can be interpreted as a base, return a pointer to the inner term (see address_info). Return null otherwise.

| rtx get_call_rtx_from | ( | ) |

Return the CALL in X if there is one.

References CONST_INT_P, GET_CODE, and XEXP.

| rtx get_condition | ( | ) |

Given a jump insn JUMP, return the condition that will cause it to branch to its JUMP_LABEL. If the condition cannot be understood, or is an inequality floating-point comparison which needs to be reversed, 0 will be returned.

If EARLIEST is nonzero, it is a pointer to a place where the earliest insn used in locating the condition was found. If a replacement test of the condition is desired, it should be placed in front of that insn and we will be sure that the inputs are still valid. If EARLIEST is null, the returned condition will be valid at INSN.

If ALLOW_CC_MODE is nonzero, allow the condition returned to be a compare to a CC mode register.

VALID_AT_INSN_P is the same as for canonicalize_condition.

If this is not a standard conditional jump, we can't parse it.

If this branches to JUMP_LABEL when the condition is false, reverse the condition.

| void get_full_rtx_cost | ( | rtx x, enum rtx_code outer, int opno, struct full_rtx_costs * c | ) |

Fill in the structure C with information about both speed and size rtx costs for X, which is operand OPNO in an expression with code OUTER.

Referenced by init_costs_to_zero().

| enum rtx_code get_index_code | ( | ) |

Return the "index code" of INFO, in the form required by ok_for_base_p_1.

| HOST_WIDE_INT get_index_scale | ( | ) |

Return the scale applied to *INFO->INDEX_TERM, or 0 if the index is more complicated than that.

|

static |

If *INNER can be interpreted as an index, return a pointer to the inner term (see address_info). Return null otherwise.

At present, only constant scales are allowed.

| HOST_WIDE_INT get_integer_term | ( | ) |

Return the value of the integer term in X, if one is apparent; otherwise return 0. Only obvious integer terms are detected. This is used in cse.c with the 'related_value' field.

References CONSTANT_POOL_ADDRESS_P, GET_CODE, GET_MODE_SIZE, get_pool_mode(), int_size_in_bytes(), SYMBOL_REF_DECL, and TREE_TYPE.

| rtx get_related_value | ( | ) |

If X is a constant, return the value sans apparent integer term; otherwise return 0. Only obvious integer terms are detected.

| int in_expr_list_p | ( | ) |

Search LISTP (an EXPR_LIST) for an entry whose first operand is NODE and return 1 if it is found. A simple equality test is used to determine if NODE matches.

| int inequality_comparisons_p | ( | ) |

Return nonzero if X contains a comparison that is not either EQ or NE, i.e., an inequality.

|

static |

Initialize the table NUM_SIGN_BIT_COPIES_IN_REP based on TARGET_MODE_REP_EXTENDED.

Note that we assume that the property of TARGET_MODE_REP_EXTENDED(B, C) is sticky to the integral modes narrower than mode B. I.e., if A is a mode narrower than B then in order to be able to operate on it in mode B, mode A needs to satisfy the requirements set by the representation of mode B.

Currently, it is assumed that TARGET_MODE_REP_EXTENDED extends to the next widest mode.

We are in in_mode. Count how many bits outside of mode have to be copies of the sign-bit.

We can only check sign-bit copies starting from the top-bit. In order to be able to check the bits we have already seen we pretend that subsequent bits have to be sign-bit copies too.

References binary_scale_code_p(), CONSTANT_P, GET_CODE, MEM_P, REG_P, strip_address_mutations(), and XEXP.

| void init_rtlanal | ( | void | ) |

Initialize non_rtx_starting_operands, which is used to speed up for_each_rtx.

| int insn_rtx_cost | ( | ) |

Calculate the rtx_cost of a single instruction. A return value of zero indicates an instruction pattern without a known cost.

Extract the single set rtx from the instruction pattern. We can't use single_set since we only have the pattern.

|

static |

Return true if KIND is an integer REG_NOTE.

Referenced by find_constant_src().

| bool keep_with_call_p | ( | ) |

Return true if we should avoid inserting code between INSN and the preceding call instruction.

There may be a stack pop just after the call and before the store of the return register. Search for the actual store when deciding if we can break or not.

This CONST_CAST is okay because next_nonnote_insn just returns its argument and we assign it to a const_rtx variable.

| bool label_is_jump_target_p | ( | ) |

Return true if LABEL is a target of JUMP_INSN. This applies only to non-complex jumps. That is, direct unconditional, conditional, and tablejumps, but not computed jumps or returns. It also does not apply to the fallthru case of a conditional jump.

| int loc_mentioned_in_p | ( | ) |

Return nonzero if IN contains a piece of rtl that has the address LOC.

| int low_bitmask_len | ( | ) |

If M is a bitmask that selects a field of low-order bits within an item but not the entire word, return the length of the field. Return -1 otherwise. M is used in machine mode MODE.
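A minimal sketch of the mask test, assuming a fixed 64-bit word and ignoring the MODE argument entirely; `low_bitmask_len64` is a hypothetical name. A mask of contiguous low-order ones has the form 2^k - 1, so adding one must give a power of two, and an all-ones word (which wraps to zero) is rejected.

```c
#include <assert.h>
#include <stdint.h>

/* If M has the form 2^k - 1 with k < 64, return k; otherwise return -1.
   This ignores machine modes and always works on 64-bit words.  */
int low_bitmask_len64(uint64_t m)
{
  uint64_t n = m + 1;   /* wraps to 0 when M selects the entire word */

  /* Reject the full-word mask and anything that is not 2^k - 1.  */
  if (n == 0 || (n & (n - 1)) != 0)
    return -1;

  /* N is now a power of two; its exponent is the field length.  */
  int len = 0;
  while ((n >>= 1) != 0)
    len++;

  assert(len >= 0 && len < 64);
  return len;
}
```

Under these assumptions `0xFF` gives 8, `0xF0` gives -1 because the field does not start at bit 0, and an all-ones word gives -1.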

|

static |

Return true if X is a sign_extract or zero_extract from the least significant bit.

References address_info::addr_outer_code, address_info::as, decompose_address(), address_info::mode, and address_info::outer.

| int may_trap_or_fault_p | ( | ) |

Same as may_trap_p, but additionally return nonzero if evaluating rtx X might cause a fault. We define a fault for the purpose of this function as an erroneous execution condition that cannot be encountered during the normal execution of a valid program; the typical example is an unaligned memory access on a strict alignment machine. The compiler guarantees that it doesn't generate code that will fault from a valid program, but this guarantee doesn't mean anything for individual instructions. Consider the following example:

    struct S { int d; union { char *cp; int *ip; }; };

    int foo(struct S *s)
    {
      if (s->d == 1)
        return *s->ip;
      else
        return *s->cp;
    }

on a strict alignment machine. In a valid program, foo will never be invoked on a structure for which d is equal to 1 and the underlying unique field of the union not aligned on a 4-byte boundary, but the expression *s->ip might cause a fault if considered individually.

At the RTL level, potentially problematic expressions will almost always verify may_trap_p; for example, the above dereference can be emitted as (mem:SI (reg:P)) and this expression is may_trap_p for a generic register. However, suppose that foo is inlined in a caller that causes s->cp to point to a local character variable and guarantees that s->d is not set to 1; foo may have been effectively translated into pseudo-RTL as:

    if ((reg:SI) == 1)
      (set (reg:SI) (mem:SI (fp - 7)))
    else
      (set (reg:QI) (mem:QI (fp - 7)))

Now (mem:SI (fp - 7)) is considered as not may_trap_p since it is a memory reference to a stack slot, but it will certainly cause a fault on a strict alignment machine.

| int may_trap_p | ( | ) |

Return nonzero if evaluating rtx X might cause a trap.

References gcc_assert, GET_MODE, simplify_unary_operation(), and XEXP.

| int may_trap_p_1 | ( | ) |

Return nonzero if evaluating rtx X might cause a trap. FLAGS controls how to consider MEMs. A nonzero value means the context of the access may have changed from the original, such that the address may have become invalid.

We make no distinction currently, but this function is part of the internal target-hooks ABI so we keep the parameter as "unsigned flags".

Handle these cases quickly.

Memory ref can trap unless it's a static var or a stack slot.

Recognize specific pattern of stack checking probes.

Division by a non-constant might trap.

An EXPR_LIST is used to represent a function call. This certainly may trap.

Some floating point comparisons may trap.

??? There is no machine independent way to check for tests that trap when COMPARE is used, though many targets do make this distinction. For instance, sparc uses CCFPE for compares which generate exceptions and CCFP for compares which do not generate exceptions.

But often the compare has some CC mode, so check operand modes as well.

Often comparison is CC mode, so check operand modes.

Conversion of floating point might trap.

These operations don't trap even with floating point.

Any floating arithmetic may trap.

| int modified_between_p | ( | ) |

Similar to reg_set_between_p, but check all registers in X. Return 0 only if none of them are modified between START and END. Return 1 if X contains a MEM; this routine does use memory aliasing.

| int modified_in_p | ( | ) |

Similar to reg_set_p, but check all registers in X. Return 0 only if none of them are modified in INSN. Return 1 if X contains a MEM; this routine does use memory aliasing.

| int multiple_sets | ( | ) |

Given an INSN, return nonzero if it has more than one SET, else return zero.

INSN must be an insn.

Only a PARALLEL can have multiple SETs.

If we have already found a SET, then return now.

Either zero or one SET.

References COND_EXEC_CODE, find_reg_note(), GET_CODE, INSN_CODE, NOOP_MOVE_INSN_CODE, NULL_RTX, PATTERN, SET, set_noop_p(), XVECEXP, and XVECLEN.

| int no_labels_between_p | ( | ) |

Return 1 if in between BEG and END, exclusive of BEG and END, there is no CODE_LABEL insn.
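The exclusive-range scan can be sketched on a toy instruction chain. `struct insn`, `enum insn_kind`, and `no_labels_between` are hypothetical stand-ins, not GCC types, and returning 0 when BEG and END are the same insn is a choice made by this sketch.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical instruction kinds and a singly linked insn chain.  */
enum insn_kind { INSN_PLAIN, INSN_CODE_LABEL };

struct insn {
  enum insn_kind kind;
  struct insn *next;
};

/* Return 1 if no label appears strictly between BEG and END.
   END must be reachable from BEG by following next pointers.  */
int no_labels_between(const struct insn *beg, const struct insn *end)
{
  assert(beg != NULL);
  if (beg == end)
    return 0;   /* sketch-specific choice for an empty, degenerate range */

  for (const struct insn *p = beg->next; p != end; p = p->next) {
    assert(p != NULL);  /* ran off the chain: END was not reachable */
    if (p->kind == INSN_CODE_LABEL)
      return 0;
  }
  return 1;
}
```

Because both endpoints are excluded, a range that starts or ends at a label still reports 1 as long as no other label lies inside it.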

| bool nonzero_address_p | ( | ) |

Return true if X is an address that is known to not be zero.

As in rtx_varies_p, we have to use the actual rtx, not reg number.

All of the virtual frame registers are stack references.

Handle PIC references.

Similar to the above; allow positive offsets. Further, since auto-inc is only allowed in memories, the register must be a pointer.

Similarly. Further, the offset is always positive.

If it isn't one of the cases above, it might be zero.

| unsigned HOST_WIDE_INT nonzero_bits | ( | ) |

|

static |

Given an expression, X, compute which bits in X can be nonzero. We don't care about bits outside of those defined in MODE.

For most X this is simply GET_MODE_MASK (GET_MODE (MODE)), but if X is an arithmetic operation, we can do better.

For floating-point and vector values, assume all bits are needed.

If X is wider than MODE, use its mode instead.

Our only callers in this case look for single bit values. So just return the mode mask. Those tests will then be false.

If MODE is wider than X, but both are a single word for both the host and target machines, we can compute this from which bits of the object might be nonzero in its own mode, taking into account the fact that on many CISC machines, accessing an object in a wider mode causes the high-order bits to become undefined. So they are not known to be zero.

Include declared information about alignment of pointers.

??? We don't properly preserve REG_POINTER changes across

pointer-to-integer casts, so we can't trust it except for things that we know must be pointers. See execute/960116-1.c.

If this produces an integer result, we know which bits are set. Code here used to clear bits outside the mode of X, but that is now done above.

Mind that MODE is the mode the caller wants to look at this operation in, and not the actual operation mode. We can wind up with (subreg:DI (gt:V4HI x y)), and we don't have anything that describes the results of a vector compare.

If the sign bit is known clear, this is the same as ZERO_EXTEND. Otherwise, show all the bits in the outer mode but not the inner may be nonzero.

Don't call nonzero_bits for the second time if it cannot change anything.

We can apply the rules of arithmetic to compute the number of high- and low-order zero bits of these operations. We start by computing the width (position of the highest-order nonzero bit) and the number of low-order zero bits for each value.

If this is a SUBREG formed for a promoted variable that has been zero-extended, we know that at least the high-order bits are zero, though others might be too.

If the inner mode is a single word for both the host and target machines, we can compute this from which bits of the inner object might be nonzero.

On many CISC machines, accessing an object in a wider mode causes the high-order bits to become undefined. So they are not known to be zero.

The nonzero bits are in two classes: any bits within MODE that aren't in GET_MODE (x) are always significant. The rest of the nonzero bits are those that are significant in the operand of the shift when shifted the appropriate number of bits. This shows that high-order bits are cleared by the right shift and low-order bits by left shifts.

If the sign bit may have been nonzero before the shift, we need to mark all the places it could have been copied to by the shift as possibly nonzero.

This is at most the number of bits in the mode.

If CLZ has a known value at zero, then the nonzero bits are that value, plus the number of bits in the mode minus one.

If CTZ has a known value at zero, then the nonzero bits are that value, plus the number of bits in the mode minus one.

This is at most the number of bits in the mode minus 1.

Don't call nonzero_bits for the second time if it cannot change anything.
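The shift rules described above can be illustrated on plain 64-bit masks. This is only a sketch: the helper names (`mode_mask`, `nz_lshiftrt`, `nz_ashift`, `nz_ashiftrt`) are hypothetical and not part of GCC, a mode is modeled as a bit width, and `nz` is the mask of bits that may be nonzero in the shift operand.

```c
#include <assert.h>
#include <stdint.h>

/* Mask with the low WIDTH bits set (1 <= WIDTH <= 64). */
static uint64_t mode_mask(unsigned width)
{
    assert(width >= 1 && width <= 64);
    return width == 64 ? UINT64_MAX : ((UINT64_C(1) << width) - 1);
}

/* Logical right shift: high-order bits are cleared. */
uint64_t nz_lshiftrt(uint64_t nz, unsigned width, unsigned count)
{
    assert(count < width);
    return (nz & mode_mask(width)) >> count;
}

/* Left shift: low-order bits are cleared. */
uint64_t nz_ashift(uint64_t nz, unsigned width, unsigned count)
{
    assert(count < width);
    return (nz << count) & mode_mask(width);
}

/* Arithmetic right shift: if the sign bit may have been nonzero,
   every position it could be copied into is marked as possibly nonzero. */
uint64_t nz_ashiftrt(uint64_t nz, unsigned width, unsigned count)
{
    assert(count < width);
    uint64_t mask = mode_mask(width);
    uint64_t result = (nz & mask) >> count;
    uint64_t sign = UINT64_C(1) << (width - 1);

    if (nz & sign)
        result |= mask & ~(mask >> count);  /* the top COUNT bits */
    return result;
}
```

For an 8-bit mode, an operand whose only possibly-nonzero bit is the sign bit (0x80) shifted arithmetically right by 3 may have bits 7 through 4 set.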

| int noop_move_p | ( | ) |

Return nonzero if an insn consists only of SETs, each of which only sets a value to itself.

Insns carrying these notes are useful later on.

Check the code to be executed for COND_EXEC.

If nothing but SETs of registers to themselves, this insn can also be deleted.

Call FUN on each register or MEM that is stored into or clobbered by X. (X would be the pattern of an insn). DATA is an arbitrary pointer, ignored by note_stores, but passed to FUN.

FUN receives three arguments:

- the REG, MEM, CC0 or PC being stored in or clobbered,

- the SET or CLOBBER rtx that does the store,

- the pointer DATA provided to note_stores.

If the item being stored in or clobbered is a SUBREG of a hard register, the SUBREG will be passed.

If we have a PARALLEL, SET_DEST is a list of EXPR_LIST expressions, each of whose first operand is a register.
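The callback protocol of note_stores can be sketched with a toy expression type. Everything below is an assumption made for illustration: `toy_rtx`, `toy_note_stores` and `record_store` are hypothetical stand-ins, not GCC's rtx or its real implementation, and destinations are reduced to small register numbers.

```c
#include <assert.h>
#include <stddef.h>

/* Toy stand-ins for rtx codes; not GCC's real definitions. */
enum toy_code { TOY_SET, TOY_CLOBBER, TOY_PARALLEL, TOY_USE };

struct toy_rtx {
    enum toy_code code;
    int dest;                     /* register stored by a SET or CLOBBER */
    const struct toy_rtx *elems;  /* elements of a PARALLEL */
    size_t n_elems;
};

/* FUN receives the destination, the SET or CLOBBER doing the store,
   and the caller's DATA pointer. */
typedef void (*store_fn)(int dest, const struct toy_rtx *setter, void *data);

/* Call FUN on every destination stored into or clobbered by X. */
void toy_note_stores(const struct toy_rtx *x, store_fn fun, void *data)
{
    assert(x != NULL && fun != NULL);
    if (x->code == TOY_SET || x->code == TOY_CLOBBER)
        fun(x->dest, x, data);
    else if (x->code == TOY_PARALLEL)
        for (size_t i = 0; i < x->n_elems; i++)
            toy_note_stores(&x->elems[i], fun, data);
    /* All other codes never store. */
}

/* Example callback: record each stored register in a bitmask passed via DATA. */
void record_store(int dest, const struct toy_rtx *setter, void *data)
{
    (void)setter;
    assert(dest >= 0 && dest < 32);
    *(unsigned *)data |= 1u << dest;
}
```

DATA is opaque to the walker; only the callback interprets it, which is why a single walker can serve many different analyses.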

References GET_CODE, MEM_P, SET_DEST, SET_SRC, and XEXP.

Referenced by df_simulate_one_insn_forwards(), expand_copysign(), get_stored_val(), mark_nonreg_stores_1(), memref_used_between_p(), notice_stack_pointer_modification(), reg_overlap_mentioned_p(), save_call_clobbered_regs(), set_paradoxical_subreg(), and spill_hard_reg().

Like note_stores, but call FUN for each expression that is being referenced in PBODY, a pointer to the PATTERN of an insn. We only call FUN for each expression, not any interior subexpressions. FUN receives a pointer to the expression and the DATA passed to this function.

Note that this is not quite the same test as that done in reg_referenced_p since that considers something as being referenced if it is being partially set, while we do not.

For sets we replace everything in source plus registers in memory expression in store and operands of a ZERO_EXTRACT.

All the other possibilities never store.

References dead_or_set_regno_p(), END_REGNO, gcc_assert, GET_CODE, REG_P, and REGNO.

Referenced by find_implicit_sets().

| unsigned int num_sign_bit_copies | ( | ) |

|

static |

Return the number of bits at the high-order end of X that are known to be equal to the sign bit. X will be used in mode MODE; if MODE is VOIDmode, X will be used in its own mode. The returned value will always be between 1 and the number of bits in MODE.

If we weren't given a mode, use the mode of X. If the mode is still VOIDmode, we don't know anything. Likewise if one of the modes is floating-point.

For a smaller object, just ignore the high bits.

If this machine does not do all register operations on the entire register and MODE is wider than the mode of X, we can say nothing at all about the high-order bits.

Else, use nonzero_bits to guess num_sign_bit_copies (see below).

If the constant is negative, take its 1's complement and remask. Then see how many zero bits we have.

If this is a SUBREG for a promoted object that is sign-extended and we are looking at it in a wider mode, we know that at least the high-order bits are known to be sign bit copies.

For a smaller object, just ignore the high bits.

If we are rotating left by a number of bits less than the number of sign bit copies, we can just subtract that amount from the number.

In general, this subtracts one sign bit copy. But if the value is known to be positive, the number of sign bit copies is the same as that of the input. Finally, if the input has just one bit that might be nonzero, all the bits are copies of the sign bit.

Logical operations will preserve the number of sign-bit copies. MIN and MAX operations always return one of the operands.

If num1 is clearing some of the top bits then regardless of the other term, we are guaranteed to have at least that many high-order zero bits.

Similarly for IOR when setting high-order bits.

For addition and subtraction, we can have a 1-bit carry. However, if we are subtracting 1 from a positive number, there will not be such a carry. Furthermore, if the positive number is known to be 0 or 1, we know the result is either -1 or 0.

The number of bits of the product is the sum of the number of bits of both terms. However, unless one of the terms is known to be positive, we must allow for an additional bit since negating a negative number can remove one sign bit copy.

The result must be <= the first operand. If the first operand has the high bit set, we know nothing about the number of sign bit copies.

The result must be <= the second operand. If the second operand has (or just might have) the high bit set, we know nothing about the number of sign bit copies.

Similar to unsigned division, except that we have to worry about the case where the divisor is negative, in which case we have to add 1.

Shifts by a constant add to the number of bits equal to the sign bit.

Left shifts destroy copies.

If the constant is negative, take its 1's complement and remask. Then see how many zero bits we have.

If we haven't been able to figure it out by one of the above rules, see if some of the high-order bits are known to be zero. If so, count those bits and return one less than that amount. If we can't safely compute the mask for this mode, always return BITWIDTH.
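Two of the rules above, the constant case and the addition case, can be sketched on plain integers. The function names are hypothetical and a mode is modeled as a bit width; this illustrates the counting rule only and is not the GCC implementation.

```c
#include <assert.h>
#include <stdint.h>

/* Count the high-order bits of VALUE, viewed as a WIDTH-bit quantity,
   that are equal to its sign bit.  The result is between 1 and WIDTH. */
unsigned sign_bit_copies_of_constant(uint64_t value, unsigned width)
{
    assert(width >= 1 && width <= 64);
    uint64_t mask = width == 64 ? UINT64_MAX : (UINT64_C(1) << width) - 1;
    uint64_t sign = UINT64_C(1) << (width - 1);

    value &= mask;
    /* If the constant is negative, take its one's complement and remask. */
    if (value & sign)
        value = ~value & mask;

    /* Then see how many high-order zero bits we have. */
    unsigned copies = 0;
    for (uint64_t bit = sign; bit != 0 && (value & bit) == 0; bit >>= 1)
        copies++;
    return copies;
}

/* Addition and subtraction: a 1-bit carry can consume one sign bit copy,
   but at least one copy (the sign bit itself) always remains. */
unsigned sign_bit_copies_after_add(unsigned num0, unsigned num1)
{
    unsigned least = num0 < num1 ? num0 : num1;
    return least > 1 ? least - 1 : 1;
}
```

In an 8-bit mode, the constant 0xF0 (that is, -16) has four sign bit copies, while 0x7F has only one.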

| bool offset_within_block_p | ( | ) |

Return true if SYMBOL is a SYMBOL_REF and OFFSET + SYMBOL points to somewhere in the same object or object_block as SYMBOL.

References CONST_INT_P, GET_CODE, and XEXP.

|

static |

Helper function for noticing stores to parameter registers.

| void record_hard_reg_sets | ( | ) |

| void record_hard_reg_uses | ( | ) |

Like record_hard_reg_sets, but called through note_uses.

References set_of_data::found, and INSN_P.

|

static |

A for_each_rtx subroutine of record_hard_reg_uses.

Return nonzero if register in range [REGNO, ENDREGNO) appears either explicitly or implicitly in X other than being stored into.

References contained within the substructure at LOC do not count. LOC may be zero, meaning don't ignore anything.

The contents of a REG_NONNEG note is always zero, so we must come here upon repeat in case the last REG_NOTE is a REG_NONNEG note.

If we are modifying the stack, frame, or argument pointer, it will clobber a virtual register. In fact, we could be more precise, but it isn't worth it.

If this is a SUBREG of a hard reg, we can see exactly which registers are being modified. Otherwise, handle normally.

Note setting a SUBREG counts as referring to the REG it is in for a pseudo but not for hard registers since we can treat each word individually.

X does not match, so try its subexpressions.

Referenced by use_crosses_set_p().

| int reg_mentioned_p | ( | ) |

Nonzero if register REG appears somewhere within IN. Also works if REG is not a register; in this case it checks for a subexpression of IN that is Lisp "equal" to REG.

Compare registers by number.

These codes have no constituent expressions and are unique.

These are kept unique for a given value.

References reg_mentioned_p(), XEXP, XVECEXP, and XVECLEN.

| int reg_overlap_mentioned_p | ( | ) |

Nonzero if modifying X will affect IN. If X is a register or a SUBREG, we check if any register number in X conflicts with the relevant register numbers. If X is a constant, return 0. If X is a MEM, return 1 iff IN contains a MEM (we don't bother checking for memory addresses that can't conflict because we expect this to be a rare case).

If either argument is a constant, then modifying X cannot affect IN. Here we look at IN; we can profitably combine CONSTANT_P (x) with the switch statement below.

Overly conservative.

If any register in here refers to it we return true.

References COND_EXEC_CODE, GET_CODE, note_stores(), REG_P, REGNO, SET, SET_DEST, SUBREG_REG, XEXP, and XVECEXP.

| int reg_referenced_p | ( | ) |

Nonzero if the old value of X, a register, is referenced in BODY. If X is entirely replaced by a new value and the only use is as a SET_DEST, we do not consider it a reference.

If the destination is anything other than CC0, PC, a REG or a SUBREG of a REG that occupies all of the REG, the insn references X if it is mentioned in the destination.

| int reg_set_between_p | ( | ) |

Nonzero if register REG is set or clobbered in an insn between FROM_INSN and TO_INSN (exclusive of those two).

| int reg_set_p | ( | ) |

Internals of reg_set_between_p.

We can be passed an insn or part of one. If we are passed an insn, check if a side-effect of the insn clobbers REG.

| int reg_used_between_p | ( | ) |

Nonzero if register REG is used in an insn between FROM_INSN and TO_INSN (exclusive of those two).

| rtx regno_use_in | ( | ) |

Searches X for any reference to REGNO, returning the rtx of the reference found if any. Otherwise, returns NULL_RTX.

| void remove_node_from_expr_list | ( | ) |

Search LISTP (an EXPR_LIST) for an entry whose first operand is NODE and remove that entry from the list if it is found.

A simple equality test is used to determine if NODE matches.

Splice the node out of the list.
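The search-and-splice step can be sketched with a pointer-to-pointer walk over a singly linked list. The `expr_cell` type and `remove_node` function are hypothetical stand-ins for an EXPR_LIST and are not GCC code; returning the removed cell is a convenience assumed here for testing.

```c
#include <assert.h>
#include <stddef.h>

/* Toy stand-in for an EXPR_LIST cell: a first operand and a link to the rest. */
struct expr_cell {
    const void *node;
    struct expr_cell *next;
};

/* Search *LISTP for a cell whose first operand is NODE, using a simple
   pointer equality test, and splice it out of the list.  Returns the
   removed cell, or NULL if NODE was not found. */
struct expr_cell *remove_node(const void *node, struct expr_cell **listp)
{
    assert(listp != NULL);
    for (struct expr_cell **link = listp; *link != NULL; link = &(*link)->next) {
        if ((*link)->node == node) {
            struct expr_cell *found = *link;
            *link = found->next;   /* splice the node out of the list */
            found->next = NULL;
            return found;
        }
    }
    return NULL;
}
```

Walking with a pointer to the link avoids a special case for removing the head of the list.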

| void remove_note | ( | ) |

Remove register note NOTE from the REG_NOTES of INSN.

References CASE_CONST_ANY, GET_CODE, MEM_VOLATILE_P, and RTX_CODE.

| void remove_reg_equal_equiv_notes | ( | ) |

Remove REG_EQUAL and/or REG_EQUIV notes if INSN has such notes.

| void remove_reg_equal_equiv_notes_for_regno | ( | ) |

Remove all REG_EQUAL and REG_EQUIV notes referring to REGNO.

This loop is a little tricky. We cannot just go down the chain because it is being modified by some actions in the loop. So we just iterate over the head. We plan to drain the list anyway.

This assert is generally triggered when someone deletes a REG_EQUAL or REG_EQUIV note by hacking the list manually rather than calling remove_note.

References volatile_insn_p(), XVECEXP, and XVECLEN.

| int replace_label | ( | ) |

Replace occurrences of the old label in *X with the new one. DATA is a REPLACE_LABEL_DATA containing the old and new labels.

Create a copy of constant C; replace the label inside but do not update LABEL_NUSES because uses in constant pool are not counted.

Add the new constant NEW_C to constant pool and replace the old reference to constant by new reference.

If this is a JUMP_INSN, then we also need to fix the JUMP_LABEL field. This is not handled by for_each_rtx because it doesn't handle unprinted ('0') fields.

References computed_jump_p_1(), XEXP, XVECEXP, and XVECLEN.

| rtx replace_rtx | ( | ) |

Replace any occurrence of FROM in X with TO. The function does not enter into CONST_DOUBLE for the replace.

Note that copying is not done so X must not be shared unless all copies are to be modified.

Allow this function to make replacements in EXPR_LISTs.

References ANY_RETURN_P, gcc_assert, JUMP_LABEL, JUMP_P, JUMP_TABLE_DATA_P, next_active_insn(), NEXT_INSN, NULL_RTX, and table.

| int rtx_addr_can_trap_p | ( | ) |

Return nonzero if the use of X as an address in a MEM can cause a trap.

|

static |

Return nonzero if the use of X as an address in a MEM can cause a trap. MODE is the mode of the MEM (not that of X) and UNALIGNED_MEMS controls whether nonzero is returned for unaligned memory accesses on strict alignment machines.

If the size of the access or of the symbol is unknown, assume the worst.

Else check that the access is in bounds. TODO: restructure expr_size/tree_expr_size/int_expr_size and just use the latter.

As in rtx_varies_p, we have to use the actual rtx, not reg number.

The arg pointer varies if it is not a fixed register.

All of the virtual frame registers are stack references.

An address is assumed not to trap if:

- it is the pic register plus a constant,

- or it is an address that can't trap plus a constant integer, with the proper remainder modulo the mode size if we are considering unaligned memory references.

If it isn't one of the cases above, it can cause a trap.

Referenced by volatile_refs_p().

| bool rtx_addr_varies_p | ( | ) |

Return 1 if X refers to a memory location whose address cannot be compared reliably with constant addresses, or if X refers to a BLKmode memory object. FOR_ALIAS is nonzero if we are called from alias analysis; if it is zero, we are slightly more conservative.

References GET_CODE, INSN_P, MEM_P, NULL_RTX, PATTERN, SET, SET_SRC, XEXP, and XVECEXP.

| int rtx_cost | ( | ) |

Return an estimate of the cost of computing rtx X. One use is in cse, to decide which expression to keep in the hash table. Another is in rtl generation, to pick the cheapest way to multiply. Other uses like the latter are expected in the future.

X appears as operand OPNO in an expression with code OUTER_CODE. SPEED specifies whether costs optimized for speed or size should be returned.

A size N times larger than UNITS_PER_WORD likely needs N times as many insns, taking N times as long.

Compute the default costs of certain things. Note that targetm.rtx_costs can override the defaults.

Multiplication has time-complexity O(N*N), where N is the number of units (translated from digits) when using schoolbook long multiplication.

Similarly, complexity for schoolbook long division.

Used in combine.c as a marker.

A SET doesn't have a mode, so let's look at the SET_DEST to get the mode for the factor.

Pass through.

If we can't tie these modes, make this expensive. The larger the mode, the more expensive it is.

Sum the costs of the sub-rtx's, plus cost of this operation, which is already in total.
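The size-based scaling described above can be sketched as follows. The word size, the cost unit and the per-operation base costs are illustrative assumptions, and the `TOY_` names are hypothetical; a real target can override all such defaults through targetm.rtx_costs.

```c
#include <assert.h>

#define TOY_UNITS_PER_WORD 4            /* assumed word size in bytes */
#define TOY_COSTS_N_INSNS(n) ((n) * 4)  /* assumed cost of N simple insns */

enum toy_op { TOY_PLUS, TOY_MULT, TOY_DIV };

/* Default cost of OP on a value of MODE_SIZE bytes, before any target
   override and before adding the costs of the operands. */
int toy_default_cost(enum toy_op op, int mode_size)
{
    assert(mode_size > 0);
    /* A size N times larger than a word likely needs N times as many insns. */
    int factor = mode_size / TOY_UNITS_PER_WORD;
    if (factor == 0)
        factor = 1;

    switch (op) {
    case TOY_MULT:
        /* Schoolbook long multiplication is O(N*N) in the number of units. */
        return factor * factor * TOY_COSTS_N_INSNS(5);
    case TOY_DIV:
        /* Similarly for schoolbook long division. */
        return factor * factor * TOY_COSTS_N_INSNS(7);
    default:
        return factor * TOY_COSTS_N_INSNS(1);
    }
}
```

Under these assumptions, doubling the operand size doubles the cost of an addition but quadruples the cost of a multiplication.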

| int rtx_referenced_p | ( | ) |

Return true if X is referenced in BODY.

|

static |

|

static |

When *BODY is equal to X or X is directly referenced by *BODY return nonzero, thus FOR_EACH_RTX stops traversing and returns nonzero too, otherwise FOR_EACH_RTX continues traversing *BODY.

Return true if a label_ref *BODY refers to label Y.

If *BODY is a reference to pool constant traverse the constant.

By default, compare the RTL expressions.

| int rtx_unstable_p | ( | ) |

Return 1 if the value of X is unstable (would be different at a different point in the program). The frame pointer, arg pointer, etc. are considered stable (within one function) and so is anything marked `unchanging'.

As in rtx_varies_p, we have to use the actual rtx, not reg number.

The arg pointer varies if it is not a fixed register.

??? When call-clobbered, the value is stable modulo the restore that must happen after a call. This currently screws up local-alloc into believing that the restore is not needed.

Fall through.

References arg_pointer_rtx, CASE_CONST_ANY, fixed_regs, frame_pointer_rtx, hard_frame_pointer_rtx, MEM_READONLY_P, MEM_VOLATILE_P, PIC_OFFSET_TABLE_REG_CALL_CLOBBERED, pic_offset_table_rtx, rtx_unstable_p(), and XEXP.

| bool rtx_varies_p | ( | ) |

Return 1 if X has a value that can vary even between two executions of the program. 0 means X can be compared reliably against certain constants or near-constants. FOR_ALIAS is nonzero if we are called from alias analysis; if it is zero, we are slightly more conservative. The frame pointer and the arg pointer are considered constant.

Note that we have to test for the actual rtx used for the frame and arg pointers and not just the register number in case we have eliminated the frame and/or arg pointer and are using it for pseudos.

The arg pointer varies if it is not a fixed register.

??? When call-clobbered, the value is stable modulo the restore that must happen after a call. This currently screws up local-alloc into believing that the restore is not needed, so we must return 0 only if we are called from alias analysis.

The operand 0 of a LO_SUM is considered constant (in fact it is related specifically to operand 1) during alias analysis.

Fall through.

References arg_pointer_rtx, CASE_CONST_ANY, fixed_regs, frame_pointer_rtx, hard_frame_pointer_rtx, MEM_READONLY_P, MEM_VOLATILE_P, PIC_OFFSET_TABLE_REG_CALL_CLOBBERED, pic_offset_table_rtx, rtx_varies_p(), and XEXP.

|

static |

Set the base part of address INFO to LOC, given that INNER is the unmutated value.

|

static |

Set the displacement part of address INFO to LOC, given that INNER is the constant term.

|

static |

Set the index part of address INFO to LOC, given that INNER is the unmutated value.

|

static |

Set the segment part of address INFO to LOC, given that INNER is the unmutated value.

| int set_noop_p | ( | ) |

Return nonzero if the destination of SET equals the source and there are no side effects.

| const_rtx set_of | ( | ) |

Given an INSN, return a SET or CLOBBER expression that does modify PAT (either directly or via STRICT_LOW_PART and similar modifiers).

Analyze RTL for GNU compiler. Copyright (C) 1987-2013 Free Software Foundation, Inc.

This file is part of GCC.

GCC is free software; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 3, or (at your option) any later version.

GCC is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You should have received a copy of the GNU General Public License along with GCC; see the file COPYING3. If not see http://www.gnu.org/licenses/.

Forward declarations

|

static |

References GET_MODE, hard_regno_nregs, REGNO, and SET_HARD_REG_BIT.

| int side_effects_p | ( | ) |

Similar to above, except that it also rejects register pre- and post- incrementing.

Reject CLOBBER with a non-VOID mode. These are made by combine.c when some combination can't be done. If we see one, don't think that we can simplify the expression.

Recursively scan the operands of this expression.

| int simplify_subreg_regno | ( | unsigned int | xregno, |

| enum machine_mode | xmode, | ||

| unsigned int | offset, | ||

| enum machine_mode | ymode | ||

| ) |

Return the number of a YMODE register to which

(subreg:YMODE (reg:XMODE XREGNO) OFFSET)

can be simplified. Return -1 if the subreg can't be simplified.

XREGNO is a hard register number.

We shouldn't simplify stack-related registers.

We should convert hard stack register in LRA if it is possible.

Try to get the register offset.

Make sure that the offsetted register value is in range.

See whether (reg:YMODE YREGNO) is valid.

??? We allow invalid registers if (reg:XMODE XREGNO) is also invalid. This is a kludge to work around how complex FP arguments are passed on IA-64 and should be fixed. See PR target/49226.

Referenced by resolve_reg_notes().

| rtx single_set_2 | ( | ) |

Given an INSN, return a SET expression if this insn has only a single SET. It may also have CLOBBERs, USEs, or SETs whose output will not be used, which we ignore.

We can consider insns having multiple sets, where all but one are dead, as single-set insns. In the common case only a single set is present in the pattern, so we want to avoid checking for REG_UNUSED notes unless necessary.

When we reach a set for the first time, we just expect it to be the single set we are looking for; only when more sets are found in the insn do we check them.

| void split_const | ( | ) |

Split X into a base and a constant offset, storing them in *BASE_OUT and *OFFSET_OUT respectively.

| void split_double | ( | ) |

Split up a CONST_DOUBLE or integer constant rtx into two rtx's for single words, storing in *FIRST the word that comes first in memory in the target and in *SECOND the other.

In this case the CONST_INT holds both target words.

Extract the bits from it into two word-sized pieces.

Sign extend each half to HOST_WIDE_INT.

Set sign_bit to the most significant bit of a word.

Set mask so that all bits of the word are set. We could have used 1 << BITS_PER_WORD instead of basing the calculation on sign_bit. However, on machines where HOST_BITS_PER_WIDE_INT == BITS_PER_WORD, it could cause a compiler warning, even though the code would never be executed.

Set sign_extend as any remaining bits.

Pick the lower word and sign-extend it.

Pick the higher word, shifted to the least significant bits, and sign-extend it.

Store the words in the target machine order.

The rule for using CONST_INT for a wider mode is that we regard the value as signed. So sign-extend it.

This is the old way we did CONST_DOUBLE integers.

In an integer, the words are defined as most and least significant. So order them by the target's convention.

Note, this converts the REAL_VALUE_TYPE to the target's format, splits up the floating point double and outputs exactly 32 bits of it into each of l[0] and l[1], not necessarily BITS_PER_WORD bits.

If 32 bits is an entire word for the target, but not for the host, then sign-extend on the host so that the number will look the same way on the host that it would on the target. See for instance simplify_unary_operation. The #if is needed to avoid compiler warnings.

References HARD_REGISTER_P, MEM_P, MEM_POINTER, ok_for_base_p_1(), REG_P, REG_POINTER, and REGNO.

| rtx* strip_address_mutations | ( | ) |

Strip outer address "mutations" from LOC and return a pointer to the inner value. If OUTER_CODE is nonnull, store the code of the innermost stripped expression there.

"Mutations" either convert between modes or apply some kind of extension, truncation or alignment.

Things like SIGN_EXTEND, ZERO_EXTEND and TRUNCATE can be used to convert between pointer sizes.

A [SIGN|ZERO]_EXTRACT from the least significant bit effectively acts as a combined truncation and extension.

(and ... (const_int -X)) is used to align to X bytes.

(subreg (operator ...) ...) inside an AND is used for mode conversion too.

| void subreg_get_info | ( | unsigned int | xregno, |

| enum machine_mode | xmode, | ||

| unsigned int | offset, | ||

| enum machine_mode | ymode, | ||

| struct subreg_info * | info | ||

| ) |

Fill in information about a subreg of a hard register.

- xregno - A regno of an inner hard subreg_reg (or what will become one).

- xmode - The mode of xregno.

- offset - The byte offset.

- ymode - The mode of a top level SUBREG (or what may become one).

- info - Pointer to structure to fill in.

If there are holes in a non-scalar mode in registers, we expect that it is made up of its units concatenated together.

You can only ask for a SUBREG of a value with holes in the middle if you don't cross the holes. (Such a SUBREG should be done by picking a different register class, or doing it in memory if necessary.) An example of a value with holes is XCmode on 32-bit x86 with -m128bit-long-double; it's represented in 6 32-bit registers, 3 for each part, but in memory it's two 128-bit parts. Padding is assumed to be at the end (not necessarily the 'high part') of each unit.

Paradoxical subregs are otherwise valid.

If this is a big endian paradoxical subreg, which uses more actual hard registers than the original register, we must return a negative offset so that we find the proper highpart of the register.

If registers store different numbers of bits in the different modes, we cannot generally form this subreg.

Lowpart subregs are otherwise valid.

This should always pass, otherwise we don't know how to verify the constraint. These conditions may be relaxed but subreg_regno_offset would need to be redesigned.

The XMODE value can be seen as a vector of NREGS_XMODE values. The subreg must represent a lowpart of given field. Compute what field it is.

Size of ymode must not be greater than the size of xmode.

Referenced by invert_exp_1(), and invert_jump_1().

| unsigned int subreg_lsb | ( | ) |

Given a subreg X, return the bit offset where the subreg begins (counting from the least significant bit of the reg).

| unsigned int subreg_lsb_1 | ( | enum machine_mode | outer_mode, |

| enum machine_mode | inner_mode, | ||

| unsigned int | subreg_byte | ||

| ) |

Helper function for subreg_lsb. Given a subreg's OUTER_MODE, INNER_MODE, and SUBREG_BYTE, return the bit offset where the subreg begins (counting from the least significant bit of the operand).

A paradoxical subreg begins at bit position 0.

If the subreg crosses a word boundary ensure that it also begins and ends on a word boundary.
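The bit-offset computation can be sketched under a simplifying assumption: word order and byte order within a word agree, so one endianness flag is enough. `toy_subreg_lsb` is a hypothetical name, modes are modeled by their sizes in bytes, and bytes are taken to be 8 bits wide.

```c
#include <assert.h>
#include <stdbool.h>

/* Bit offset, counted from the least significant bit of the operand, at
   which a subreg of OUTER_SIZE bytes starts inside an operand of
   INNER_SIZE bytes, given its byte offset SUBREG_BYTE in memory order.
   Assumes word and byte endianness agree. */
unsigned toy_subreg_lsb(unsigned outer_size, unsigned inner_size,
                        unsigned subreg_byte, bool big_endian)
{
    /* A paradoxical subreg begins at bit position 0. */
    if (outer_size > inner_size)
        return 0;

    assert(subreg_byte + outer_size <= inner_size);
    /* On a big-endian target, memory offset 0 names the most significant
       bytes, so count from the other end. */
    unsigned byte = big_endian ? inner_size - (subreg_byte + outer_size)
                               : subreg_byte;
    return byte * 8;
}
```

For a 4-byte piece of an 8-byte operand, byte offset 0 selects bits starting at 0 on a little-endian target and at 32 on a big-endian one.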

| unsigned int subreg_nregs | ( | ) |

Return the number of registers that a subreg expression refers to.

| unsigned int subreg_nregs_with_regno | ( | ) |

Return the number of registers that a subreg REG with REGNO expression refers to. This is a copy of the rtlanal.c:subreg_nregs changed so that the regno can be passed in.

| bool subreg_offset_representable_p | ( | unsigned int | xregno, |

| enum machine_mode | xmode, | ||

| unsigned int | offset, | ||

| enum machine_mode | ymode | ||

| ) |

This function returns true when the offset is representable via subreg_offset in the given regno.

- xregno - A regno of an inner hard subreg_reg (or what will become one).

- xmode - The mode of xregno.

- offset - The byte offset.

- ymode - The mode of a top level SUBREG (or what may become one).

- RETURN - Whether the offset is representable.

References CONST_CAST_RTX, i2, keep_with_call_p(), next_nonnote_insn(), and targetm.

Referenced by validate_subreg().

| unsigned int subreg_regno | ( | ) |

Return the final regno that a subreg expression refers to.

| unsigned int subreg_regno_offset | ( | unsigned int | xregno, |

| enum machine_mode | xmode, | ||

| unsigned int | offset, | ||

| enum machine_mode | ymode | ||

| ) |

This function returns the regno offset of a subreg expression.

- xregno - A regno of an inner hard subreg_reg (or what will become one).

- xmode - The mode of xregno.

- offset - The byte offset.

- ymode - The mode of a top level SUBREG (or what may become one).

- RETURN - The regno offset which would be used.

| bool swap_commutative_operands_p | ( | ) |

Return 1 iff it is necessary to swap the operands of a commutative operation in order to canonicalize the expression.

References GET_MODE_SIZE, subreg_info::nregs, and subreg_info::representable_p.

| bool tablejump_p | ( | ) |

If INSN is a tablejump return true and store the label (before jump table) to *LABELP and the jump table to *TABLEP. LABELP and TABLEP may be NULL.

References for_each_rtx_1(), GET_CODE, non_rtx_starting_operands, NULL_RTX, and XVECEXP.

| bool truncated_to_mode | ( | ) |

Suppose that truncation from the machine mode of X to MODE is not a no-op. See if there is anything special about X so that we can assume it already contains a truncated value of MODE.

This register has already been used in MODE without explicit truncation.

See if we already satisfy the requirements of MODE. If yes we can just switch to MODE.

| bool unsigned_reg_p | ( | ) |

Return TRUE if OP is a register or subreg of a register that holds an unsigned quantity. Otherwise, return FALSE.

| void update_address | ( | ) |

Update INFO after a change to the address it describes.

| int volatile_insn_p | ( | ) |

Nonzero if X contains any volatile instructions. These are instructions which may leave the machine in an unpredictable state, and thus no instructions or register uses should be moved or combined across them. This includes only volatile asms and UNSPEC_VOLATILE instructions.

Recursively scan the operands of this expression.

| int volatile_refs_p | ( | ) |

Nonzero if X contains any volatile memory references, UNSPEC_VOLATILE operations or volatile ASM_OPERANDS expressions.

Recursively scan the operands of this expression.

References CASE_CONST_ANY, GET_CODE, GET_MODE, MEM_NOTRAP_P, MEM_SIZE, MEM_SIZE_KNOWN_P, MEM_VOLATILE_P, rtx_addr_can_trap_p_1(), stack_pointer_rtx, targetm, and XEXP.

Variable Documentation

|

static |

Offset of the first 'e', 'E' or 'V' operand for each rtx code, or -1 if a code has no such operand.

Referenced by tablejump_p().

|

static |

Truncation narrows the mode from SOURCE mode to DESTINATION mode. If TARGET_MODE_REP_EXTENDED (DESTINATION, DESTINATION_REP) is SIGN_EXTEND then while narrowing we also have to enforce the representation and sign-extend the value to mode DESTINATION_REP.

If the value is already sign-extended to DESTINATION_REP mode we can just switch to DESTINATION mode on it. For each pair of integral modes SOURCE and DESTINATION, when truncating from SOURCE to DESTINATION, NUM_SIGN_BIT_COPIES_IN_REP[SOURCE][DESTINATION] contains the number of high-order bits in SOURCE that have to be copies of the sign-bit so that we can do this mode-switch to DESTINATION.
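The check this table supports can be sketched on plain integers. The sketch assumes that the number of copies required in the representation is the width difference between the two modes, which matches the description only when every intermediate step sign-extends; `count_sign_copies` and `already_sign_extended` are hypothetical names, not GCC functions.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Number of high-order bits of the WIDTH-bit VALUE equal to its sign bit. */
static unsigned count_sign_copies(uint64_t value, unsigned width)
{
    assert(width >= 1 && width <= 64);
    uint64_t sign = (value >> (width - 1)) & 1;
    unsigned copies = 0;
    for (unsigned i = width; i-- > 0 && ((value >> i) & 1) == sign; )
        copies++;
    return copies;
}

/* True if the SRC_WIDTH-bit VALUE is already the sign-extension of its
   low DST_WIDTH bits, so a switch to the narrower mode needs no explicit
   truncation. */
bool already_sign_extended(uint64_t value, unsigned src_width,
                           unsigned dst_width)
{
    assert(dst_width >= 1 && dst_width < src_width && src_width <= 64);
    /* Assumed table entry: the extension bits above the narrow mode. */
    unsigned copies_needed_in_rep = src_width - dst_width;
    /* One more copy is needed for the narrow mode's own sign bit. */
    return count_sign_copies(value, src_width) >= copies_needed_in_rep + 1;
}
```

A 64-bit value of 0x0000000080000000 fails the check for a 32-bit destination, because bit 31 differs from the 32 bits above it.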