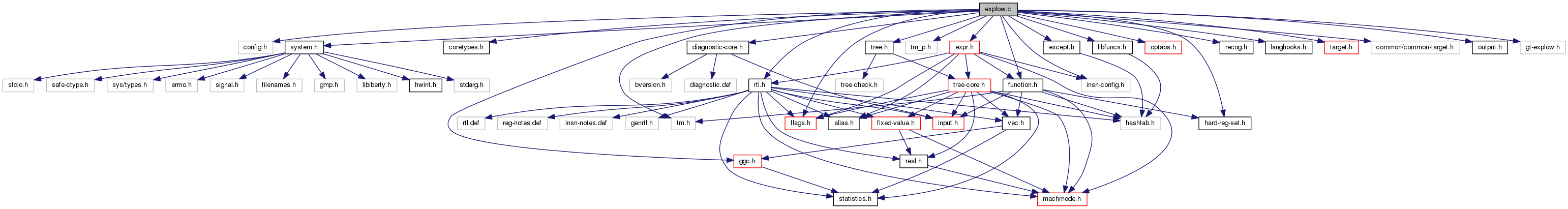

#include "config.h"
#include "system.h"
#include "coretypes.h"
#include "tm.h"
#include "diagnostic-core.h"
#include "rtl.h"
#include "tree.h"
#include "tm_p.h"
#include "flags.h"
#include "except.h"
#include "function.h"
#include "expr.h"
#include "optabs.h"
#include "libfuncs.h"
#include "hard-reg-set.h"
#include "insn-config.h"
#include "ggc.h"
#include "recog.h"
#include "langhooks.h"
#include "target.h"
#include "common/common-target.h"
#include "output.h"
#include "gt-explow.h"

Macros | |

| #define | PROBE_INTERVAL (1 << STACK_CHECK_PROBE_INTERVAL_EXP) |

| #define | STACK_GROW_OP PLUS |

| #define | STACK_GROW_OPTAB add_optab |

| #define | STACK_GROW_OFF(off) (off) |

Variables | |

| static bool | suppress_reg_args_size |

| static rtx | stack_check_libfunc |

Macro Definition Documentation

| #define PROBE_INTERVAL (1 << STACK_CHECK_PROBE_INTERVAL_EXP) |

Probe a range of stack addresses from FIRST to FIRST+SIZE, inclusive. FIRST is a constant and size is a Pmode RTX. These are offsets from the current stack pointer. STACK_GROWS_DOWNWARD says whether to add or subtract them from the stack pointer.

| #define STACK_GROW_OFF | ( | off | ) | (off) |

| #define STACK_GROW_OP PLUS |

| #define STACK_GROW_OPTAB add_optab |

Function Documentation

| void adjust_stack | ( | ) |

Adjust the stack pointer by ADJUST (an rtx for a number of bytes). This pops when ADJUST is positive. ADJUST need not be constant.

We expect all variable-sized adjustments to be a multiple of PREFERRED_STACK_BOUNDARY.

|

static |

A helper for adjust_stack and anti_adjust_stack.

Hereafter anti_p means subtract_p.

Referenced by promote_decl_mode().

| rtx allocate_dynamic_stack_space | ( | rtx | size, |

| unsigned | size_align, | ||

| unsigned | required_align, | ||

| bool | cannot_accumulate | ||

| ) |

Return an rtx representing the address of an area of memory dynamically pushed on the stack.

Any required stack pointer alignment is preserved.

SIZE is an rtx representing the size of the area.

SIZE_ALIGN is the alignment (in bits) that we know SIZE has. This parameter may be zero. If so, a proper value will be extracted from SIZE if it is constant, otherwise BITS_PER_UNIT will be assumed.

REQUIRED_ALIGN is the alignment (in bits) required for the region of memory.

If CANNOT_ACCUMULATE is set to TRUE, the caller guarantees that the stack space allocated by the generated code cannot be added with itself in the course of the execution of the function. It is always safe to pass FALSE here and the following criterion is sufficient in order to pass TRUE: every path in the CFG that starts at the allocation point and loops to it executes the associated deallocation code.

If we're asking for zero bytes, it doesn't matter what we point to since we can't dereference it. But return a reasonable address anyway.

Otherwise, show we're calling alloca or equivalent.

If stack usage info is requested, look into the size we are passed. We need to do so this early to avoid the obfuscation that may be introduced later by the various alignment operations.

Look into the last emitted insn and see if we can deduce something for the register.

If the size is not constant, we can't say anything.

Ensure the size is in the proper mode.

Adjust SIZE_ALIGN, if needed.

Watch out for overflow truncating to "unsigned".
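
As a rough illustration of how an alignment can be extracted from a constant SIZE: the lowest set bit of a byte count is the largest power of two that divides it. The sketch below is a standalone model, not the GCC source. The names `known_size_align_bits` and `BITS_PER_UNIT_MODEL` are hypothetical, 8 bits per unit is assumed, and the clamp applied on overflow is an assumption of this sketch.

```c
#include <assert.h>
#include <limits.h>
#include <stdint.h>

/* Assumption: 8 bits per addressable unit. */
#define BITS_PER_UNIT_MODEL 8u

/* Hypothetical helper: alignment in bits implied by a nonzero constant
   byte count.  The lowest set bit of SIZE is the largest power of two
   that divides it.  */
static unsigned known_size_align_bits(uint64_t size)
{
    assert(size != 0);                 /* zero-byte requests are handled earlier */
    uint64_t lsb = size & (~size + 1); /* isolate the lowest set bit */

    /* Watch out for overflow truncating to "unsigned": clamp to the
       largest power of two an unsigned can hold (assumed behaviour).  */
    if (lsb > UINT_MAX / BITS_PER_UNIT_MODEL)
        return 1u << (sizeof(unsigned) * CHAR_BIT - 1);
    return (unsigned)lsb * BITS_PER_UNIT_MODEL;
}
```

For example, a constant size of 24 bytes has lowest set bit 8, so the model reports 64 bits of known alignment.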

We can't attempt to minimize alignment necessary, because we don't know the final value of preferred_stack_boundary yet while executing this code.

We will need to ensure that the address we return is aligned to REQUIRED_ALIGN. If STACK_DYNAMIC_OFFSET is defined, we don't always know its final value at this point in the compilation (it might depend on the size of the outgoing parameter lists, for example), so we must align the value to be returned in that case. (Note that STACK_DYNAMIC_OFFSET will have a default nonzero value if STACK_POINTER_OFFSET or ACCUMULATE_OUTGOING_ARGS are defined). We must also do an alignment operation on the returned value if the stack pointer alignment is less strict than REQUIRED_ALIGN. If we have to align, we must leave space in SIZE for the hole that might result from the alignment operation.
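
The "hole" reasoning above can be sketched with plain integers. This is only a model under stated assumptions: `size_with_hole` and `align_address_up` are hypothetical helper names, addresses are treated as integers that grow upward, and alignments are given in bytes rather than bits.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: grow SIZE so that an aligned block of the
   original size fits wherever the raw block happens to start.  */
static size_t size_with_hole(size_t size, size_t align_bytes)
{
    assert(align_bytes != 0);
    return size + align_bytes - 1;
}

/* Hypothetical helper: round ADDR up to a multiple of ALIGN_BYTES by
   adding ALIGN_BYTES - 1, then doing a truncating divide and multiply.  */
static uintptr_t align_address_up(uintptr_t addr, size_t align_bytes)
{
    assert(align_bytes != 0);
    return (addr + (align_bytes - 1)) / align_bytes * align_bytes;
}
```

With a raw block at 0x1003 and a 16-byte requirement, the aligned address is 0x1010, and a 40-byte object placed there still ends inside the 55 bytes reserved by `size_with_hole(40, 16)`.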

??? STACK_POINTER_OFFSET is always defined now.

Round the size to a multiple of the required stack alignment. Since the stack is presumed to be rounded before this allocation, this will maintain the required alignment.

If the stack grows downward, we could save an insn by subtracting SIZE from the stack pointer and then aligning the stack pointer. The problem with this is that the stack pointer may be unaligned between the execution of the subtraction and alignment insns and some machines do not allow this. Even on those that do, some signal handlers malfunction if a signal should occur between those insns. Since this is an extremely rare event, we have no reliable way of knowing which systems have this problem. So we avoid even momentarily mis-aligning the stack.

The size is supposed to be fully adjusted at this point so record it if stack usage info is requested.

??? This is gross but the only safe stance in the absence of stack usage oriented flow analysis.

If we are splitting the stack, we need to ask the backend whether there is enough room on the current stack. If there isn't, or if the backend doesn't know how to tell us, then we need to call a function to allocate memory in some other way. This memory will be released when we release the current stack segment. The effect is that stack allocation becomes less efficient, but at least it doesn't cause a stack overflow.

The __morestack_allocate_stack_space function will allocate memory using malloc. If the alignment of the memory returned by malloc does not meet REQUIRED_ALIGN, we increase SIZE to make sure we allocate enough space.

We ought to be called always on the toplevel and stack ought to be aligned properly.

If needed, check that we have the required amount of stack. Take into account what has already been checked.

Don't let anti_adjust_stack emit notes.

Perform the required allocation from the stack. Some systems do this differently than simply incrementing/decrementing from the stack pointer, such as acquiring the space by calling malloc().

Check stack bounds if necessary.

Even if size is constant, don't modify stack_pointer_delta.

The constant size alloca should preserve crtl->preferred_stack_boundary alignment.

Finish up the split stack handling.

CEIL_DIV_EXPR needs to worry about the addition overflowing, but we know it can't. So add ourselves and then do TRUNC_DIV_EXPR.

Now that we've committed to a return value, mark its alignment.

Record the new stack level for nonlocal gotos.

References BITS_PER_UNIT, and PREFERRED_STACK_BOUNDARY.

Referenced by initialize_argument_information().

| void anti_adjust_stack | ( | ) |

Adjust the stack pointer by minus ADJUST (an rtx for a number of bytes). This pushes when ADJUST is positive. ADJUST need not be constant.

We expect all variable-sized adjustments to be a multiple of PREFERRED_STACK_BOUNDARY.

Referenced by fixup_args_size_notes().

| void anti_adjust_stack_and_probe | ( | ) |

Adjust the stack pointer by minus SIZE (an rtx for a number of bytes) while probing it. This pushes when SIZE is positive. SIZE need not be constant. If ADJUST_BACK is true, adjust back the stack pointer by plus SIZE at the end.

We skip the probe for the first interval + a small dope of 4 words and probe that many bytes past the specified size to maintain a protection area at the bottom of the stack.

First ensure SIZE is Pmode.

If we have a constant small number of probes to generate, that's the easy case.

Adjust SP and probe at PROBE_INTERVAL + N * PROBE_INTERVAL for values of N from 1 until it exceeds SIZE. If only one probe is needed, this will not generate any code. Then adjust and probe to PROBE_INTERVAL + SIZE.

In the variable case, do the same as above, but in a loop. Note that we must be extra careful with variables wrapping around because we might be at the very top (or the very bottom) of the address space and we have to be able to handle this case properly; in particular, we use an equality test for the loop condition.

Step 1: round SIZE to the previous multiple of the interval.

ROUNDED_SIZE = SIZE & -PROBE_INTERVAL

Step 2: compute initial and final value of the loop counter.

SP = SP_0 + PROBE_INTERVAL.

LAST_ADDR = SP_0 + PROBE_INTERVAL + ROUNDED_SIZE.

Step 3: the loop

    while (SP != LAST_ADDR)
      {
        SP = SP + PROBE_INTERVAL
        probe at SP
      }

adjusts SP and probes at PROBE_INTERVAL + N * PROBE_INTERVAL for values of N from 1 until it is equal to ROUNDED_SIZE.

Jump to END_LAB if SP == LAST_ADDR.

SP = SP + PROBE_INTERVAL and probe at SP.

Step 4: adjust SP and probe at PROBE_INTERVAL + SIZE if we cannot assert at compile-time that SIZE is equal to ROUNDED_SIZE.

TEMP = SIZE - ROUNDED_SIZE.

Manual CSE if the difference is not known at compile-time.

Adjust back and account for the additional first interval.

References gcc_assert, GET_CLASS_NARROWEST_MODE, GET_MODE, GET_MODE_SIZE, GET_MODE_WIDER_MODE, HOST_WIDE_INT, int_size_in_bytes(), PUT_MODE, REG_P, and targetm.
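
The variable-size steps of anti_adjust_stack_and_probe can be modelled with ordinary unsigned arithmetic, which also shows why the loop uses an equality test. This is a simulation sketch, not the RTL-emitting code: `adjust_and_probe`, `struct probe_trace` and `PROBE_INTERVAL_MODEL` are hypothetical names, the interval is assumed to be 4096 bytes, "+" stands for "in the direction of stack growth", and the small dope mentioned above is left out.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define PROBE_INTERVAL_MODEL UINT64_C(4096) /* assumption: 1 << 12 */
#define MAX_PROBES 64

/* Hypothetical record of the SP values at which a probe was issued. */
struct probe_trace {
    uint64_t at[MAX_PROBES];
    size_t count;
};

static void probe_at(struct probe_trace *trace, uint64_t sp)
{
    assert(trace->count < MAX_PROBES);
    trace->at[trace->count++] = sp;
}

/* Model of the variable-size path.  All arithmetic is unsigned and may
   wrap around.  Returns the final SP.  */
static uint64_t adjust_and_probe(uint64_t sp0, uint64_t size, bool adjust_back,
                                 struct probe_trace *trace)
{
    /* Step 1: ROUNDED_SIZE = SIZE & -PROBE_INTERVAL. */
    uint64_t rounded_size = size & ~(PROBE_INTERVAL_MODEL - 1);

    /* Step 2: initial and final values of the loop counter. */
    uint64_t sp = sp0 + PROBE_INTERVAL_MODEL;
    uint64_t last_addr = sp + rounded_size;

    /* Step 3: an equality test still terminates correctly when the
       addresses wrap around the end of the address space.  */
    while (sp != last_addr) {
        sp += PROBE_INTERVAL_MODEL;
        probe_at(trace, sp);
    }

    /* Step 4: the final partial interval, if there is one. */
    if (size != rounded_size) {
        sp += size - rounded_size;
        probe_at(trace, sp);
    }

    /* Adjust back and account for the additional first interval. */
    sp -= PROBE_INTERVAL_MODEL;
    if (adjust_back)
        sp -= size;
    return sp;
}
```

Starting the model close to the top of the 64-bit range, for instance at `UINT64_MAX - 5000` with a size of 10000, makes LAST_ADDR wrap past zero. An ordering comparison such as `sp < last_addr` would stop immediately in that situation, while the equality test still issues both full-interval probes.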

Subroutines for manipulating rtx's in semantically interesting ways. Copyright (C) 1987-2013 Free Software Foundation, Inc.

This file is part of GCC.

GCC is free software; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 3, or (at your option) any later version.

GCC is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You should have received a copy of the GNU General Public License along with GCC; see the file COPYING3. If not see http://www.gnu.org/licenses/.

|

static |

Return a copy of X in which all memory references and all constants that involve symbol refs have been replaced with new temporary registers. Also emit code to load the memory locations and constants into those registers.

If X contains no such constants or memory references, X itself (not a copy) is returned.

If a constant is found in the address that is not a legitimate constant in an insn, it is left alone in the hope that it might be valid in the address.

X may contain no arithmetic except addition, subtraction and multiplication. Values returned by expand_expr with 1 for sum_ok fit this constraint.

| rtx convert_memory_address_addr_space | ( | enum machine_mode | to_mode, |

| rtx | x, | ||

| addr_space_t | as | ||

| ) |

Given X, a memory address in address space AS' pointer mode, convert it to an address in the address space's address mode, or vice versa (TO_MODE says which way). We take advantage of the fact that pointers are not allowed to overflow by commuting arithmetic operations over conversions so that address arithmetic insns can be used.

References CASE_CONST_SCALAR_INT, CONST_INT_P, convert_memory_address_addr_space(), GET_CODE, GET_MODE, GET_MODE_SIZE, LABEL_REF_NONLOCAL_P, PUT_MODE, REG_POINTER, shallow_copy_rtx, simplify_unary_operation(), SUBREG_PROMOTED_VAR_P, SUBREG_REG, and XEXP.

Referenced by addr_expr_of_non_mem_decl_p_1(), and convert_memory_address_addr_space().

| rtx copy_addr_to_reg | ( | ) |

Like copy_to_reg but always give the new register mode Pmode in case X is a constant.

| rtx copy_to_mode_reg | ( | ) |

Like copy_to_reg but always give the new register mode MODE in case X is a constant.

If not an operand, must be an address with PLUS and MULT so do the computation.

Referenced by get_atomic_op_for_code(), get_def_for_expr(), and move_by_pieces().

| rtx copy_to_reg | ( | ) |

Copy the value or contents of X to a new temp reg and return that reg.

If not an operand, must be an address with PLUS and MULT so do the computation.

Referenced by assign_parm_adjust_entry_rtl(), can_compare_and_swap_p(), and expand_builtin_return_addr().

| rtx copy_to_suggested_reg | ( | ) |

| rtx eliminate_constant_term | ( | ) |

If X is a sum, return a new sum like X but lacking any constant terms. Add all the removed constant terms into *CONSTPTR. X itself is not altered. The result != X if and only if it is not isomorphic to X.

First handle constants appearing at this level explicitly.

References XEXP.

| void emit_stack_probe | ( | ) |

Emit one stack probe at ADDRESS, an address within the stack.

See if we have an insn to probe the stack.

| void emit_stack_restore | ( | ) |

Restore the stack pointer for the purpose in SAVE_LEVEL. SA is the save area made by emit_stack_save. If it is zero, we have nothing to do.

The default is that we use a move insn.

If stack_realign_drap, the x86 backend emits a prologue that aligns both STACK_POINTER and HARD_FRAME_POINTER. If stack_realign_fp, the x86 backend emits a prologue that aligns only STACK_POINTER. This renders the HARD_FRAME_POINTER unusable for accessing aligned variables, which is reflected in ix86_can_eliminate. We normally still have the realigned STACK_POINTER that we can use. But if there is a stack restore still present at reload, it can trigger mark_not_eliminable for the STACK_POINTER, leaving no way to eliminate FRAME_POINTER into a hard reg. To prevent this situation, we force need_drap if we emit a stack restore.

See if this machine has anything special to do for this kind of save.

These clobbers prevent the scheduler from moving

references to variable arrays below the code

that deletes (pops) the arrays.

Referenced by expand_null_return_1().

| void emit_stack_save | ( | ) |

Save the stack pointer for the purpose in SAVE_LEVEL. PSAVE is a pointer to a previously-created save area. If no save area has been allocated, this function will allocate one. If a save area is specified, it must be of the proper mode.

The default is that we use a move insn and save in a Pmode object.

See if this machine has anything special to do for this kind of save.

If there is no save area and we have to allocate one, do so. Otherwise verify the save area is the proper mode.

References SAVE_BLOCK, SAVE_FUNCTION, and SAVE_NONLOCAL.

Referenced by initialize_argument_information().

| rtx expr_size | ( | ) |

Return an rtx for the size in bytes of the value of EXP.

Referenced by count_type_elements(), and initialize_argument_information().

| rtx force_not_mem | ( | ) |

If X is a memory ref, copy its contents to a new temp reg and return that reg. Otherwise, return X.

References targetm.

Referenced by expand_value_return().

| rtx force_reg | ( | ) |

Load X into a register if it is not already one. Use mode MODE for the register. X should be valid for mode MODE, but it may be a constant which is valid for all integer modes; that's why caller must specify MODE.

The caller must not alter the value in the register we return, since we mark it as a "constant" register.

Let optimizers know that TEMP's value never changes and that X can be substituted for it. Don't get confused if INSN set something else (such as a SUBREG of TEMP).

Let optimizers know that TEMP is a pointer, and if so, the known alignment of that pointer.

References BITS_PER_UNIT, ctz_hwi(), DECL_ALIGN, DECL_P, INTVAL, MIN, SYMBOL_REF_DECL, and XEXP.

Referenced by expand_mult_highpart_adjust(), extract_split_bit_field(), no_conflict_move_test(), and swap_commutative_operands_with_target().

| rtx hard_function_value | ( | const_tree | valtype, |

| const_tree | func, | ||

| const_tree | fntype, | ||

| int | outgoing | ||

| ) |

Return an rtx representing the register or memory location in which a scalar value of data type VALTYPE was returned by a function call to function FUNC. FUNC is a FUNCTION_DECL, FNTYPE a FUNCTION_TYPE node if the precise function is known, otherwise 0. OUTGOING is 1 if on a machine with register windows this function should return the register in which the function will put its result and 0 otherwise.

int_size_in_bytes can return -1. We don't need a check here since the value of bytes will then be large enough that no mode will match anyway.

Have we found a large enough mode?

No suitable mode found.

Referenced by get_next_funcdef_no().

| rtx hard_libcall_value | ( | ) |

Return an rtx representing the register or memory location in which a scalar value of mode MODE was returned by a library call.

| HOST_WIDE_INT int_expr_size | ( | ) |

Return a wide integer for the size in bytes of the value of EXP, or -1 if the size can vary or is larger than an integer.

| rtx memory_address_addr_space | ( | ) |

Return something equivalent to X but valid as a memory address for something of mode MODE in the named address space AS. When X is not itself valid, this works by copying X or subexpressions of it into registers.

By passing constant addresses through registers we get a chance to cse them.

We get better cse by rejecting indirect addressing at this stage. Let the combiner create indirect addresses where appropriate. For now, generate the code so that the subexpressions useful to share are visible. But not if cse won't be done!

At this point, any valid address is accepted.

If it was valid before but breaking out memory refs invalidated it, use it the old way.

Perform machine-dependent transformations on X in certain cases. This is not necessary since the code below can handle all possible cases, but machine-dependent transformations can make better code.

PLUS and MULT can appear in special ways as the result of attempts to make an address usable for indexing. Usually they are dealt with by calling force_operand, below. But a sum containing constant terms is special if removing them makes the sum a valid address: then we generate that address in a register and index off of it. We do this because it often makes shorter code, and because the addresses thus generated in registers often become common subexpressions.

If we have a register that's an invalid address, it must be a hard reg of the wrong class. Copy it to a pseudo.

Last resort: copy the value to a register, since the register is a valid address.

If we didn't change the address, we are done. Otherwise, mark a reg as a pointer if we have REG or REG + CONST_INT.

OLDX may have been the address on a temporary. Update the address to indicate that X is now used.

| rtx plus_constant | ( | ) |

Return an rtx for the sum of X and the integer C, given that X has mode MODE.

Sorry, we have no way to represent overflows this wide. To fix, add constant support wider than CONST_DOUBLE.

If this is a reference to the constant pool, try replacing it with a reference to a new constant. If the resulting address isn't valid, don't return it because we have no way to validize it.

If adding to something entirely constant, set a flag so that we can add a CONST around the result.

The interesting case is adding the integer to a sum. Look for constant term in the sum and combine with C. For an integer constant term or a constant term that is not an explicit integer, we combine or group them together anyway.

We may not immediately return from the recursive call here, lest all_constant gets lost.

We need to be careful since X may be shared and we can't modify it in place.

Referenced by compute_const_anchors(), emit_library_call_value_1(), emit_move_insn_1(), emit_notes_for_differences_2(), get_def_for_expr(), lra_eliminate_regs_1(), maybe_memory_address_addr_space_p(), move_by_pieces(), note_reg_elim_costly(), rtx_for_function_call(), set_decl_origin_self(), set_label_offsets(), setup_elimination_map(), and simplify_plus_minus_op_data_cmp().

| void probe_stack_range | ( | ) |

First ensure SIZE is Pmode.

Next see if we have a function to check the stack.

Next see if we have an insn to check the stack.

Otherwise we have to generate explicit probes. If we have a constant small number of them to generate, that's the easy case.

Probe at FIRST + N * PROBE_INTERVAL for values of N from 1 until it exceeds SIZE. If only one probe is needed, this will not generate any code. Then probe at FIRST + SIZE.

In the variable case, do the same as above, but in a loop. Note that we must be extra careful with variables wrapping around because we might be at the very top (or the very bottom) of the address space and we have to be able to handle this case properly; in particular, we use an equality test for the loop condition.

Step 1: round SIZE to the previous multiple of the interval.

ROUNDED_SIZE = SIZE & -PROBE_INTERVAL

Step 2: compute initial and final value of the loop counter.

TEST_ADDR = SP + FIRST.

LAST_ADDR = SP + FIRST + ROUNDED_SIZE.

Step 3: the loop

    while (TEST_ADDR != LAST_ADDR)
      {
        TEST_ADDR = TEST_ADDR + PROBE_INTERVAL
        probe at TEST_ADDR
      }

probes at FIRST + N * PROBE_INTERVAL for values of N from 1 until it is equal to ROUNDED_SIZE.

Jump to END_LAB if TEST_ADDR == LAST_ADDR.

TEST_ADDR = TEST_ADDR + PROBE_INTERVAL.

Probe at TEST_ADDR.

Step 4: probe at FIRST + SIZE if we cannot assert at compile-time that SIZE is equal to ROUNDED_SIZE.

TEMP = SIZE - ROUNDED_SIZE.

Use [base + disp] addressing mode if supported.

Manual CSE if the difference is not known at compile-time.
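
For the constant-size "easy case" of probe_stack_range described earlier in this entry, the set of probed offsets can be listed directly. The sketch below only computes offsets and emits no instructions. `constant_probe_offsets` and `PROBE_INTERVAL_MODEL` are hypothetical names, the interval is assumed to be 4096 bytes, and offsets are measured from the stack pointer in the direction of stack growth.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define PROBE_INTERVAL_MODEL UINT64_C(4096) /* assumption: 1 << 12 */

/* Hypothetical helper: store in OUT the offsets probed for a constant
   SIZE and return how many there are.  CAP is the capacity of OUT.  */
static size_t constant_probe_offsets(uint64_t first, uint64_t size,
                                     uint64_t *out, size_t cap)
{
    size_t n = 0;

    /* Probe at FIRST + N * PROBE_INTERVAL for N from 1 until it exceeds
       SIZE.  When one probe is enough, this loop body never runs.  */
    for (uint64_t i = PROBE_INTERVAL_MODEL; i < size; i += PROBE_INTERVAL_MODEL) {
        assert(n < cap);
        out[n++] = first + i;
    }

    /* Then probe at FIRST + SIZE. */
    assert(n < cap);
    out[n++] = first + size;
    return n;
}
```

With FIRST = 1024 and SIZE = 10000 the model yields three offsets: 5120, 9216 and 11024. With SIZE = 4096 only the final probe at 5120 remains.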

| enum machine_mode promote_decl_mode | ( | ) |

Use one of promote_mode or promote_function_mode to find the promoted mode of DECL. If PUNSIGNEDP is not NULL, store there the unsignedness of DECL after promotion.

References adjust_stack_1(), const0_rtx, CONST_INT_P, INTVAL, and stack_pointer_delta.

Referenced by elim_forward().

| enum machine_mode promote_function_mode | ( | const_tree | type, |

| enum machine_mode | mode, | ||

| int * | punsignedp, | ||

| const_tree | funtype, | ||

| int | for_return | ||

| ) |

Return the mode to use to pass or return a scalar of TYPE and MODE. PUNSIGNEDP points to the signedness of the type and may be adjusted to show what signedness to use on extension operations.

FOR_RETURN is nonzero if the caller is promoting the return value of FNDECL, else it is for promoting args.

Called without a type node for a libcall.

References targetm, TREE_TYPE, and TYPE_ADDR_SPACE.

Referenced by assign_parm_remove_parallels(), initialize_argument_information(), promote_mode(), and resolve_operand_name_1().

| enum machine_mode promote_mode | ( | const_tree | type, |

| enum machine_mode | mode, | ||

| int * | punsignedp | ||

| ) |

Return the mode to use to store a scalar of TYPE and MODE. PUNSIGNEDP points to the signedness of the type and may be adjusted to show what signedness to use on extension operations.

For libcalls this is invoked without TYPE from the backends TARGET_PROMOTE_FUNCTION_MODE hooks. Don't do anything in that case.

FIXME: this is the same logic that was there until GCC 4.4, but we probably want to test POINTERS_EXTEND_UNSIGNED even if PROMOTE_MODE is not defined. The affected targets are M32C, S390, SPARC.

References DECL_MODE, promote_function_mode(), promote_mode(), TREE_CODE, TREE_TYPE, and TYPE_UNSIGNED.

Referenced by compute_argument_block_size(), default_promote_function_mode(), default_promote_function_mode_always_promote(), and promote_mode().

|

static |

Round the size of a block to be pushed up to the boundary required by this machine. SIZE is the desired size, which need not be constant.

If crtl->preferred_stack_boundary might still grow, use virtual_preferred_stack_boundary_rtx instead. This will be substituted by the right value in vregs pass and optimized during combine.

CEIL_DIV_EXPR needs to worry about the addition overflowing, but we know it can't. So add ourselves and then do TRUNC_DIV_EXPR.
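
For a constant SIZE, the rounding described here amounts to a ceiling division written as an add followed by a truncating divide. A minimal sketch, assuming a boundary given in bits and 8 bits per unit; `round_push_model` and `BITS_PER_UNIT_MODEL` are hypothetical names, and the virtual_preferred_stack_boundary_rtx path is not modelled.

```c
#include <assert.h>
#include <stdint.h>

/* Assumption: 8 bits per addressable unit. */
#define BITS_PER_UNIT_MODEL 8u

/* Hypothetical helper: round SIZE (in bytes) up to the stack boundary,
   which is given in bits.  */
static uint64_t round_push_model(uint64_t size, unsigned boundary_bits)
{
    uint64_t align = boundary_bits / BITS_PER_UNIT_MODEL;
    assert(align != 0);
    if (align == 1)
        return size;                          /* nothing to round */

    /* The caller is expected to know the addition cannot overflow. */
    assert(size <= UINT64_MAX - (align - 1));
    return (size + align - 1) / align * align; /* add, then truncating divide */
}
```

With a 128-bit boundary, sizes 1 through 16 round to 16 bytes and 17 rounds to 32.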

| int rtx_to_tree_code | ( | ) |

Look up the tree code for a given rtx code to provide the arithmetic operation for REAL_ARITHMETIC. The function returns an int because the caller may not know what `enum tree_code' means.

| void set_stack_check_libfunc | ( | ) |

References gen_label_rtx().

| HOST_WIDE_INT trunc_int_for_mode | ( | ) |

Truncate and perhaps sign-extend C as appropriate for MODE.

You want to truncate to a *what*?

Canonicalize BImode to 0 and STORE_FLAG_VALUE.

Sign-extend for the requested mode.

Referenced by find_single_use(), make_extraction(), make_field_assignment(), noce_try_store_flag(), register_operand(), swap_commutative_operands_with_target(), and validate_simplify_insn().
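
The truncate-then-sign-extend step of trunc_int_for_mode can be illustrated on plain 64-bit integers. This is a sketch under assumptions, not the GCC implementation: the mode is replaced by a bit width, `trunc_to_width` is a hypothetical name, and the BImode canonicalization is not modelled.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: keep the low WIDTH bits of C and sign-extend
   the result back to 64 bits.  */
static int64_t trunc_to_width(int64_t c, unsigned width)
{
    assert(width >= 1 && width <= 64);
    if (width == 64)
        return c;

    uint64_t mask = (UINT64_C(1) << width) - 1;
    uint64_t sign = UINT64_C(1) << (width - 1);
    uint64_t low = (uint64_t)c & mask;        /* truncate */

    /* (low ^ sign) - sign is the usual branch-free sign extension.  The
       final conversion assumes two's complement wraparound.  */
    return (int64_t)((low ^ sign) - sign);
}
```

For an 8-bit width, 0xFF becomes -1, 0x180 becomes -128, and 256 becomes 0.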

| void update_nonlocal_goto_save_area | ( | void | ) |

Invoke emit_stack_save on the nonlocal_goto_save_area for the current function. This function should be called whenever we allocate or deallocate dynamic stack space.

The nonlocal_goto_save_area object is an array of N pointers. The first one is used for the frame pointer save; the rest are sized by STACK_SAVEAREA_MODE. Create a reference to array index 1, the first of the stack save area slots.

| rtx use_anchored_address | ( | ) |

If X is a memory reference to a member of an object block, try rewriting it to use an anchor instead. Return the new memory reference on success and the old one on failure.

Split the address into a base and offset.

Check whether BASE is suitable for anchors.

Decide where BASE is going to be.

Get the anchor we need to use.

Work out the offset from the anchor.

If we're going to run a CSE pass, force the anchor into a register. We will then be able to reuse registers for several accesses, if the target costs say that that's worthwhile.

Referenced by emit_move_ccmode().

| rtx validize_mem | ( | ) |

Convert a mem ref into one with a valid memory address. Pass through anything else unchanged.

Don't alter REF itself, since that is probably a stack slot.

Referenced by maybe_set_first_label_num(), mem_overlaps_already_clobbered_arg_p(), and seq_cost().

Variable Documentation

|

static |

A front end may want to override GCC's stack checking by providing a run-time routine to call to check the stack, so provide a mechanism for calling that routine.

|

static |

Controls the behaviour of {anti_,}adjust_stack.